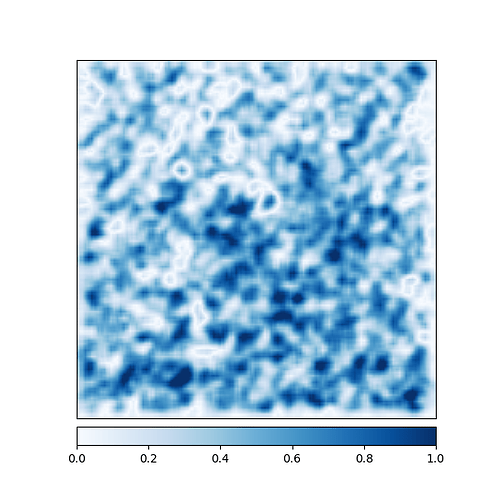

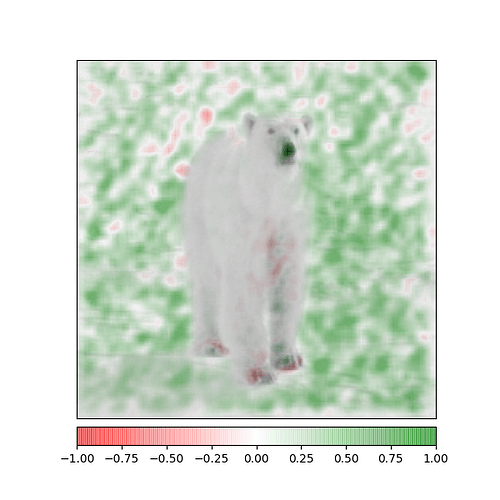

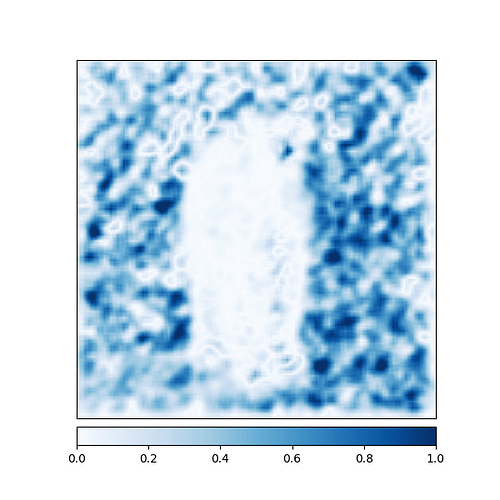

Hi, I am currently training a CNN for binary classification of polar bear images. After only a few epochs it classifies about 80% of my positive examples correctly, even in my validation set. However, if I show the trained model a blank white image, it assigns it to the polar bear class with high confidence. This leads me to suspect that it bases its prediction on the number of white pixels in the input image. I thought about adding a lot of white images as negative examples to my data, but I'm not sure whether this would be the right approach.
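To make the suspicion concrete, here is a rough sketch of how the brightness dependence could be probed: feed the model uniform images from black to white and watch how the output moves. The helper `probe_brightness` and the stand-in `MeanBrightness` module are hypothetical names made up for this example, and I am assuming 224x224 inputs scaled to [0, 1] with no further normalization. With the real network, the trained model would be passed in instead of the stand-in.

```python
import torch
import torch.nn as nn


def probe_brightness(model, size=224, levels=(0.0, 0.25, 0.5, 0.75, 1.0)):
    """Return the model's output for uniform images of each brightness level."""
    model.eval()  # disable dropout so the outputs are deterministic
    results = {}
    with torch.no_grad():
        for level in levels:
            # One RGB image filled with a single value (1.0 = pure white,
            # assuming inputs are scaled to [0, 1] without normalization).
            image = torch.full((1, 3, size, size), float(level))
            results[level] = model(image).item()
    return results


# Hypothetical stand-in for the trained network, only here to make the
# sketch runnable: it scores an image by its mean pixel value, which is
# exactly the shortcut I suspect my model has learned.
class MeanBrightness(nn.Module):
    def forward(self, x):
        return x.mean(dim=(1, 2, 3)).unsqueeze(1)  # shape (batch, 1)


scores = probe_brightness(MeanBrightness(), size=32)
for level, score in scores.items():
    print(f"brightness {level:.2f} -> output {score:.3f}")
```

If the real model's output rises steadily with brightness in the same way, that would support the idea that it mostly counts white pixels rather than recognizing bears.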

My model looks like this:

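    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class Net(torch.nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(3, 6, 3, 1)   # 3 input channels, 6 filters, 3x3 kernel, stride 1
            self.conv2 = nn.Conv2d(6, 16, 3, 1)
            self.dropout1 = nn.Dropout2d(0.4)
            # Dropout2d is meant for feature maps; nn.Dropout is the usual choice after a linear layer
            self.dropout2 = nn.Dropout2d(0.4)
            self.fc1 = nn.Linear(46656, 64)      # 46656 = 16 * 54 * 54
            self.fc2 = nn.Linear(64, 1)
            #self.fc3=nn.Linear(2000,128)
            #self.fc4=nn.Linear(128,1)

        def forward(self, x):
            CELU = torch.nn.CELU()
            Tanh = torch.nn.Tanh()               # defined but never used
            x = self.conv1(x)
            x = self.dropout1(x)
            x = CELU(x)
            x = F.max_pool2d(x, 2)
            x = self.conv2(x)
            x = CELU(x)
            x = F.max_pool2d(x, 2)
            x = torch.flatten(x, 1)
            x = CELU(x)                          # second CELU on the same activations
            x = self.fc1(x)
            x = CELU(x)
            x = self.dropout2(x)
            x = self.fc2(x)
            output = torch.sigmoid(x)            # probability of the polar bear class
            return output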
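For reference, the 46656 input features of `fc1` can be reproduced with a little shape arithmetic. This is only a sketch: the helper names are made up for the example, and the 224x224 input size is my assumption (any size from 222 to 225 gives the same number, because max pooling rounds down).

```python
def conv_out(size, kernel=3, stride=1):
    """Spatial size after an unpadded convolution."""
    return (size - kernel) // stride + 1


def pool_out(size, kernel=2):
    """Spatial size after max pooling with stride equal to the kernel size."""
    return size // kernel


def flattened_features(size, channels=16):
    """Number of features entering fc1 for a square input of the given size."""
    size = pool_out(conv_out(size))  # conv1 followed by the first max pool
    size = pool_out(conv_out(size))  # conv2 followed by the second max pool
    return channels * size * size


# Assumed input resolution of 224x224: 224 -> 222 -> 111 -> 109 -> 54
print(flattened_features(224))
```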