Hi!

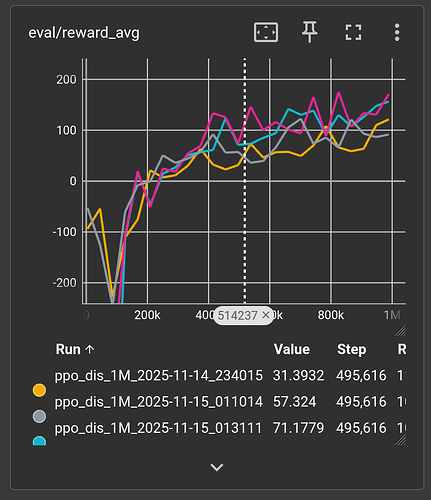

I am using PPO to try to learn LunarLander-v3 in torchrl. I am having good results (avg reward > 280) using stable-baselines3 (sb3) with less than 1M steps. The thing is, I have tried a bunch of different things listed thereafter but the performance seems to be on average poor-ish (~150) in torchrl and never quite as high as sb3. Worse, it seems some updates made make the policy regress compared to what it was in the past (going back to episode length 1000 => lander is just hovering again instead of trying to land). There is in both cases exploration but I do not expect the exploration to degrade the current solution that much.

So, I started off the torchRL version off the python notebook related to double inverted pendulum found on this page.

Here is a couple of things I have changed compared to the example:

- Set all hyperparams the same as sb3

- Use the same models for both value and policy (MLP of 3 layers, 16 wide, same tanh activation) as sb3

- Set the policy as

ProbabilisticActor

policy_module = ProbabilisticActor( module=policy_module, spec=single_env.action_spec, in_keys=["logits"], distribution_class=OneHotCategorical, return_log_prob=True ) - Removed the annealing scheduler

- Evaluate on parallel env

I have attached several screenshots to compare both version results and full code for LunarLander-v3

Here are a few things I have tried without any luck:

- Took the trained VecNormalize output of sb3 to replace loc and scale tensors after

single_env.transform[0].init_stats(num_iter=10000, reduce_dim=0, cat_dim=0). It learns absolutly nothing and gets stuck with reward 0. - Changing the norm from

smooth_l1tol2but the critic values are even higher than with smooth_l1 (which seem to be already quite large 16 at t0 then ~1-2 on avg) - Increase both

frames_per_batchandsub_batch_sizeto have more stable grads / learning

Here are some differences between torchrl and sb3:

- Original torchrl notebook has a replay buffer, sb3 does not

- sb3 has 8 training envs and 8 evals envs, torchrl has 1 training, 8 for eval. I was not confident yet modifying original code to add vec_env given the problem stated here.

- [EDIT] I believe the env is normalized just during the beginning where in sb3 it is constantly updating

Questions:

-Could it be because I am using OneHotCaterogical and only one thruster is on ?

-Do you have any idea of what could be wrong here ?

Finally, note that I have a solid experience developing code on computers, and I am not very familiar with RL theory and training models. It may be something obvious.

Thanks in advance !

Full code

#!/usr/bin/env python3

import torch

import warnings

warnings.filterwarnings("ignore")

from collections import defaultdict

from datetime import datetime

from os import environ

from tensordict.nn import TensorDictModule

from tensordict.nn.distributions import NormalParamExtractor

from torch import multiprocessing, nn, tensor

from torch.distributions.categorical import Categorical as TorchCategorical

from torch.utils.tensorboard import SummaryWriter

from torchrl.collectors import SyncDataCollector

from torchrl.data.replay_buffers import ReplayBuffer

from torchrl.data.replay_buffers.samplers import SamplerWithoutReplacement

from torchrl.data.replay_buffers.storages import LazyTensorStorage

from torchrl.envs import (Compose, DoubleToFloat, EnvCreator, ObservationNorm, ParallelEnv, StepCounter, TransformedEnv, step_mdp)

from torchrl.envs.libs.gym import GymEnv

from torchrl.envs.utils import check_env_specs, ExplorationType, set_exploration_type

from torchrl.modules import OneHotCategorical, ProbabilisticActor, TanhNormal, ValueOperator

from torchrl.objectives import ClipPPOLoss

from torchrl.objectives.value import GAE

from tqdm import tqdm

if __name__ == "__main__":

device = "cpu"

num_cells = 16 # number of cells in each layer i.e. output dim.

lr = 3e-4

max_grad_norm = 0.5

p_env_count = 8

frames_per_batch = 2048

# For a complete training, bring the number of frames up to 1M

total_frames = 1_000_000

sub_batch_size = 64 # cardinality of the sub-samples gathered from the current data in the inner loop

num_epochs = 10 # optimization steps per batch of data collected

clip_epsilon = (

0.2 # clip value for PPO loss: see the equation in the intro for more context.

)

gamma = 0.99

lmbda = 0.95

entropy_eps = 1e-3

env_name = "LunarLander-v3"

single_env = TransformedEnv(

GymEnv("LunarLander-v3", device=device, render_mode="rgb_array"),

Compose(

# normalize observations

ObservationNorm(in_keys=["observation"]),

DoubleToFloat(),

StepCounter(),

),

)

parallel_env = TransformedEnv(

ParallelEnv(p_env_count, EnvCreator(lambda: GymEnv(env_name, device=device))),

Compose(

# normalize observations

ObservationNorm(in_keys=["observation"]),

DoubleToFloat(),

StepCounter(),

),

)

single_env.transform[0].init_stats(num_iter=10000, reduce_dim=0, cat_dim=0)

parallel_env.transform[0].loc = single_env.transform[0].loc.repeat(p_env_count, 1)

parallel_env.transform[0].scale = single_env.transform[0].scale.repeat(p_env_count, 1)

check_env_specs(single_env)

check_env_specs(parallel_env)

actor_net = nn.Sequential(

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(single_env.action_spec.space.n, device=device)

)

policy_module = TensorDictModule(actor_net, in_keys=["observation"], out_keys=["logits"])

policy_module = ProbabilisticActor(

module=policy_module,

spec=single_env.action_spec,

in_keys=["logits"],

distribution_class=OneHotCategorical,

return_log_prob=True # Return log probability of sampled actions (required for PPO loss)

)

value_net = nn.Sequential(

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(num_cells, device=device),

nn.Tanh(),

nn.LazyLinear(1, device=device),

)

value_module = ValueOperator(

module=value_net,

in_keys=["observation"],

)

policy_module(single_env.reset())

value_module(single_env.reset())

collector = SyncDataCollector(

single_env,

policy_module,

frames_per_batch=frames_per_batch,

total_frames=total_frames,

split_trajs=False,

device=device,

)

replay_buffer = ReplayBuffer(

storage=LazyTensorStorage(max_size=frames_per_batch),

sampler=SamplerWithoutReplacement(),

)

advantage_module = GAE(gamma=gamma, lmbda=lmbda, value_network=value_module, average_gae=True, device=device)

loss_module = ClipPPOLoss(

actor_network=policy_module,

critic_network=value_module,

clip_epsilon=clip_epsilon,

entropy_bonus=bool(entropy_eps),

entropy_coeff=entropy_eps,

# these keys match by default but we set this for completeness

critic_coeff=0.5,

loss_critic_type="smooth_l1"

)

optim = torch.optim.Adam(loss_module.parameters(), lr)

timenow = str(datetime.now())[0:-7]

timenow = '_' + timenow.replace(' ', '_').replace(':','')

writepath = environ['HOME'] + '/ml_runs/trl_ppo_1M' + timenow

writer = SummaryWriter(log_dir=writepath)

total_steps = 0

mean_rewards = []

eval_reward_sums = []

desc_bar = tqdm(total=0, position=0, bar_format='{desc}')

pbar = tqdm(total=total_frames)

eval_str = ""

# We iterate over the collector until it reaches the total number of frames it was

# designed to collect:

for i, tensordict_data in enumerate(collector):

# we now have a batch of data to work with. Let's learn something from it.

for _ in range(num_epochs):

# We'll need an "advantage" signal to make PPO work.

# We re-compute it at each epoch as its value depends on the value

# network which is updated in the inner loop.

advantage_module(tensordict_data)

data_view = tensordict_data.reshape(-1)

replay_buffer.extend(data_view.cpu())

for _ in range(frames_per_batch // sub_batch_size):

subdata = replay_buffer.sample(sub_batch_size)

loss_vals = loss_module(subdata.to(device))

loss_value = (loss_vals["loss_objective"] + loss_vals["loss_critic"] + loss_vals["loss_entropy"])

# Optimization: backward, grad clipping and optimization step

loss_value.backward()

# this is not strictly mandatory but it's good practice to keep

# your gradient norm bounded

torch.nn.utils.clip_grad_norm_(loss_module.parameters(), max_grad_norm)

optim.step()

optim.zero_grad()

total_steps += tensordict_data.numel()

mean_rewards.append(tensordict_data["next", "reward"].mean().item())

ep_steps_max = tensordict_data["next","step_count"].max().item()

current_lr = optim.param_groups[0]["lr"]

writer.add_scalar('train/loss/objective', loss_vals["loss_objective"], global_step=total_steps)

writer.add_scalar('train/loss/critic', loss_vals["loss_critic"], global_step=total_steps)

writer.add_scalar('train/loss/entropy', loss_vals["loss_entropy"], global_step=total_steps)

writer.add_scalar('train/lr', current_lr, global_step=total_steps)

writer.add_scalar('train/episode_steps_max', ep_steps_max, global_step=total_steps)

writer.add_scalar('train/episode_steps_min', tensordict_data["step_count"].min().item(), global_step=total_steps)

# writer.add_scalar('episode_steps_avg', tensordict_data["step_count"].mean().item(), global_step=total_steps)

cum_reward_str = f" avg reward: {mean_rewards[-1]:4.2f} (init: {mean_rewards[0]:4.2f})"

stepcount_str = f"eps steps (max): {ep_steps_max}"

lr_str = f"lr policy: {current_lr: 4.4f}"

if i % 10 == 0:

# We evaluate the policy once every 10 batches of data.

# Evaluation is rather simple: execute the policy without exploration

# (take the expected value of the action distribution) for a given

# number of steps (1000, which is our ``env`` horizon).

# The ``rollout`` method of the ``env`` can take a policy as argument:

# it will then execute this policy at each step.

with set_exploration_type(ExplorationType.DETERMINISTIC), torch.no_grad():

# execute a rollout with the trained policy

eval_rollout = parallel_env.rollout(1000, policy_module)

eval_sums = eval_rollout["next", "reward"].sum(dim=1)

eval_sums_avg = eval_sums.mean().item()

eval_sums_std = eval_sums.std().item()

eval_sums_min = eval_sums.min().item()

eval_sums_max = eval_sums.max().item()

writer.add_scalar("eval/reward_avg", eval_sums_avg, global_step=total_steps)

writer.add_scalar("eval/reward_std", eval_sums_std, global_step=total_steps)

writer.add_scalar("eval/reward_min", eval_sums_min, global_step=total_steps)

writer.add_scalar("eval/reward_max", eval_sums_max, global_step=total_steps)

# writer.add_scalar("eval/steps_max", eval_steps_max, global_step=total_steps)

eval_str = f"eval avg: {eval_sums_avg: .1f} "

del eval_rollout

pbar.update(tensordict_data.numel())

desc_bar.set_description(",".join([eval_str, cum_reward_str, stepcount_str, lr_str]))

del single_env