Hello. I am working through the TorchVision Object Detection Finetuning Tutorial (PyTorch Tutorials 2.2.0+cu121 documentation). I trained the model and made predictions with the Python file below:

    from PIL import Image
    import cv2
    import numpy as np
    import torch
    from torchvision import transforms

    loader = transforms.Compose([transforms.ToTensor()])

    def img_loader(img_path):
        # Load the image as an RGB float tensor on the GPU
        img = Image.open(img_path).convert('RGB')
        return loader(img).float().cuda()

    path = r't4.png'
    model = torch.load('network')
    model.eval()

    img_arr = img_loader(path)
    with torch.no_grad():
        pred = model([img_arr])

    iter_num = len(pred[0]['boxes'])
    img1 = cv2.imread(path)

    # where I draw bboxes
    for i in range(iter_num):
        # cv2.rectangle expects plain integer pixel coordinates
        x1, y1, x2, y2 = pred[0]['boxes'][i].int().tolist()
        img1 = cv2.rectangle(img1, (x1, y1), (x2, y2), (0, 0, 255), 2)

    output = Image.fromarray(pred[0]['masks'][0, 0].mul(255).byte().cpu().numpy())
    print(output)
    cv2_output = np.array(output)
    cv2_output = cv2_output[:, :-1].copy()

    cv2.imshow('out', img1)
    cv2.imwrite('todiscuss.png', img1)
    cv2.waitKey(0)
    print('Done')

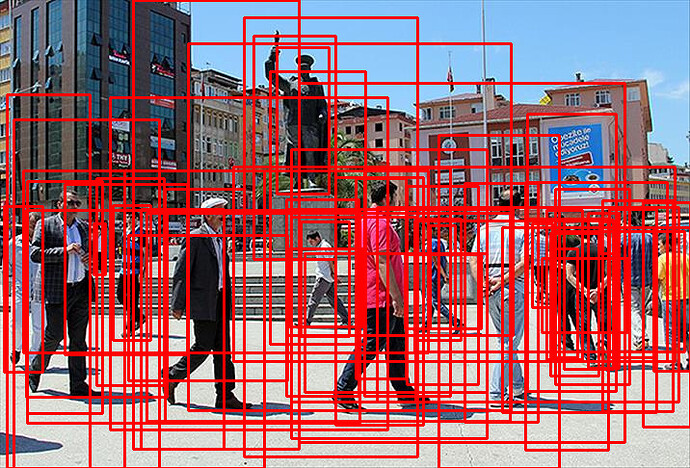

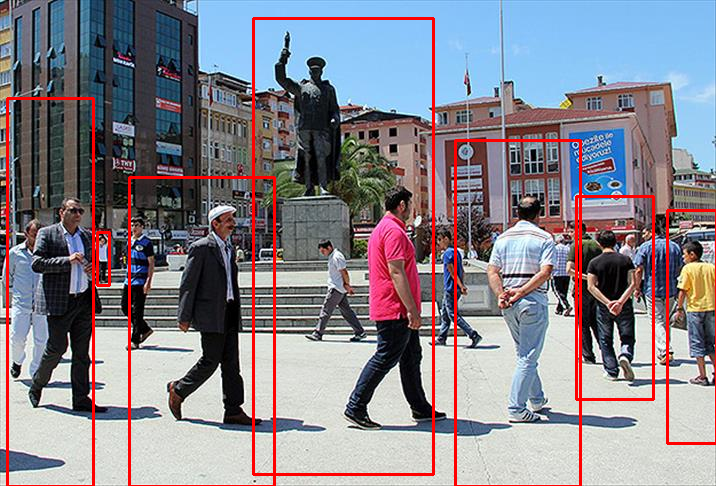

When I draw the bounding boxes, I get this result:

As can be seen in the image, pred = model(img) returns a lot of bounding boxes. Is there any way to set a threshold for the returned bounding boxes?
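
For reference, here is a minimal sketch of one way this kind of thresholding could be done. It assumes the prediction follows the usual torchvision detection output format, where each element of the returned list is a dict holding a `scores` tensor alongside `boxes`, `labels` and `masks`. The helper name `filter_by_score`, the threshold of 0.5, and the dummy prediction are all made up for illustration; they are not from the tutorial.

```python
import torch

def filter_by_score(prediction, score_threshold=0.5):
    """Keep only detections whose confidence score is at least score_threshold.

    `prediction` is assumed to be one element of the model output, i.e. a dict
    of tensors that all share the same first dimension (one row per detection).
    """
    keep = prediction["scores"] >= score_threshold
    return {key: value[keep] for key, value in prediction.items()}

# Dummy prediction laid out like pred[0] (illustrative values only)
dummy = {
    "boxes": torch.tensor([[10.0, 20.0, 50.0, 80.0],
                           [15.0, 25.0, 55.0, 85.0],
                           [100.0, 120.0, 150.0, 180.0]]),
    "labels": torch.tensor([1, 1, 1]),
    "scores": torch.tensor([0.95, 0.30, 0.80]),
}

filtered = filter_by_score(dummy, score_threshold=0.5)
print(filtered["boxes"].shape[0])  # number of boxes that survive the threshold
```

In the script above, this would be applied as `filtered = filter_by_score(pred[0], 0.5)` before the drawing loop, which would then iterate over `filtered['boxes']`. The right threshold depends on the data and likely needs some tuning. If many of the remaining boxes still overlap heavily, `torchvision.ops.nms(boxes, scores, iou_threshold)` may be worth trying as a second filtering step.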