I built the PyTorch repo locally (latest version, dated 08/12/2025) to understand how the upsample_trilinear3d kernel works. However, when I add print statements to the kernel to check my own calculation against the kernel's calculation, the precision of the final output changes for the float32 data type.

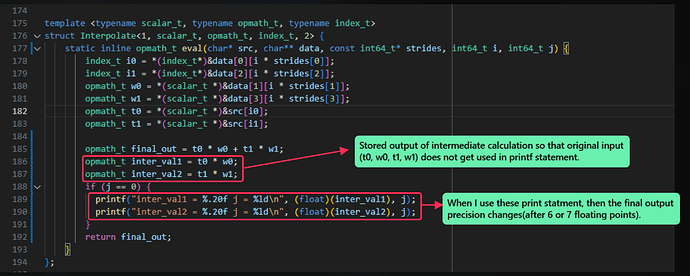

This can be seen in the image above.

File path: pytorch/aten/src/ATen/native/cpu/UpSampleKernel.cpp (main branch of pytorch/pytorch on GitHub)

Below are the steps that I used to build the PyTorch repo:

- Environment setup:

source ~/pytorch_env/bin/activate

- Set environment flags:

export USE_CUDA=0

export USE_MKLDNN=0

export USE_DISTRIBUTED=0

export BUILD_TEST=0

export MAX_JOBS=$(nproc)

export CFLAGS="-O0 -g -frounding-math -fno-fast-math"

export CXXFLAGS="-O0 -g -frounding-math -fno-fast-math"

PYTHON_TORCH_DIR=$(python -c 'import torch, os; print(os.path.dirname(torch.__file__))')

INCDIR="${PYTHON_TORCH_DIR}/include"

APIDIR="${PYTHON_TORCH_DIR}/include/torch/csrc/api/include"

LIBDIR="${PYTHON_TORCH_DIR}/lib"

- Build command:

python setup.py develop

- Added the test file below (test_upsample.cpp):

#include <torch/torch.h>

#include <ATen/ATen.h>

#include <cstdio>

#include <iostream>

#include <random>

int main() {

at::Tensor x = at::arange(12, at::TensorOptions().dtype(at::kFloat)).reshape({1,1,4,1,3});

float* input_ptr = x.data_ptr<float>();

int64_t input_ele = 12;

int64_t output_ele = 13 * 16 * 14;

std::cout.precision(20);

std::default_random_engine gen(42);

std::normal_distribution<float> fp_dis(0, 1);

for (int i = 0; i < input_ele; i++) {

input_ptr[i] = fp_dis(gen);

}

// Test Upsample_trilinear_3d

auto out = at::upsample_trilinear3d(

x,

at::IntArrayRef({13, 16, 14}), // <-- explicit

true // align_corners

);

float* output_ptr = out.data_ptr<float>();

printf("Output Tensor\n");

for (int i = 0; i < output_ele; i++) {

printf("%.20f, ", output_ptr[i]);

}

printf("\n");

return 0;

}

- Compile command for the test:

g++ -std=c++17 -O0 -g -frounding-math -fno-fast-math test_upsample.cpp -I"${INCDIR}" -I"${APIDIR}" -L"${LIBDIR}" -Wl,-rpath,"${LIBDIR}" -ltorch -ltorch_cpu -lc10 -o test_upsample -pthread

Questions:

- Are MAC/FMA instructions used when the code runs on the CPU? (I am asking because a multiply-accumulate (MAC) is performed in the calculation, as can be seen in the image above.)

- How can adding a printf statement change the precision of the result?

- Can the environment flags change the precision?

If anyone has faced this issue before, please reply and share how you resolved it.