ash_ds

May 10, 2023, 8:01am

1

I tried to install PyTorch with CUDA support, but after checking

torch.cuda.is_available()

- False

I then tried this

python -m torch.utils.collect_env

which returned

I would like to know how to resolve the issue.

Thanks in advance.

Have you actually installed the CUDA package? It seems only cuDNN is installed…

You don’t need to install a full CUDA toolkit, as PyTorch ships with its own CUDA dependencies. The locally installed CUDA toolkit will only be used if you build PyTorch from source or build a custom extension. Could you post the full output of python -m torch.utils.collect_env and pip list | grep torch instead of cropped screenshots?
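As a quick check of which CUDA runtime (if any) the installed binaries ship with, something like the sketch below might help. The helper name describe_torch_build is made up for illustration; torch.version.cuda and torch.cuda.is_available() are the actual PyTorch attributes it reads, and the function simply returns None if torch cannot be imported.

```python
import importlib.util


def describe_torch_build():
    """Summarize the installed PyTorch build, or return None if torch is missing.

    Hypothetical helper for illustration only.
    """
    if importlib.util.find_spec("torch") is None:
        return None

    import torch

    return {
        "torch_version": torch.__version__,
        # CUDA version the binaries were built with; None on CPU-only builds.
        "bundled_cuda": torch.version.cuda,
        # True only if a CUDA build can actually reach a GPU through the driver.
        "cuda_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    print(describe_torch_build())
```

If bundled_cuda comes back as None, the installed binaries are CPU-only, and no locally installed CUDA toolkit will change what torch.cuda.is_available() reports.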

ash_ds

May 10, 2023, 5:38pm

6

I am quite new to Arch, so it would be helpful if you could show the steps required to install it correctly.

ash_ds

May 10, 2023, 5:40pm

7

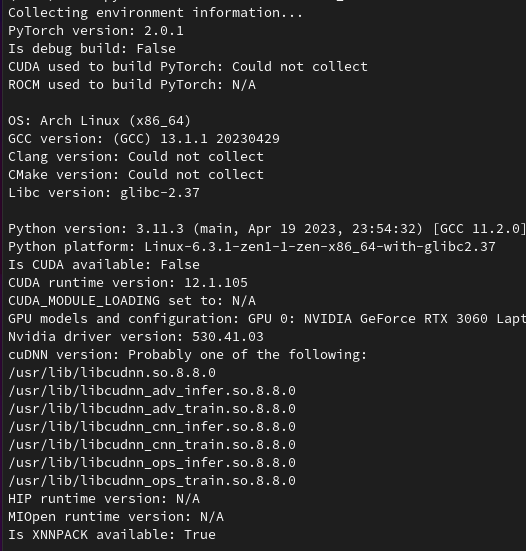

Collecting environment information...

PyTorch version: 2.0.1

Is debug build: False

CUDA used to build PyTorch: Could not collect

ROCM used to build PyTorch: N/A

OS: Arch Linux (x86_64)

GCC version: (GCC) 13.1.1 20230429

Clang version: Could not collect

CMake version: Could not collect

Libc version: glibc-2.37

Python version: 3.11.3 (main, Apr 19 2023, 23:54:32) [GCC 11.2.0] (64-bit runtime)

Python platform: Linux-6.3.1-zen1-1-zen-x86_64-with-glibc2.37

Is CUDA available: False

CUDA runtime version: 12.1.105

CUDA_MODULE_LOADING set to: N/A

GPU models and configuration: GPU 0: NVIDIA GeForce RTX 3060 Laptop GPU

Nvidia driver version: 530.41.03

cuDNN version: Probably one of the following:

/usr/lib/libcudnn.so.8.8.0

/usr/lib/libcudnn_adv_infer.so.8.8.0

/usr/lib/libcudnn_adv_train.so.8.8.0

/usr/lib/libcudnn_cnn_infer.so.8.8.0

/usr/lib/libcudnn_cnn_train.so.8.8.0

/usr/lib/libcudnn_ops_infer.so.8.8.0

/usr/lib/libcudnn_ops_train.so.8.8.0

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 48 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 16

On-line CPU(s) list: 0-15

Vendor ID: AuthenticAMD

Model name: AMD Ryzen 7 5800H with Radeon Graphics

CPU family: 25

Model: 80

Thread(s) per core: 2

Core(s) per socket: 8

Socket(s): 1

Stepping: 0

Frequency boost: enabled

CPU(s) scaling MHz: 71%

CPU max MHz: 4462.5000

CPU min MHz: 1200.0000

BogoMIPS: 6388.32

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl nonstop_tsc cpuid extd_apicid aperfmperf rapl pni pclmulqdq monitor ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand lahf_lm cmp_legacy svm extapic cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw ibs skinit wdt tce topoext perfctr_core perfctr_nb bpext perfctr_llc mwaitx cpb cat_l3 cdp_l3 hw_pstate ssbd mba ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 erms invpcid cqm rdt_a rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 xsaves cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local clzero irperf xsaveerptr rdpru wbnoinvd cppc arat npt lbrv svm_lock nrip_save tsc_scale vmcb_clean flushbyasid decodeassists pausefilter pfthreshold avic v_vmsave_vmload vgif v_spec_ctrl umip pku ospke vaes vpclmulqdq rdpid overflow_recov succor smca fsrm

Virtualization: AMD-V

L1d cache: 256 KiB (8 instances)

L1i cache: 256 KiB (8 instances)

L2 cache: 4 MiB (8 instances)

L3 cache: 16 MiB (1 instance)

NUMA node(s): 1

NUMA node0 CPU(s): 0-15

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines, IBPB conditional, IBRS_FW, STIBP always-on, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] numpy==1.24.3

[pip3] torch==2.0.1

[pip3] torchaudio==2.0.2

[pip3] torchvision==0.15.2

[conda] blas 1.0 mkl

[conda] mkl 2021.4.0 h06a4308_640

[conda] mkl-service 2.4.0 py311h5eee18b_0

[conda] mkl_fft 1.3.1 py311h30b3d60_0

[conda] mkl_random 1.2.2 py311hba01205_0

[conda] numpy 1.24.3 py311hc206e33_0

[conda] numpy-base 1.24.3 py311hfd5febd_0

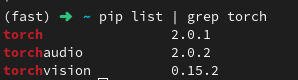

[conda] pytorch 2.0.1 py3.11_cpu_0 pytorch

[conda] pytorch-cuda 11.7 h778d358_5 pytorch

[conda] pytorch-mutex 1.0 cpu fastchan

[conda] torchaudio 2.0.2 py311_cpu pytorch

[conda] torchvision 0.15.2 py311_cpu pytorch

This is the output of python -m torch.utils.collect_env.

Thanks for the update. Based on:

[conda] pytorch 2.0.1 py3.11_cpu_0

You have installed the CPU-only packages and would need to install the ones coming with the CUDA dependencies.
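To spot this in a longer listing, here is a rough sketch that scans the [conda] lines of the collect_env output for CPU builds. The function name find_cpu_only_packages is hypothetical, and treating a "cpu" token in the build string as the marker is only a heuristic based on the build strings shown above (py3.11_cpu_0, py311_cpu, cpu), not an official rule.

```python
def find_cpu_only_packages(collect_env_text):
    """Return names of conda packages whose build string contains a 'cpu' token.

    Hypothetical helper; assumes lines shaped like
    "[conda] <name> <version> <build> [channel]".
    """
    flagged = []
    for line in collect_env_text.splitlines():
        parts = line.split()
        if len(parts) < 4 or parts[0] != "[conda]":
            continue  # not a conda package line
        name, build = parts[1], parts[3]
        # Heuristic: CPU builds in this thread carry "cpu" as an underscore-separated token.
        if "cpu" in build.lower().split("_"):
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    sample = "\n".join([
        "[conda] pytorch 2.0.1 py3.11_cpu_0 pytorch",
        "[conda] pytorch-cuda 11.7 h778d358_5 pytorch",
        "[conda] torchvision 0.15.2 py311_cpu pytorch",
    ])
    print(find_cpu_only_packages(sample))  # ['pytorch', 'torchvision']
```

Any package flagged this way would need to be replaced by its CUDA-enabled build. The install selector on pytorch.org generates the matching command; for this release it should look roughly like conda install pytorch torchvision torchaudio pytorch-cuda=11.8 -c pytorch -c nvidia, ideally run in a fresh environment.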

ash_ds

May 11, 2023, 7:54am

9

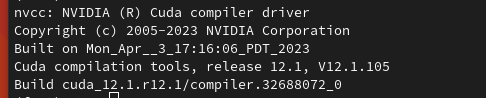

I have a doubt, though: my CUDA version is 12.1 and the ones offered with PyTorch are 11.8 or 11.7. Would that cause an error?

No, there won’t be an error. As described in my previous post:

You don’t need to install a full CUDA toolkit as PyTorch ships with its own CUDA dependencies. The locally installed CUDA toolkit will be used if you build PyTorch from source or a custom extension.

ash_ds

May 11, 2023, 8:08am

11

Thanks a lot. I created a new environment and installed it as you mentioned, and it works now.
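For anyone landing here later, a minimal smoke test along these lines can confirm that the new environment really runs work on the GPU rather than only reporting it as available. The function name cuda_smoke_test is made up for illustration, and it returns a plain message instead of raising when torch or CUDA is missing.

```python
import importlib.util


def cuda_smoke_test():
    """Run a tiny tensor operation on the GPU and return a status message.

    Hypothetical helper for illustration only.
    """
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed"

    import torch

    if not torch.cuda.is_available():
        return "CUDA is not available (CPU-only build or driver problem)"

    # Allocate on the first GPU and do a trivial computation there.
    x = torch.ones(2, 2, device="cuda")
    total = (x + x).sum().item()
    return f"ok on {torch.cuda.get_device_name(0)}: sum={total}"


if __name__ == "__main__":
    print(cuda_smoke_test())
```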