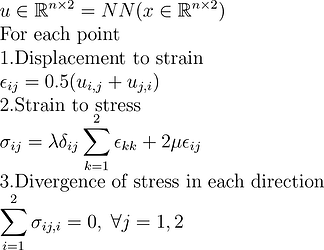

Suppose I have a displacement matrix u of shape (n, 2) containing n point-wise displacements, computed from a coordinate matrix X of the same shape.

I’m trying to solve the linear elastic problem, with the equilibrium governing equations given as follows:
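For reference, these are presumably the standard small-strain, isotropic relations. The strain and stress definitions match what `u_to_e` and `e_to_sigma` compute further down; the body force $f$ is an assumption and may well be zero in this problem:

$$
\nabla \cdot \sigma + f = 0, \qquad
\sigma = \lambda\,\mathrm{tr}(\varepsilon)\,I + 2\mu\,\varepsilon, \qquad
\varepsilon = \tfrac{1}{2}\left(\nabla u + \nabla u^{\mathsf T}\right)
$$

In components, the first equation reads $\sum_j \partial \sigma_{ij} / \partial x_j + f_i = 0$ for each direction $i$.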

However, the PyTorch neural network gives very bad results. Even after checking my code several times, I still suspect I made a mistake when calculating the gradients with torch.autograd.grad. Could anyone please give me some suggestions?

- For displacement to strain, I have two functions:

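import torch

def calculate_Jacobians(u, X):
    # u and X have shape (n, d); the result has shape (n, d, d)
    # with J[k, i, j] = d u_i / d x_j at point k
    J = []
    _, d = u.shape
    for i in range(d):
        u_i = u[:, i]
        grad = torch.autograd.grad(u_i, X, grad_outputs=torch.ones_like(u_i).to(u.device), create_graph=True)[0]
        #print('grad:',grad)
        J.append(grad)
    return torch.stack(J, dim=1)

def u_to_e(u, X):
    # Small-strain tensor: symmetric part of the displacement gradient
    Jacobian = calculate_Jacobians(u, X)
    strain = 0.5 * (Jacobian + torch.transpose(Jacobian, -1, -2))
    return strain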

The Jacobian is computed in batch form and has shape (n, 2, 2), so the strain is also a batched tensor of shape (n, 2, 2).
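A quick way to confirm the Jacobian step is to feed it a displacement field whose derivatives are known in closed form. The sketch below is only an illustration: the test field `u_x = x^2 * y`, `u_y = x + y^3` is hypothetical and not part of the original problem, and `calculate_Jacobians` is restated compactly so the snippet runs on its own. Note that passing `grad_outputs=torch.ones_like(...)` differentiates the sum over all points, which gives per-point derivatives only when row k of `u` depends on row k of `X` alone (for example, no batch normalization in the network).

```python
import torch

def calculate_Jacobians(u, X):
    # J[k, i, j] = d u_i / d x_j at point k
    rows = [
        torch.autograd.grad(u[:, i], X, grad_outputs=torch.ones_like(u[:, i]),
                            create_graph=True)[0]
        for i in range(u.shape[1])
    ]
    return torch.stack(rows, dim=1)

torch.manual_seed(0)
X = torch.rand(5, 2, dtype=torch.float64, requires_grad=True)
x, y = X[:, 0], X[:, 1]

# Hypothetical test field: u_x = x^2 * y, u_y = x + y^3
u = torch.stack([x**2 * y, x + y**3], dim=1)

J = calculate_Jacobians(u, X)

# Analytic Jacobian: [[2xy, x^2], [1, 3y^2]]
J_exact = torch.stack([
    torch.stack([2 * x * y, x**2], dim=1),
    torch.stack([torch.ones_like(x), 3 * y**2], dim=1),
], dim=1)

strain = 0.5 * (J + J.transpose(-1, -2))
jacobian_ok = torch.allclose(J.detach(), J_exact.detach())
print(jacobian_ok)
```

If this check passes, the displacement-to-strain step is probably not the source of the bad results.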

- For strain to stress, I have three functions:

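def batch_eye(x):
    # x has shape (n, d, d); returns n stacked d x d identity matrices
    n, d = x.shape[:-1]
    return torch.eye(d, d, dtype=x.dtype, device=x.device).unsqueeze(0).repeat(n, 1, 1)

def batch_trace(x):
    # Trace of each matrix in the batch, returned with shape (n, 1, 1) for broadcasting
    return x.diagonal(offset=0, dim1=-1, dim2=-2).sum(-1, keepdim=True).unsqueeze(-1)

def e_to_sigma(strain, lamb, mu):
    # Isotropic Hooke's law: sigma = lamb * tr(strain) * I + 2 * mu * strain
    return lamb * batch_eye(strain) * batch_trace(strain) + 2 * mu * strain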

Therefore, the stress is also a batched tensor of shape (n, 2, 2).
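The constitutive step can be checked by hand on a single strain tensor. The sketch below uses a hypothetical variant, `e_to_sigma_broadcast`, that relies on broadcasting a single identity matrix instead of repeating it n times; it is meant as an equivalent formulation for comparison, not a replacement for the functions above. The numeric values of `lamb` and `mu` are arbitrary.

```python
import torch

def e_to_sigma_broadcast(strain, lamb, mu):
    # sigma = lamb * tr(strain) * I + 2 * mu * strain, broadcast over the batch
    d = strain.shape[-1]
    eye = torch.eye(d, dtype=strain.dtype, device=strain.device)         # (d, d)
    trace = strain.diagonal(dim1=-2, dim2=-1).sum(-1)[..., None, None]   # (n, 1, 1)
    return lamb * trace * eye + 2 * mu * strain

# One point with tr(strain) = 1 + 3 = 4
strain = torch.tensor([[[1.0, 2.0], [2.0, 3.0]]])
sigma = e_to_sigma_broadcast(strain, lamb=2.0, mu=0.5)

# By hand: 2 * 4 * I + 2 * 0.5 * strain = 8 * I + strain
expected = torch.tensor([[[9.0, 2.0], [2.0, 11.0]]])
stress_ok = torch.allclose(sigma, expected)
print(stress_ok)
```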

- For the stress divergence:

After obtaining the stress, I have to calculate its divergence with respect to all coordinate directions.

I have one function for this:

def point_wise_divergence(stress, X):
    _, d = X.shape
    div = []
    for i in range(d):
        sigma_col = stress[:, :, i]
        grad = torch.autograd.grad(sigma_col, X, grad_outputs=torch.ones_like(sigma_col), create_graph=True)[0]
        div.append(grad)
    Div_comp = torch.stack(div, dim=1)
    Div = Div_comp.sum(-1)
    return Div

For the displacement gradient, it’s easy to find an example problem in a textbook to verify my code, but I’m not sure I implemented the stress divergence correctly.
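The divergence can be verified the same way as the displacement gradient: build a stress field with a known divergence and compare. As far as I can tell, `point_wise_divergence` as written differentiates the sum of a whole stress column and then adds up every partial derivative, which gives $\sum_j \sum_k \partial\sigma_{ji}/\partial x_k$ and so includes cross terms such as $\partial\sigma_{xx}/\partial y$ that do not belong in $(\nabla\cdot\sigma)_i = \sum_j \partial\sigma_{ij}/\partial x_j$. The sketch below is one possible component-wise version; the function name `divergence_componentwise` and the polynomial test field are hypothetical, chosen only for illustration.

```python
import torch

def divergence_componentwise(stress, X):
    # (div sigma)_i = sum_j d sigma_ij / d x_j, evaluated per point
    n, d = X.shape
    rows = []
    for i in range(d):
        div_i = torch.zeros(n, dtype=X.dtype, device=X.device)
        for j in range(d):
            s_ij = stress[:, i, j]
            # g has shape (n, d) and holds d s_ij / d x_k for every k
            g = torch.autograd.grad(s_ij, X, grad_outputs=torch.ones_like(s_ij),
                                    create_graph=True)[0]
            div_i = div_i + g[:, j]  # keep only the k = j derivative
        rows.append(div_i)
    return torch.stack(rows, dim=1)  # shape (n, d)

torch.manual_seed(0)
X = torch.rand(6, 2, dtype=torch.float64, requires_grad=True)
x, y = X[:, 0], X[:, 1]

# Hypothetical symmetric stress field with a known divergence
s_xx, s_xy, s_yy = x**2, x * y, y**3
stress = torch.stack([
    torch.stack([s_xx, s_xy], dim=1),
    torch.stack([s_xy, s_yy], dim=1),
], dim=1)

div = divergence_componentwise(stress, X)

# div_x = d(x^2)/dx + d(xy)/dy = 3x
# div_y = d(xy)/dx + d(y^3)/dy = y + 3y^2
div_exact = torch.stack([3 * x, y + 3 * y**2], dim=1)
divergence_ok = torch.allclose(div.detach(), div_exact.detach())
print(divergence_ok)
```

Running the original `point_wise_divergence` on the same test field and comparing against `div_exact` should show whether the two implementations agree. The double loop costs d² backward passes per call, which is small for d = 2.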