Hello everyone,

I have the following issue with torch.nn.functional.interpolate in PyTorch (version 1.7.1, running on Windows):

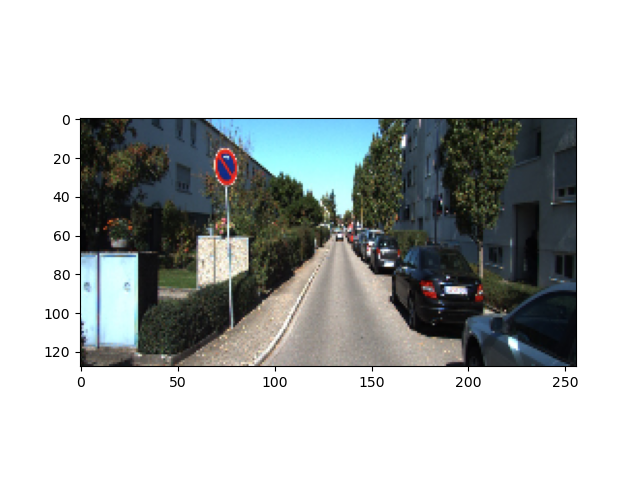

I want to downsample an image by a scale factor of 2.

The tensor of the original image has the shape: [1 x 3 x 128 x 256]

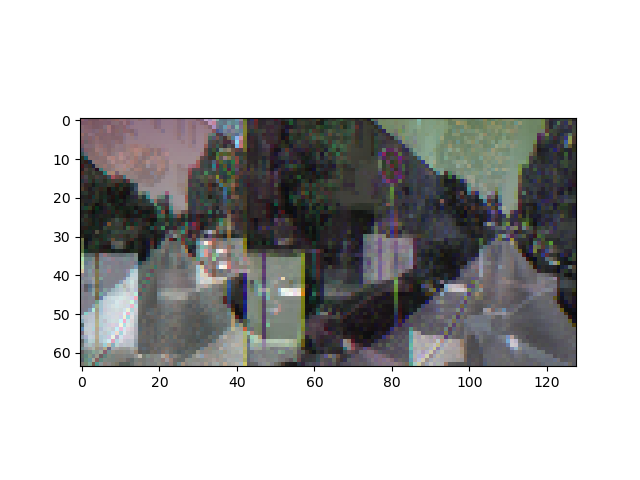

The result of the interpolation is the following:

The tensor of the downsampled image has the expected shape: [1 x 3 x 64 x 128]

But the result looks all “mixed” up. It seems as if it is trying to draw each of the channels individually in the image.

The code that does this is as follows (changing the align_corners parameter does not make much difference):

    def scaling_pyramid(self, img, num_scales):
        scaled_imgs = []
        s = img.size()
        h = s[2]
        w = s[3]
        for i in range(num_scales):
            ratio = 2 ** (i + 1)
            nh = h // ratio
            nw = w // ratio
            print(img[0, :, :, :])
            scaled_imgs.append(torch.nn.functional.interpolate(img, size=[nh, nw], mode="bilinear", align_corners=False))
            print(scaled_imgs[i][0, :, :, :])
            current_tensor = scaled_imgs[i][0, :, :, :]
            print(current_tensor.shape)
            show_scaling_image = current_tensor.resize_(current_tensor.shape[1], current_tensor.shape[2], current_tensor.shape[0]).cpu().numpy()
            print(show_scaling_image.shape)
            plot.imshow(show_scaling_image)
            plot.show()
        return scaled_imgs

Any hints / help will be greatly appreciated!
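
One possible explanation, offered as a minimal sketch rather than a confirmed diagnosis: the `resize_` call in the code above only gives the same memory a new shape, it does not move the channel axis to the end. If that is what is happening, the interpolation itself may be fine and only the conversion for plotting scrambles the pixels. The tiny 3 x 2 x 2 tensor below is a made-up stand-in for the image, with channel `c` filled with the value `c`, to compare `resize_` against `permute`:

```python
import torch

# Hypothetical tiny "image" in [C, H, W] layout: 3 channels, 2 x 2 pixels.
# Channel c is filled with the constant c so each value can be traced.
chw = torch.stack([torch.full((2, 2), float(c)) for c in range(3)])

# resize_ keeps the flat memory order and only changes the shape, so one
# "pixel" ends up holding consecutive values from a single channel.
reinterpreted = chw.clone().resize_(2, 2, 3)

# permute reorders the axes, so each pixel keeps one value per channel.
permuted = chw.permute(1, 2, 0)

print(reinterpreted[0, 0])  # three values taken from channel 0 only
print(permuted[0, 0])       # one value from each of the three channels
```

Under that assumption, replacing the `resize_(...)` call with `current_tensor.permute(1, 2, 0)` before `.cpu().numpy()` would produce the [H x W x C] layout that `imshow` expects.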