I have two models, an encoder and a decoder. I want to put both of them on cuda:0 and cuda:1 and run them in parallel across the two GPUs.

This is what I did:

self.device = torch.device("cuda")

self.models["encoder"] = networks.ResnetEncoder(
    self.opt.num_layers, self.opt.weights_init == "pretrained")

self.models["encoder"].to(self.device)

self.models['encoder'] = nn.DataParallel(self.models['encoder'])

self.parameters_to_train += list(self.models["encoder"].parameters())

self.models["depth"] = networks.DepthDecoder(
    self.models["encoder"].module.num_ch_enc, self.opt.scales)

self.models["depth"].to(self.device)

self.models["depth"] = nn.DataParallel(self.models["depth"])

self.parameters_to_train += list(self.models["depth"].parameters())

for key, ipt in inputs.items():
    inputs[key] = ipt.to(self.device)

features = self.models["encoder"](inputs["color_aug", 0, 0])

for fea in features:
    print('fea:', fea.device)

print('enoder:', self.models["encoder"].device_ids)

print('depth', self.models["depth"].device_ids)

outputs = self.models["depth"](features)

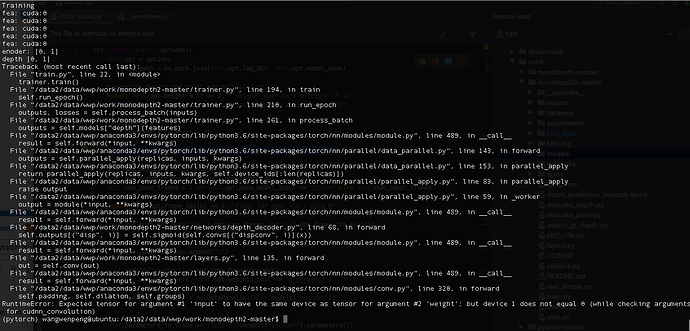

When I run it, I get an error like this:

It seems that the device of the input data does not match the device of the weights. I also noticed that the encoder and the depth decoder both exist on cards 0 and 1, while the data is only on cuda:0. So I would not expect an error, because the data should be able to match either model on cuda:0, shouldn't it?
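To make the setup easier to reproduce, here is a minimal sketch of the same wiring with two small stand-in modules. `TinyEncoder` and `TinyDecoder` are hypothetical placeholders that I made up for illustration; they are not the real `networks.ResnetEncoder` and `networks.DepthDecoder`, and the layer sizes are arbitrary. The sketch only shows the pattern I am assuming should work: both modules live on the default CUDA device, both are wrapped in `nn.DataParallel`, and the list of features returned by the encoder is passed straight to the decoder.

```python
import torch
import torch.nn as nn


class TinyEncoder(nn.Module):
    """Hypothetical stand-in for the encoder: returns a list of feature maps."""

    def __init__(self):
        super().__init__()
        self.num_ch_enc = [4, 8]  # channels of each returned feature map
        self.conv1 = nn.Conv2d(3, 4, 3, padding=1)
        self.conv2 = nn.Conv2d(4, 8, 3, stride=2, padding=1)

    def forward(self, x):
        f1 = torch.relu(self.conv1(x))
        f2 = torch.relu(self.conv2(f1))
        return [f1, f2]


class TinyDecoder(nn.Module):
    """Hypothetical stand-in for the depth decoder: consumes the feature list."""

    def __init__(self, num_ch_enc):
        super().__init__()
        self.head = nn.Conv2d(num_ch_enc[-1], 1, 3, padding=1)

    def forward(self, features):
        return torch.sigmoid(self.head(features[-1]))


# Falls back to the CPU when no GPU is present, so the sketch still runs.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Move each module to the device first, then wrap it.
encoder = nn.DataParallel(TinyEncoder().to(device))
decoder = nn.DataParallel(TinyDecoder(encoder.module.num_ch_enc).to(device))

images = torch.rand(4, 3, 16, 16, device=device)

# With several GPUs, the batch is split along dim 0, each replica runs on its
# own device, and the outputs are gathered back onto the first device.
features = encoder(images)
disp = decoder(features)

print("feature devices:", [f.device for f in features])
print("disp:", tuple(disp.shape), disp.device)
```

With these stand-ins, I would expect every feature and the final output to be reported on the same device, since `nn.DataParallel` gathers the results onto its output device.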

How can I fix it? Can anyone help me?

@ptrblck