Hi, I tried the demo proposed by goldsborough and hit a compile error. I hope someone can help me solve it. Thank you very much.

Environment:

gcc: 4.8.5

g++: 4.8.5

cuda: 9.0

system: Ubuntu 18.04

libtorch: https://download.pytorch.org/libtorch/nightly/cu90/libtorch-shared-with-deps-latest.zip

cmake: 3.13

Code: mnist_example.cpp

    #include <torch/torch.h>

    // Define a new Module.
    struct Net : torch::nn::Module {
      Net() {
        // Construct and register two Linear submodules.
        fc1 = register_module("fc1", torch::nn::Linear(8, 64));
        fc2 = register_module("fc2", torch::nn::Linear(64, 1));
      }

      // Implement the Net's algorithm.
      torch::Tensor forward(torch::Tensor x) {
        // Use one of many tensor manipulation functions.
        x = torch::relu(fc1->forward(x));
        // bug fixed 1
        x = torch::dropout(x, 0.5, true);
        x = torch::sigmoid(fc2->forward(x));
        return x;
      }

      // Use one of many "standard library" modules.
      torch::nn::Linear fc1{nullptr}, fc2{nullptr};
    };

    // Create a new Net.
    Net net;

    // Create a multi-threaded data loader for the MNIST dataset.
    auto data_loader =
      torch::data::data_loader(torch::data::datasets::MNIST("./data"));

    // Instantiate an SGD optimization algorithm to update our Net's parameters.
    torch::optim::SGD optimizer(net.parameters(), 0.1);

    int main() {
      for (size_t epoch = 1; epoch <= 20; ++epoch) {
        size_t batch_index = 0;
        // Iterate the data loader to yield batches from the dataset.
        for (auto batch : data_loader) {
          // Reset gradients.
          optimizer.zero_grad();
          // Execute the model on the input data.
          auto prediction = model.forward(batch.data);
          // Compute a loss value to judge the prediction of our model.
          auto loss = torch::binary_cross_entropy(prediction, batch.label);
          // Compute gradients of the loss w.r.t. the parameters of our model.
          loss.backward();
          // Update the parameters based on the calculated gradients.
          optimizer.step();

          if (batch_index++ % 10 == 0) {
            std::cout << "Epoch: " << epoch << " | Batch: " << batch_index
                      << " | Loss: " << loss << std::endl;
            // Serialize your model periodically as a checkpoint.
            torch::save(net, "net.pt");
          }
        }
      }

CMakeLists.txt

    cmake_minimum_required(VERSION 3.0 FATAL_ERROR)
    project(mnist_example)

    find_package(Torch REQUIRED)

    option(DOWNLOAD_MNIST "Download the MNIST dataset from the internet" ON)
    if (DOWNLOAD_MNIST)
      message(STATUS "Downloading MNIST dataset")
      execute_process(
        COMMAND python ${CMAKE_CURRENT_LIST_DIR}/download_mnist.py
          -d ${CMAKE_BINARY_DIR}/data
        ERROR_VARIABLE DOWNLOAD_ERROR)
      if (DOWNLOAD_ERROR)
        message(FATAL_ERROR "Error downloading MNIST dataset: ${DOWNLOAD_ERROR}")
      endif()
    endif()

    add_executable(mnist_example mnist_example.cpp)
    target_compile_features(mnist_example PUBLIC cxx_range_for)
    target_link_libraries(mnist_example "${TORCH_LIBRARIES}")
    set_property(TARGET mnist_example PROPERTY CXX_STANDARD 11)

download_mnist.py

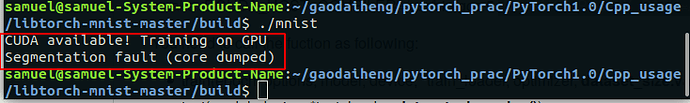

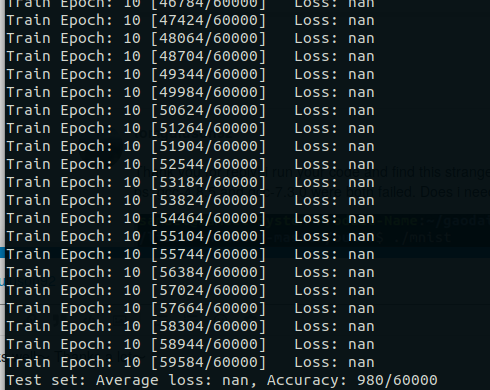

The error is:

-- Configuring done

-- Generating done

-- Build files have been written to: /home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/build

Scanning dependencies of target mnist_example

[ 50%] Building CXX object CMakeFiles/mnist_example.dir/mnist_mlp.cpp.o

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:30:3: error: ‘data_loader’ is not a member of ‘torch::data’

torch::data::data_loader(torch::data::datasets::MNIST("./data"));

^

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:30:3: note: suggested alternative:

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:29:6: note: ‘data_loader’

auto data_loader =

^

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp: In function ‘int main()’:

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:38:24: error: unable to deduce ‘auto&&’ from ‘data_loader’

for (auto batch : data_loader) {

^

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:42:26: error: ‘model’ was not declared in this scope

auto prediction = model.forward(batch.data);

^

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:57:2: error: expected ‘}’ at end of input

}

^

In file included from /home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/libtorch_cpu/include/torch/csrc/api/include/torch/all.h:8:0,

from /home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/libtorch_cpu/include/torch/csrc/api/include/torch/torch.h:3,

from /home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:1:

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/libtorch_cpu/include/torch/csrc/api/include/torch/serialize.h: In instantiation of ‘void torch::save(const Value&, SaveToArgs&& ...) [with Value = Net; SaveToArgs = {const char (&)[7]}]’:

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/mnist_mlp.cpp:54:35: required from here

/home/samuel/gaodaiheng/pytorch_prac/PyTorch1.0/Cpp_usage/end2end_example/libtorch_cpu/include/torch/csrc/api/include/torch/serialize.h:39:11: error: no match for ‘operator<<’ (operand types are ‘torch::serialize::OutputArchive’ and ‘const Net’)

archive << value;

...
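
My current guess about the last error (`no match for 'operator<<'` with operand types `torch::serialize::OutputArchive` and `const Net`) is that the insertion operator is only defined for modules held through a pointer-like handle such as `std::shared_ptr`, not for a plain `Net` value. I have not confirmed this against the libtorch headers. To make the idea concrete, here is a minimal sketch that does not use libtorch at all: `Module`, `OutputArchive`, `save`, and `save_via_shared_ptr` are hypothetical stand-ins I wrote for illustration, and only the `archive << value;` line is taken from the log.

```cpp
#include <cassert>
#include <memory>
#include <sstream>
#include <string>
#include <type_traits>

// Hypothetical stand-ins, NOT libtorch's real classes. They only model the
// overload shape that the error message seems to point at.
struct Module {
  virtual ~Module() = default;
  virtual std::string name() const { return "Module"; }
};

struct OutputArchive {
  std::ostringstream buffer;
};

// The only insertion operator in this sketch: it accepts a std::shared_ptr
// to a Module subclass, and nothing else.
template <typename ModuleType,
          typename = typename std::enable_if<
              std::is_base_of<Module, ModuleType>::value>::type>
OutputArchive& operator<<(OutputArchive& archive,
                          const std::shared_ptr<ModuleType>& module) {
  assert(module != nullptr);  // a null module cannot be written
  archive.buffer << module->name();
  return archive;
}

// Mirrors the `archive << value;` line quoted in the log.
template <typename Value>
std::string save(const Value& value) {
  OutputArchive archive;
  archive << value;
  return archive.buffer.str();
}

struct Net : Module {
  std::string name() const override { return "Net"; }
};

// Compiles: the argument is a std::shared_ptr<Net>, so the operator matches.
inline std::string save_via_shared_ptr() {
  auto net = std::make_shared<Net>();
  return save(net);
}

// Would NOT compile in this sketch, with the same kind of message as the log:
//   Net net;
//   save(net);  // no match for operator<< (OutputArchive and const Net)
```

If the real library behaves like this sketch, creating the network with `std::make_shared<Net>()` and calling its members through `->` might make the `torch::save` call compile, but that is an assumption on my side.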