I’m new to PyTorch and I’m wondering what the most efficient way is to load data (from a folder of .jpgs or by downsampling an mp4) and run batch inference with a trained Detectron2 model, so that data loading does not become the bottleneck.

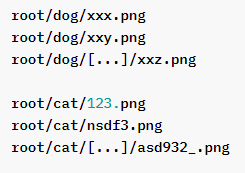

If your data is subdivided in folders like this:

You might want to look into ImageFolder for images or DatasetFolder for more generic data (both are in torchvision.datasets). There you can define which files you want to load, and every sample automatically gets a label from 0 to n-1, where n is the number of classes (one class per subfolder).

You can also follow this tutorial to see how to create your own custom Dataset class:

https://pytorch.org/tutorials/beginner/data_loading_tutorial.html#dataset-class
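
For an unlabeled folder of .jpgs (the inference case in the question), a custom Dataset could look roughly like the sketch below. `JpgFolderDataset`, `keep_as_list` and `make_inference_loader` are hypothetical names I made up for illustration, and it assumes the images may have different sizes, so each batch is kept as a list instead of being stacked. How each batch is then passed to the Detectron2 model is not shown here.

```python
from pathlib import Path

import numpy as np
import torch
from PIL import Image
from torch.utils.data import DataLoader, Dataset


class JpgFolderDataset(Dataset):
    """Hypothetical dataset: every .jpg directly inside `root`, no labels."""

    def __init__(self, root):
        self.paths = sorted(Path(root).glob("*.jpg"))

    def __len__(self):
        return len(self.paths)

    def __getitem__(self, index):
        path = self.paths[index]
        with Image.open(path) as img:
            array = np.array(img.convert("RGB"))  # H x W x 3, uint8
        image = torch.from_numpy(array).permute(2, 0, 1)  # 3 x H x W
        return image, str(path)


def keep_as_list(batch):
    # Images may differ in size, so return the list of samples unchanged
    # instead of letting the default collate try to stack them.
    return batch


def make_inference_loader(root, batch_size=4, num_workers=0):
    return DataLoader(
        JpgFolderDataset(root),
        batch_size=batch_size,
        shuffle=False,
        num_workers=num_workers,  # > 0 decodes images in parallel worker processes
        collate_fn=keep_as_list,
    )
```

Each batch is then a list of `(image, path)` pairs, so predictions can be matched back to the file they came from.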