Recently, I deccided to realize a dialogue system. But focus on the formula as followings, I’m confused how to realize it by pytorch.

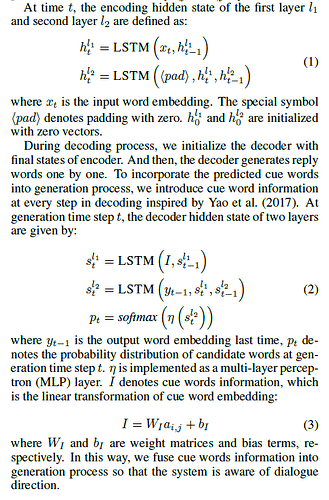

At time t, the encoding hidden state of the first layer l1

and second layer l2 are defined as:

$h_{t}^{l_1} = LSTM(x_t,h_{t-1}^{l_{1}})$

$h_{t}^{l_2} = LSTM(<pad>,h_{t}^{l_1},h_{t-1}^{l_{2}})$

where $x_t$ is the input word embedding. The special symbol<pad> denotes padding with zero. $h_{0}^{l_1} $ and $h_{0}^{l_2} $ are initialized with zero vectors.

so how to deal with it when LSTM need multi inputs? I think it needs LSTMCell because of the differences between layer 1 and layer 2. But LSTMCell’s input are input,h,c. How to realize it as the representation of formula (2) and (3) Or shoud I concatenate $y_{t-1}$ and $s_{t-1}^{l_2}$ as input?