Hi, I am trying to use a pretrained ResNet-18 and a GRU for automatic lipreading.

Here is my model:

    import torch.nn as nn
    from torchvision import models


    class CNN3D(nn.Module):  # classes_num
        def __init__(self, t_dim=29, img_x=88, img_y=88, drop_p=0.2, fc_hidden1=256, fc_hidden2=128, num_classes=5):
            super(CNN3D, self).__init__()
            resnet = models.resnet18(pretrained=True)
            # Swap in a 1-channel first conv for grayscale frames (this layer is newly initialized, not pretrained)
            resnet.conv1 = nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3, bias=False)
            modules = list(resnet.children())[:-1]  # delete the last fc layer
            self.resnet = nn.Sequential(*modules)
            self.gru = nn.GRU(input_size=512, hidden_size=1024, num_layers=3, batch_first=True, bidirectional=True, dropout=0.2)
            self.fc = nn.Linear(1024 * 2, num_classes)
            self.dropout = nn.Dropout(0.4)

        def forward(self, x):
            # print(x.shape)  # [70, 1, 29, 88, 88]
            x = x.transpose(1, 2)  # move the time axis next to the batch axis
            x = x.contiguous()
            x = x.view(-1, 1, x.size(3), x.size(4))  # fold frames into the batch
            # print(x.shape)  # [2030, 1, 88, 88]
            x = self.resnet(x)
            # print(x.shape)  # [2030, 512, 1, 1]
            x = x.view(-1, 29, 512)  # back to (batch, frames, features)
            # print(x.shape)  # [70, 29, 512]
            x, _ = self.gru(x)
            # print(x.shape)  # [70, 29, 2048]
            x = self.dropout(x).mean(1)  # average over the time axis
            # print(x.shape)  # [70, 2048]
            x = self.fc(x)
            # print(x.shape)  # [70, 2]
            return x
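In case it helps anyone double-check the shape comments above, here is a minimal pure-Python sketch that traces the tensor shapes through `forward` without needing PyTorch or the pretrained weights. The function name `trace_shapes` and its parameters are hypothetical, made up for illustration. It assumes ResNet-18 without its fc layer ends in global average pooling, giving 512 features per frame, and that the bidirectional GRU doubles the hidden size in its output.

```python
def trace_shapes(batch=70, frames=29, height=88, width=88,
                 feat_dim=512, hidden=1024, bidirectional=True, num_classes=5):
    """Return (stage, shape) pairs mirroring the forward pass of the model above."""
    steps = []

    shape = (batch, 1, frames, height, width)
    steps.append(("input", shape))

    # x.transpose(1, 2): swap the channel and time axes
    shape = (batch, frames, 1, height, width)
    steps.append(("transpose", shape))

    # x.view(-1, 1, H, W): fold the time axis into the batch axis
    shape = (batch * frames, 1, height, width)
    steps.append(("fold_frames", shape))

    # ResNet-18 minus fc: assumed to end in global average pooling
    shape = (batch * frames, feat_dim, 1, 1)
    steps.append(("resnet", shape))

    # x.view(-1, frames, feat_dim): restore (batch, time, features)
    shape = (batch, frames, feat_dim)
    steps.append(("unfold_frames", shape))

    # GRU with batch_first=True: output feature size is hidden * num_directions
    gru_out = hidden * (2 if bidirectional else 1)
    shape = (batch, frames, gru_out)
    steps.append(("gru", shape))

    # mean(1): average over the time axis
    shape = (batch, gru_out)
    steps.append(("mean_time", shape))

    # final linear layer
    shape = (batch, num_classes)
    steps.append(("fc", shape))
    return steps


for name, shape in trace_shapes():
    print(f"{name:>14}: {shape}")
```

With the default `num_classes=5` the last stage is `(70, 5)`; the `[70, 2]` in my comment corresponds to constructing the model with `num_classes=2`.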

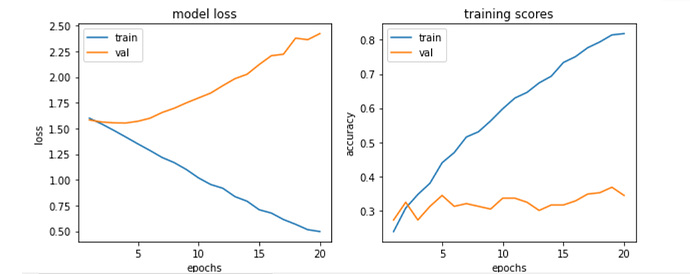

and the results i got were

can someone help me to determine what is causing this?