Hello,

I’ve been working on an RL setup to control a multi-joint robotic arm. It is simple: an n-joint robot and a target to reach.

I’ve tried several implementations, namely policy gradient (PG) and DQN, but so far they have all failed.

I’m having trouble figuring out the root of the problem because:

- The same implementation seems to work with Gym environments (CartPole especially)

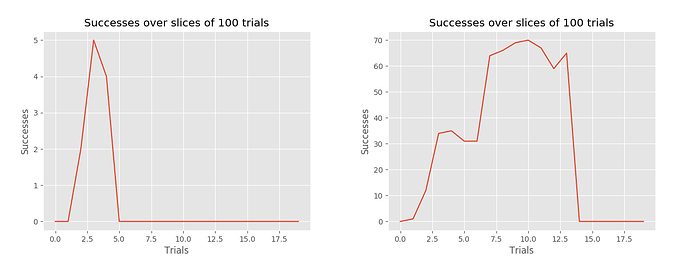

- The success-rate curve has a very strange shape, even after long training

- I have also tried various reward functions, some more guiding than others, but to no avail
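To make the "more guiding" distinction concrete, here is a minimal sketch of the two kinds of reward I mean. It is illustrative only and not the exact code from the repo: the function names, the `tolerance` value, and the assumption that positions are plain coordinate arrays are all hypothetical.

```python
import numpy as np


def sparse_reward(effector_pos, target_pos, tolerance=0.05):
    """Sparse signal: 1.0 only when the end effector is within `tolerance` of the target."""
    distance = np.linalg.norm(np.asarray(effector_pos) - np.asarray(target_pos))
    return 1.0 if distance < tolerance else 0.0


def shaped_reward(effector_pos, target_pos, tolerance=0.05):
    """Guiding signal: negative distance to the target, plus a bonus on reaching it."""
    distance = np.linalg.norm(np.asarray(effector_pos) - np.asarray(target_pos))
    bonus = 1.0 if distance < tolerance else 0.0
    return float(-distance + bonus)
```

The sparse version only tells the agent when it has succeeded, while the shaped version gives feedback on every step about how far the end effector is from the target.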

In the figures, the x-axis values should be multiplied by 100 to obtain the real values.

I’d be very grateful if somebody could help me figure out the problem. My repo, MoMe36/Old-RL on GitHub, has the relevant files and some explanations.

Thanks a lot!