After some more digging I think I found the problem.

What I didn’t know was this:

If any operation is applied to two tensors where at least one of them has requires_grad=True, the resulting tensor will also have requires_grad=True.
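A minimal sketch of that rule. The tensors `a` and `b` are made up for illustration and are not part of the model:

```python
import torch

a = torch.tensor([2.0], requires_grad=True)  # tracked by autograd
b = torch.tensor([3.0])                      # plain constant, not tracked

tracked = a * b      # one operand requires grad, so the result does too
untracked = b * b    # no operand requires grad, so the result does not

print(tracked.requires_grad)
print(untracked.requires_grad)
```

The second case is the one that matters for the rest of this post: a product of two untracked tensors is itself untracked.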

And this is what goes wrong here: it can happen that the returned tensor is a product of tensors that do not have requires_grad=True.

That’s the problem, right?

Here is my detailed explanation:

Here we set requires_grad=True, that’s fine.

Code A:

    ...
    from torch.nn import Parameter
    ...
    dtype = torch.DoubleTensor
    ...
    class RobotTrustModel(torch.nn.Module):
        def __init__(self):
            super(RobotTrustModel, self).__init__()
            self.pre_beta_1 = Parameter(dtype(4.0 * np.ones(1)), requires_grad=True)
            self.pre_beta_2 = Parameter(dtype(4.0 * np.ones(1)), requires_grad=True)
            self.pre_l_1 = Parameter(dtype(-10.0 * np.ones(1)), requires_grad=True)
            self.pre_u_1 = Parameter(dtype( 10.0 * np.ones(1)), requires_grad=True)
            self.pre_l_2 = Parameter(dtype(-10.0 * np.ones(1)), requires_grad=True)
            self.pre_u_2 = Parameter(dtype( 10.0 * np.ones(1)), requires_grad=True)
    ...

Coming from here:

    ...
    def closure():
        diff = model(bin_c, obs_probs_idxs) - obs_probs_vect
        loss = torch.mean(torch.pow(diff, 2.0))
        optimizer.zero_grad()
        loss.backward()
        return loss

    optimizer.step(closure)
    ...

the function “forward” is called, which contains, among other things, this:

Code B:

    def forward(self, bin_centers, obs_probs_idxs):
        ...
        for i in range(n_diffs): #loop over the number of the [row,col] indexes (max is nbins x nbins (625))
            # get the index for the row and column
            bin_center_idx_1 = obs_probs_idxs[i, 0] #get the ith cell row
            bin_center_idx_2 = obs_probs_idxs[i, 1] #get the ith cell col
            # pass l, u, beta & the bin to compute_trust for 1 & 2
            trust[i] = self.compute_trust(l_1, u_1, beta_1, bin_centers[bin_center_idx_1]) * self.compute_trust(l_2, u_2, beta_2, bin_centers[bin_center_idx_2])
        ...
        return trust

In order to get tensor trust[i] the function “compute_trust” has to be called twice in each iteration of the loop.

l_1, u_1 & beta_1 and their _2 counterparts all have requires_grad=True, because they are all results of operations on tensors (defined in Code A) that have requires_grad=True.

Function “compute_trust”:

Code C:

    def compute_trust(self, l, u, b, p):
        if b < -50:
            trust = 1.0 - 1.0 / (b * (u - l)) * torch.log( (1.0 + torch.exp(b * (p - l))) / (1.0 + torch.exp(b * (p - u))) )
        else:
            if p <= l:
                trust = torch.tensor([1.0]) #assign a trust of 1
            elif p > u:
                trust = torch.tensor([0.0]) #assign a trust of 0
            else:
                trust = (u - p) / (u - l + 0.0001)
        return trust

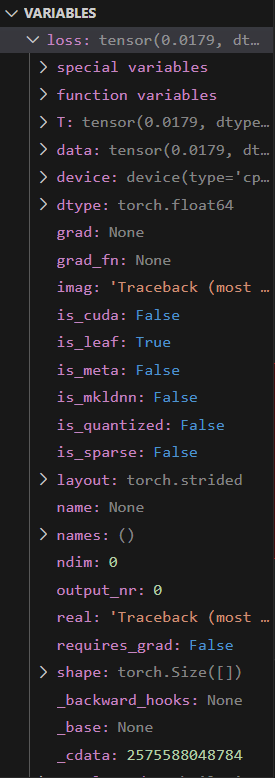

Here is the problem: trust = torch.tensor([1.0]) and trust = torch.tensor([0.0]) don’t have requires_grad=True set.

If both calls of “compute_trust” within the loop in Code B always return either torch.tensor([1.0]) or torch.tensor([0.0]), the trust tensor returned in Code B won’t have requires_grad=True either, which results in an error when loss.backward() is called.
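The failure can be reproduced in isolation. This is only a sketch: the names `one`, `zero` and the target values are made up, and 0-dim tensors are used instead of the one-element tensors from Code C to keep it short.

```python
import torch

one = torch.tensor(1.0)    # stands in for the constant returned when p <= l
zero = torch.tensor(0.0)   # stands in for the constant returned when p > u

trust = torch.zeros(2)
trust[0] = one * one       # both compute_trust calls hit a constant branch
trust[1] = one * zero

target = torch.tensor([0.9, 0.1])   # made-up observations
loss = torch.mean(torch.pow(trust - target, 2.0))
print(loss.requires_grad)  # nothing in the chain requires grad

try:
    loss.backward()
    failed = False
except RuntimeError as err:
    failed = True
    print(err)
```

Since no tensor in the chain requires grad, autograd has nothing to differentiate and backward() raises a RuntimeError.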

Changing trust = torch.tensor([1.0]) and trust = torch.tensor([0.0]) in Code C to the following makes the code work:

    if p <= l:
        trust = torch.tensor([1.0], requires_grad=True)
    elif p > u:
        trust = torch.tensor([0.0], requires_grad=True)
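One side effect that may be worth checking: a tensor created this way is a fresh leaf, so backward() runs without error, but the gradient lands on that new tensor and not on the model parameters. A small sketch, where `w` is a hypothetical stand-in for a bound such as l and `p` for a bin value:

```python
import torch

w = torch.tensor([0.3], requires_grad=True)  # hypothetical stand-in for a model parameter
p = torch.tensor([0.1])                      # hypothetical bin value

if p <= w:
    # constant branch with the fix applied: a new leaf tensor, not connected to w
    trust = torch.tensor([1.0], requires_grad=True)
else:
    trust = w - p

loss = torch.mean(torch.pow(trust - 0.5, 2.0))
loss.backward()      # no error any more

print(trust.grad)    # the gradient is stored on the new leaf
print(w.grad)        # w took no part in this graph
```

In the real model this only matters for cells where both calls end up in a constant branch. Cells that go through the linear branch still connect the loss to the parameters.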


The complete relevant code:

    ...
    def closure():
        diff = model(bin_c, obs_probs_idxs) - obs_probs_vect
        loss = torch.mean(torch.pow(diff, 2.0))
        optimizer.zero_grad()
        loss.backward()
        return loss

    optimizer.step(closure)
    ...

    class RobotTrustModel(torch.nn.Module):
        def __init__(self):
            super(RobotTrustModel, self).__init__()

            #beta is not relevant for artificial trust model (see BTM paper for natural trust)
            self.pre_beta_1 = Parameter(dtype(4.0 * np.ones(1)), requires_grad=True)
            self.pre_beta_2 = Parameter(dtype(4.0 * np.ones(1)), requires_grad=True)

            #instead of using lower bounds of 0 and upper bounds of 1, the code is more
            #stable when using the range [-10,10] and then converting back later to [0,1].
            #requires_grad=True means it is part of the gradient computation (i.e., the value should be updated when being optimized)
            self.pre_l_1 = Parameter(dtype(-10.0 * np.ones(1)), requires_grad=True)
            self.pre_u_1 = Parameter(dtype( 10.0 * np.ones(1)), requires_grad=True)
            self.pre_l_2 = Parameter(dtype(-10.0 * np.ones(1)), requires_grad=True)
            self.pre_u_2 = Parameter(dtype( 10.0 * np.ones(1)), requires_grad=True)

        def forward(self, bin_centers, obs_probs_idxs):
            # the bins and hols [row,col] indexes which are not nan
            #bin_centers and obs_probs_idxs are passed in
            n_diffs = obs_probs_idxs.shape[0] #the number of [row,col] indexes (max is nbins x nbins (625))
            trust = torch.zeros(n_diffs) #create a 1xn_diffs array of 0s

            if self.pre_l_1 > self.pre_u_1: #if the lower bound is greater than the upper bound
                buf = self.pre_l_1 #switch the l_1 and u_1 values
                self.pre_l_1 = self.pre_u_1
                self.pre_u_1 = buf

            if self.pre_l_2 > self.pre_u_2: #if the lower bound is greater than the upper bound
                buf = self.pre_l_2 #switch the l_2 and u_2 values
                self.pre_l_2 = self.pre_u_2
                self.pre_u_2 = buf

            l_1 = self.sigm(self.pre_l_1) #convert to [0,1] range
            u_1 = self.sigm(self.pre_u_1)
            beta_1 = self.pre_beta_1 * self.pre_beta_1 #want beta to be positive to compute trust using the artificial trust model

            l_2 = self.sigm(self.pre_l_2)
            u_2 = self.sigm(self.pre_u_2)
            beta_2 = self.pre_beta_2 * self.pre_beta_2

            for i in range(n_diffs): #loop over the number of the [row,col] indexes (max is nbins x nbins (625))
                # get the index for the row and column
                bin_center_idx_1 = obs_probs_idxs[i, 0] #get the ith cell row
                bin_center_idx_2 = obs_probs_idxs[i, 1] #get the ith cell col
                # pass l, u, beta & the bin to compute_trust for 1 & 2
                trust[i] = self.compute_trust(l_1, u_1, beta_1, bin_centers[bin_center_idx_1]) * self.compute_trust(l_2, u_2, beta_2, bin_centers[bin_center_idx_2])
                #computing the trust estimate for each cell based on the current lower and upper bounds (basically the 3d trust plot)

            if usecuda:
                trust = trust.cuda()

            return trust

        def compute_trust(self, l, u, b, p):
            #passing in lower bound capability belief, upper bound capability belief, beta, task requirement lambdabar
            # p is the value from one bin
            if b < -50: #this is for natural trust. This never happens for the artificial trust model.
                trust = 1.0 - 1.0 / (b * (u - l)) * torch.log( (1.0 + torch.exp(b * (p - l))) / (1.0 + torch.exp(b * (p - u))) )
                print(f"b {trust}")
            else: #as long as you pass in a positive beta, we will be calculating artificial trust which doesn't depend on beta
                if p <= l: #if lambdabar is less than the lower bound capability belief
                    #trust = torch.tensor([1.0]) # OLD
                    trust = torch.tensor([1.0], requires_grad=True) #assign a trust of 1
                elif p > u: #if lambdabar is greater than the upper bound capability belief
                    #trust = torch.tensor([0.0]) # OLD
                    trust = torch.tensor([0.0], requires_grad=True) #assign a trust of 0
                else:
                    trust = (u - p) / (u - l + 0.0001) #assign trust as a constant slope between u and l. 0.0001 is to not divide by 0.

            if usecuda:
                trust = trust.cuda()

            return trust #returns the trust in human agent given lower bound l, upper bound u, beta term b, and task requirement lambdabar p

        def sigm(self, x): #sigmoid function to convert [-10,10] (really [-inf,inf]) to [0,1]
            return 1 / (1 + torch.exp(-x))

        def sigmoid(self, lambdabar, agent_c):
            #takes in task requirement for one dimension and the agent's actual capability for that dimension
            #calculates true trust to determine the stochastic task outcome
            #if lambdabar == agent_c, the sigmoid output is 0.5
            eta = 1/50.0 #is a good value through testing for good capability updating
            #eta = 1/5.0
            #eta = 1/500.0
            return 1 / (1 + math.exp((lambdabar - agent_c)/eta))