Hi, I like to selectively quantize layers as some layers in my project just serve as a regularizer. So, I tried a few ways and got confused with the following results.

class LeNet(nn.Module):

def __init__(self):

super().__init__()

self.l1 = nn.Linear(28 * 28, 10)

self.relu1 = nn.ReLU(inplace=True)

def forward(self, x):

return self.relu1(self.l1(x.view(x.size(0), -1)))

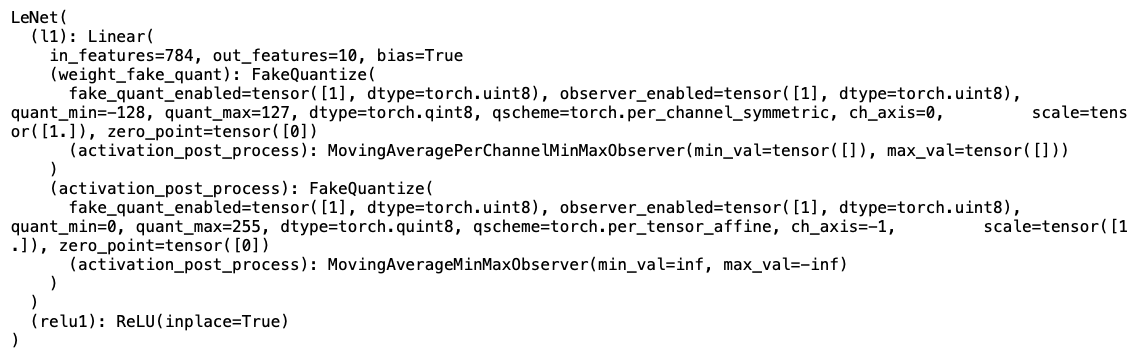

1. selective qconfig assignment and top level transform

model = LeNet()

model.l1.qconfig = torch.quantization.get_default_qat_qconfig()

torch.quantization.prepare_qat(model, inplace=True)

print(model)

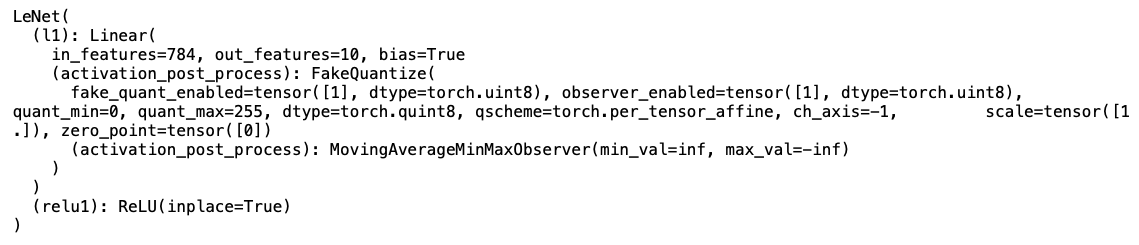

2. selective qconfig assignment and selective transform

model2 = LeNet()

model2.l1.qconfig = torch.quantization.get_default_qat_qconfig()

torch.quantization.prepare_qat(model2.l1, inplace=True)

print(model2)

You can see that the 2nd case doesn’t have (weight_fake_quant): FakeQuantize. Is this a correct behavior? Shouldn’t both yield the same transformed model?

Also, if there is a better way to do selective quantization (like different bits, quant vs no quant), please advise.