I am using an LSTM with DataParallel. First, it gives this warning:

RuntimeWarning: RNN module weights are not part of single contiguous chunk of memory. This means they need to be compacted at every call, possibly greatly increasing memory usage. To compact weights again call flatten_parameters().

self.num_layers, self.dropout, self.training, self.bidirectional)

Then, according to this post, I added the following in the forward function before calling the LSTM:

self.lstm.flatten_parameters()
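
A minimal sketch of that placement, assuming a hypothetical `Encoder` module and made-up sizes rather than the actual model from this post. The `DataParallel` wrap is applied only when more than one GPU is visible; on CPU, `flatten_parameters()` does nothing.

```python
import torch
import torch.nn as nn


class Encoder(nn.Module):
    """Hypothetical module, for illustration only."""

    def __init__(self, input_size=8, hidden_size=16, num_layers=1):
        super().__init__()
        self.lstm = nn.LSTM(input_size, hidden_size, num_layers, batch_first=True)

    def forward(self, x):
        # Re-compact the LSTM weights into one contiguous chunk
        # before every call, as the warning suggests.
        self.lstm.flatten_parameters()
        output, (h_n, c_n) = self.lstm(x)
        return output


model = Encoder()
x = torch.randn(4, 5, 8)  # (batch, seq_len, input_size), arbitrary values

# Wrap in DataParallel only if several GPUs are available (assumption:
# this mirrors the multi-GPU setup described in the post).
if torch.cuda.device_count() > 1:
    model = nn.DataParallel(model.cuda())
    x = x.cuda()

out = model(x)
```
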

But this time I got this error:

set_storage is not allowed on Tensor created from .data or .detach()

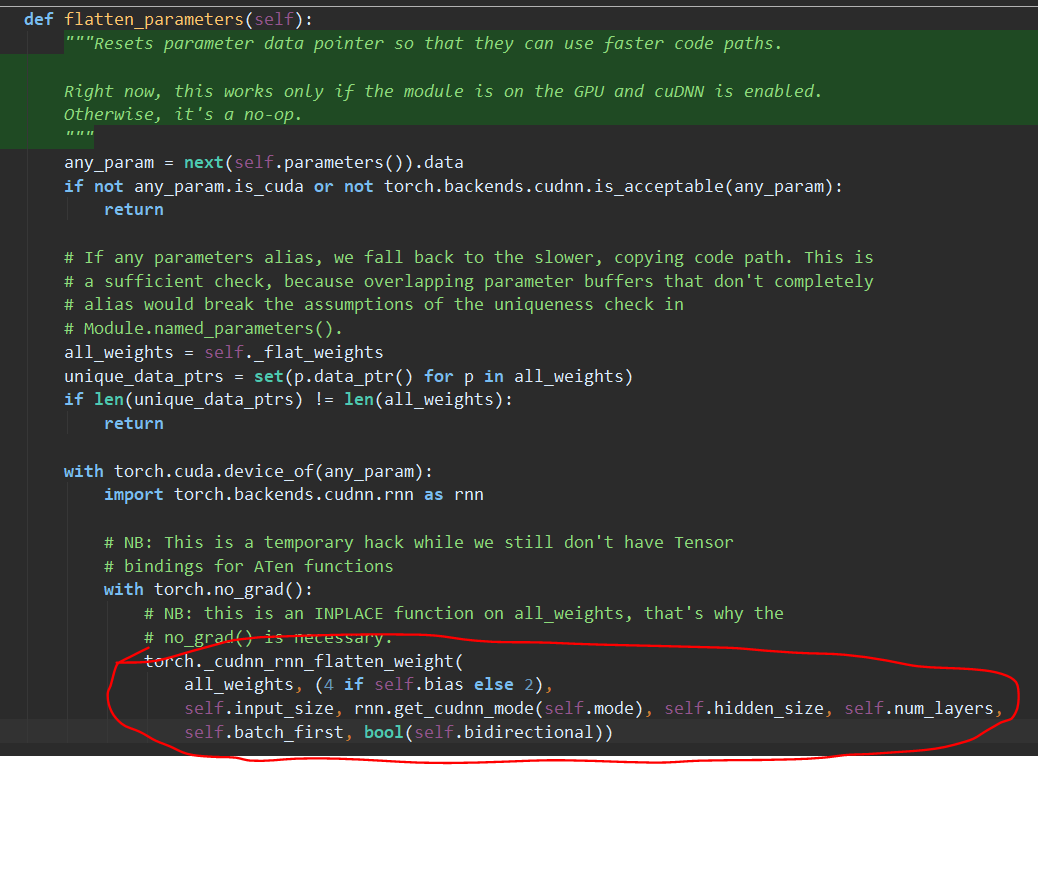

The error occurs at the last line of the flatten_parameters function in the PyTorch source code,