I’m having a hard time understanding why importing torch is so slow in recent versions, including the latest 2.0 release. I suspect it might be a cache issue, but I can’t pin it down. I’ve also ruled out that it’s only on my end: my buddy’s Mac has a similar issue. We’re even on different macOS versions, me on Ventura and him on Big Sur.

Has anybody else run into this? Currently, importing torch takes 3.5 s on average for me.
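One way to test the cache theory is to time the import in several fresh interpreters: if only the first run is slow and later runs are fast, a cold file cache is a plausible suspect. Below is a minimal sketch using only the standard library; fresh_import_times and summarize are hypothetical helper names, and the timer wraps only the import statement, not interpreter startup.

```python
import statistics
import subprocess
import sys

# Child program: times only the import statement, excluding interpreter startup.
CHILD = (
    "import time\n"
    "t0 = time.perf_counter()\n"
    "import {module}\n"
    "print(time.perf_counter() - t0)\n"
)


def fresh_import_times(module: str, runs: int = 5) -> list[float]:
    """Import `module` in `runs` separate interpreters; return each import time in seconds."""
    times = []
    for _ in range(runs):
        result = subprocess.run(
            [sys.executable, "-c", CHILD.format(module=module)],
            capture_output=True,
            text=True,
            check=True,  # raise if the module cannot be imported
        )
        times.append(float(result.stdout.strip()))
    return times


def summarize(times: list[float]) -> tuple[float, float]:
    """Return (first run, mean of the remaining runs); the first run is the closest to a cold cache."""
    first, rest = times[0], times[1:]
    return first, (statistics.mean(rest) if rest else float("nan"))
```

For example, summarize(fresh_import_times("torch")) returns the first run's import time and the mean of the later runs, in seconds.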

Thanks

Digging deeper, I ran a profiler on torch’s __init__.py and got the following statistics:

```
ncalls     tottime  percall  cumtime  percall  filename:lineno(function)
1287         1.824    0.001    1.824    0.001  {built-in method io.open_code}  <--------------
45/25        1.045    0.023    1.579    0.063  {built-in method _imp.create_dynamic}  <--------------
1287         0.136    0.000    0.136    0.000  {built-in method marshal.loads}
5408         0.064    0.000    0.064    0.000  {built-in method posix.stat}
2841/2785    0.050    0.000    0.240    0.000  {built-in method builtins.__build_class__}
424          0.047    0.000    0.080    0.000  assumptions.py:596(__init__)
1635/1       0.042    0.000    4.315    4.315  {built-in method builtins.exec}
2            0.040    0.020    0.040    0.020  {built-in method _ctypes.dlopen}
51906        0.026    0.000    0.062    0.000  {built-in method builtins.getattr}
1298         0.026    0.000    0.026    0.000  {method '__exit__' of '_io._IOBase' objects}
164          0.023    0.000    0.023    0.000  {built-in method posix.listdir}
1769         0.020    0.000    0.131    0.000  <frozen importlib._bootstrap_external>:1604(find_spec)
1287         0.019    0.000    0.019    0.000  {method 'read' of '_io.BufferedReader' objects}
```
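The table above has the layout of Python's cProfile/pstats output sorted by tottime. Assuming that is what produced it, a sketch like the following should reproduce it. profile_import is a hypothetical helper, and it needs to run in a fresh interpreter, because a module that is already in sys.modules is not imported again and would profile as almost free.

```python
import cProfile
import importlib
import io
import pstats


def profile_import(module: str, top: int = 15) -> str:
    """Profile a first-time import of `module`; return the top rows sorted by tottime."""
    profiler = cProfile.Profile()
    profiler.enable()
    importlib.import_module(module)  # only meaningful if `module` is not imported yet
    profiler.disable()
    out = io.StringIO()
    pstats.Stats(profiler, stream=out).sort_stats("tottime").print_stats(top)
    return out.getvalue()
```

In a fresh interpreter, print(profile_import("torch")) prints a table in the same format as the one above.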

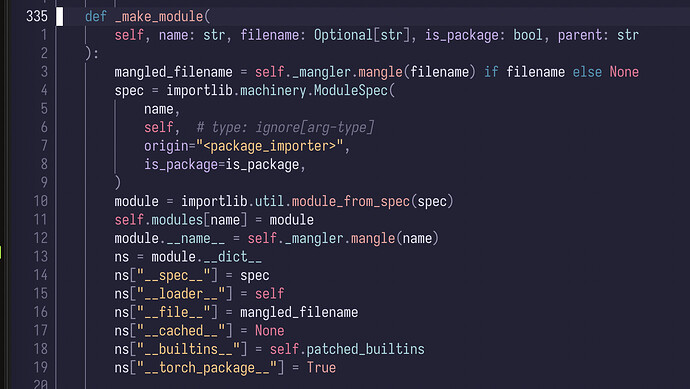
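The io.open_code row works out to roughly 1.4 ms per call (1.824 s / 1287 calls), and its call count matches marshal.loads and BufferedReader.read, which suggests about one open per compiled module file. To check whether plain file opening is slow on a given machine, independent of torch's own code, one could time opening every source and bytecode file of a package directly. A sketch under that assumption; time_open_code is a hypothetical name, and it times open plus read together, which the profile reports separately.

```python
import importlib.util
import io
import time
from pathlib import Path


def time_open_code(package: str) -> tuple[int, float]:
    """Open and read every .py/.pyc file under `package` via io.open_code.

    Returns (number_of_files, total_seconds). Does not import the package.
    """
    spec = importlib.util.find_spec(package)
    if spec is None or not spec.submodule_search_locations:
        raise ValueError(f"{package!r} is not an installed package")
    files = [
        path
        for root in spec.submodule_search_locations
        for path in Path(root).rglob("*")
        if path.suffix in {".py", ".pyc"} and path.is_file()
    ]
    start = time.perf_counter()
    for path in files:
        # io.open_code is the same call importlib uses to read module files.
        with io.open_code(str(path)) as f:
            f.read()
    return len(files), time.perf_counter() - start
```

Running time_open_code("torch") twice in a row and comparing the two totals gives a rough cold-versus-warm cache comparison.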

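As far as I can tell, _imp.create_dynamic is not part of importlib.machinery.ModuleSpec; it is the CPython internal that importlib.machinery.ExtensionFileLoader uses to load compiled extension modules (.so files) from a ModuleSpec. It is called once inside package_importer.py here: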

I’m really confused.
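On the _imp.create_dynamic row: in CPython, ExtensionFileLoader.create_module calls _imp.create_dynamic to load each compiled extension module. A sketch that lists the extension modules loaded in the current process, so their file paths can be inspected after import torch; loaded_extension_modules is a hypothetical helper.

```python
import importlib.machinery
import sys


def loaded_extension_modules() -> list[tuple[str, str]]:
    """Return sorted (module name, file path) pairs for loaded compiled extension modules.

    These modules were loaded by ExtensionFileLoader, which goes through
    _imp.create_dynamic under the hood.
    """
    found = []
    for name, module in list(sys.modules.items()):
        spec = getattr(module, "__spec__", None)  # None for e.g. __main__ or blocked entries
        if spec is not None and isinstance(spec.loader, importlib.machinery.ExtensionFileLoader):
            found.append((name, spec.origin))
    return sorted(found)
```

For example, after import torch, looping over loaded_extension_modules() and printing each pair shows which .so files were loaded and from where.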