Hello,

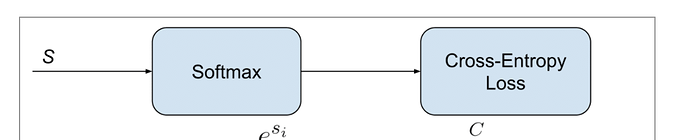

My network has Softmax activation plus a Cross-Entropy loss, which some refer to Categorical Cross-Entropy loss. See:

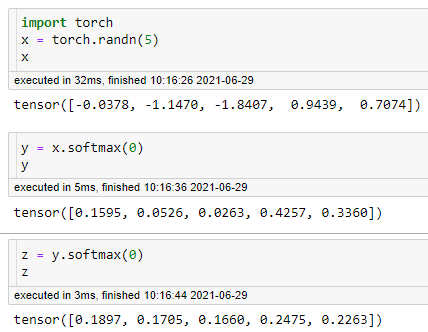

In binary classification, do I need one-hot encoding to work in a network like this in PyTorch? I am using Integer Encoding. Just as matter of fact, here are some outputs WITHOUT Softmax activation (batch = 4):

outputs: tensor([[ 0.2439, 0.0890],

[ 0.2258, 0.1119],

[-0.2149, 0.2282],

[ 0.0222, -0.1259]]

And here are some outputs WITH Softmax (Softmax activation before Cross-Entropy):

outputs: tensor([[0.3662, 0.6338],

[0.4209, 0.5791],

[0.4611, 0.5389],

[0.5497, 0.4503]]

As expected, the elements of the n-dimensional output Tensor lie in the range [0,1] and sum to 1. Honestly, I see no remarkable in the loss functions in both situations.

But, the whole point is to know whether I can use integer encoding with Softmax + Cross-Entropy in PyTorch.

Thanks.