Hello,

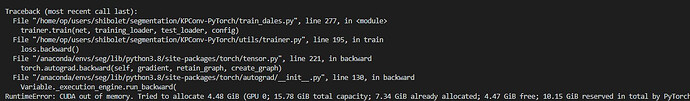

I’m currently working on a semantic segmentation task on point clouds, and I’ve run into a memory problem. I have about 30 point cloud samples that I want to train on, and training crashes with:

This happens even when I train on just a single sample.

So I figure I need to split the data into tiles (with overlap) on the CPU and send them to the GPU one by one. I’ve seen some examples of doing this with regular images, but I’m pretty confused about how to do it with point clouds.
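To make the idea concrete, here is a rough sketch of the kind of tiling I have in mind. It is only an illustration under my own assumptions: the points are a NumPy array of shape `(N, 3+)` with x and y in the first two columns, tiles are square in the XY plane, and the names `tile_point_cloud`, `tile_size`, and `overlap` are made up for this example.

```python
import numpy as np

def tile_point_cloud(points, tile_size, overlap):
    """Yield index arrays for overlapping square XY tiles (hypothetical helper).

    points:    (N, 3+) array, columns 0 and 1 are assumed to be x and y.
    tile_size: side length of each tile, in the same units as the points.
    overlap:   how far neighbouring tiles overlap, must be < tile_size.
    """
    if not 0 <= overlap < tile_size:
        raise ValueError("overlap must be in [0, tile_size)")
    stride = tile_size - overlap

    xy = points[:, :2]
    mins = xy.min(axis=0)
    maxs = xy.max(axis=0)

    # Tile origins along each axis; the small epsilon keeps the last
    # origin when the extent is an exact multiple of the stride.
    xs = np.arange(mins[0], maxs[0] + 1e-9, stride)
    ys = np.arange(mins[1], maxs[1] + 1e-9, stride)

    for x0 in xs:
        for y0 in ys:
            mask = (
                (xy[:, 0] >= x0) & (xy[:, 0] < x0 + tile_size)
                & (xy[:, 1] >= y0) & (xy[:, 1] < y0 + tile_size)
            )
            idx = np.nonzero(mask)[0]
            if idx.size:  # skip empty tiles
                yield idx

# Example with random points standing in for one real sample
rng = np.random.default_rng(0)
cloud = rng.uniform(0.0, 10.0, size=(1000, 3))
tiles = list(tile_point_cloud(cloud, tile_size=4.0, overlap=1.0))
```

Each index array could presumably be used inside a dataset's `__getitem__` to return one tile at a time, so that only a single tile has to sit on the GPU, but I’m not sure whether that is the idiomatic way to do it.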

Is there an elegant way to do this in the Data Loader?

Thanks!