Hi,

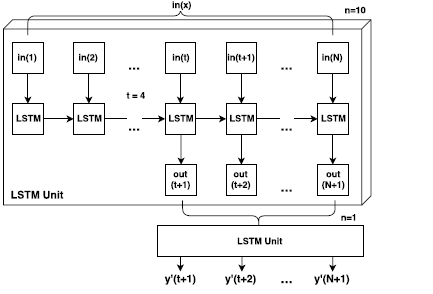

I would like to create two stacked LSTMs with different numbers of hidden layers to predict time-series data: LSTM_1 contains 10 layers and LSTM_2 contains 1 layer. The proposed neural network architecture is illustrated in the figure above.
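For reference, `nn.LSTM` uses `num_layers` for the number of stacked layers and `hidden_size` for the width of each layer. The returned hidden and cell states therefore have shape `(num_layers, batch, hidden_size)`. The sketch below stacks two such modules as described (10 layers, then 1 layer) and prints the resulting shapes. The sequence length of 20, the batch size of 60, and the default `batch_first=False` layout are assumptions chosen only for illustration.

```python
import torch
import torch.nn as nn

# Assumed sizes for illustration: 20 time steps, batch of 60, 1 feature per step.
seq_len, batch, nb_features = 20, 60, 1

# num_layers stacks recurrent layers; hidden_size is the width of each layer.
lstm_1 = nn.LSTM(input_size=nb_features, hidden_size=100, num_layers=10)
lstm_2 = nn.LSTM(input_size=100, hidden_size=100, num_layers=1)

x = torch.randn(seq_len, batch, nb_features)  # (seq_len, batch, features) since batch_first=False

# nn.LSTM returns the top layer's output at every step, plus the final (h_n, c_n) of every layer.
out_1, (h_1, c_1) = lstm_1(x)
out_2, (h_2, c_2) = lstm_2(out_1)  # LSTM_2 consumes the top-layer outputs of LSTM_1

print(out_1.shape)  # torch.Size([20, 60, 100])
print(h_1.shape)    # torch.Size([10, 60, 100]): one state per stacked layer
print(out_2.shape)  # torch.Size([20, 60, 100])
print(h_2.shape)    # torch.Size([1, 60, 100])
```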

```python
import torch
import torch.nn as nn
from torch.autograd import Variable


class Sequence(nn.Module):
    # Earlier signature: (self, nb_features=1, hidden_size=100, nb_layers=10, dropout=0.5)
    def __init__(self, nb_features=1, hidden_size_1=100, hidden_size_2=100, nb_layers_1=10, nb_layers_2=1, dropout=0.5):
        super(Sequence, self).__init__()
        self.nb_features = nb_features
        self.hidden_size_1 = hidden_size_1
        self.hidden_size_2 = hidden_size_2
        self.nb_layers_1 = nb_layers_1
        self.nb_layers_2 = nb_layers_2
        # LSTM_1 with nb_layers_1 stacked layers, followed by LSTM_2 with nb_layers_2 layers
        self.lstm_1 = nn.LSTM(self.nb_features, self.hidden_size_1, self.nb_layers_1, dropout=dropout)
        self.lstm_2 = nn.LSTM(self.hidden_size_1, self.hidden_size_2, self.nb_layers_2, dropout=dropout)
        self.lin = nn.Linear(self.hidden_size_2, 1)

    def forward(self, input):
        # Initial hidden and cell states, shaped (num_layers, batch, hidden_size)
        h_t1 = Variable(torch.zeros(self.nb_layers_1, input.size()[1], self.hidden_size_1))
        c_t1 = Variable(torch.zeros(self.nb_layers_1, input.size()[1], self.hidden_size_1))
        h_t2 = Variable(torch.zeros(self.nb_layers_2, input.size()[1], self.hidden_size_2))
        c_t2 = Variable(torch.zeros(self.nb_layers_2, input.size()[1], self.hidden_size_2))
        outputs = []
        for i, input_t in enumerate(input.chunk(input.size(1))):
            h_t1, c_t1 = self.lstm_1(input_t, (h_t1, c_t1))
            h_t2, _ = self.lstm_2(h_t1, (h_t2, c_t2))
            output = self.lin(h_t2[-1])
            outputs += [output]
        outputs = torch.stack(outputs, 1).squeeze(2)
        return outputs
```

However, running the code above raises `RuntimeError: Expected hidden[0] size (3, 60, 100), got (1, 60, 100)`. Is the code above correct? Could you please give some suggestions? Many thanks.
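A likely cause, going by the documented return value of `nn.LSTM`, is that the module returns `output, (h_n, c_n)`, not `(h, c)`. In the loop, `h_t1, c_t1 = self.lstm_1(...)` therefore binds `h_t1` to the top layer's output sequence, shape `(chunk_len, batch, hidden_size_1)`, and `c_t1` to the `(h_n, c_n)` tuple. On the next iteration that output is passed back in as the initial hidden state. An LSTM with 3 layers expects `(3, 60, 100)` and receives `(1, 60, 100)`. This reading assumes the model was built with `nb_layers_1=3` and one-step chunks, which is what the numbers in the message suggest. Similarly, `h_t2, _ = self.lstm_2(...)` discards LSTM_2's returned state.

The minimal fix inside the loop is to unpack `out_1, (h_t1, c_t1) = self.lstm_1(input_t, (h_t1, c_t1))` and `out_2, (h_t2, c_t2) = self.lstm_2(out_1, (h_t2, c_t2))`, then apply `self.lin` to `out_2`. Because `nn.LSTM` already iterates over the time dimension, the loop can also be dropped entirely, as in the sketch below. The sketch assumes input of shape `(seq_len, batch, nb_features)` and one prediction per time step. It also omits `Variable`, which has been merged into `Tensor` since PyTorch 0.4.

```python
import torch
import torch.nn as nn


class Sequence(nn.Module):
    def __init__(self, nb_features=1, hidden_size_1=100, hidden_size_2=100,
                 nb_layers_1=10, nb_layers_2=1, dropout=0.5):
        super().__init__()
        # nn.LSTM applies dropout only between stacked layers, so it is disabled
        # for a single-layer LSTM (otherwise PyTorch emits a warning).
        self.lstm_1 = nn.LSTM(nb_features, hidden_size_1, nb_layers_1,
                              dropout=dropout if nb_layers_1 > 1 else 0.0)
        self.lstm_2 = nn.LSTM(hidden_size_1, hidden_size_2, nb_layers_2,
                              dropout=dropout if nb_layers_2 > 1 else 0.0)
        self.lin = nn.Linear(hidden_size_2, 1)

    def forward(self, input):
        # input: (seq_len, batch, nb_features), the default batch_first=False layout.
        # Unpack as output, (h_n, c_n); omitting the initial state means zeros are used.
        out_1, (h_1, c_1) = self.lstm_1(input)
        out_2, (h_2, c_2) = self.lstm_2(out_1)
        # One prediction per time step: (seq_len, batch, 1) -> (batch, seq_len).
        return self.lin(out_2).squeeze(2).t()


model = Sequence(nb_layers_1=3)
x = torch.randn(20, 60, 1)  # 20 time steps, batch of 60, 1 feature
y = model(x)
print(y.shape)  # torch.Size([60, 20])
```

Passing explicit zero states, as in the original code, is optional: when `(h_0, c_0)` is omitted, `nn.LSTM` initializes them to zeros.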