How do I transform a tensor into an image?

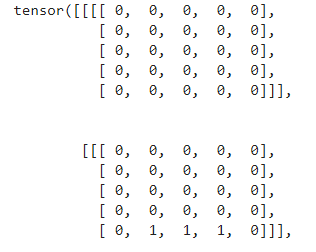

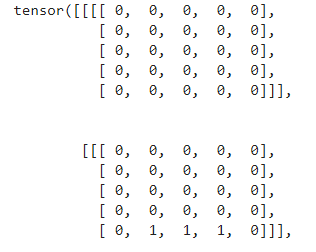

torch.Size([3687, 1, 5, 5])

Your tensor is already a set of images. The size (3687, 1, 5, 5) means the tensor contains a batch of 3687 images of size (5, 5) with 1 channel.
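As a minimal sketch of what that means in practice, one entry of the batch can be turned into a plain 2-D array that image libraries accept. The tensor `batch` below is random stand-in data rather than the tensor from the question, the helper name `patch_to_uint8` is made up for illustration, and the scaling to 0..255 assumes the values lie in [0, 1].

```python
import torch

# Stand-in data with the same shape as in the question (assumption: values in [0, 1]).
batch = torch.rand(3687, 1, 5, 5)


def patch_to_uint8(patch: torch.Tensor):
    """Convert one (1, H, W) patch to an (H, W) uint8 NumPy array."""
    img = patch.squeeze(0)                   # drop the channel dimension -> (H, W)
    img = (img.clamp(0, 1) * 255).round()    # scale to the 0..255 range
    return img.to(torch.uint8).cpu().numpy()


first_image = patch_to_uint8(batch[0])
print(first_image.shape, first_image.dtype)
```

The resulting array can then be passed to something like matplotlib's `imshow` or PIL's `Image.fromarray` for display.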

@arman-yekkehkhani Yes, but I want to recover the first and the second image so that I can concatenate them into a final image.

You can pick any slice from that tensor, for example:

a = torch.ones((3687, 1, 5, 5))

first = a[0] # size (1, 5, 5)

second = a[1] # size (1, 5, 5)
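If the goal is to join two slices, a sketch along these lines might help. It assumes the two patches should simply sit next to each other (or on top of each other), which may not match how they were positioned in the source image.

```python
import torch

a = torch.ones((3687, 1, 5, 5))
first, second = a[0], a[1]                          # each of size (1, 5, 5)

# Concatenate along the width (last dimension): side by side.
side_by_side = torch.cat([first, second], dim=2)    # size (1, 5, 10)

# Concatenate along the height: one above the other.
stacked = torch.cat([first, second], dim=1)         # size (1, 10, 5)

print(side_by_side.shape, stacked.shape)
```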

@arman-yekkehkhani Thanks for the reply, but I want to display part of the 100 x 100 image by concatenating these 3687 tensors.

Could you please explain the problem in more detail? What is that tensor, and what do you want to obtain from it?

image1 = image.extract_patches_2d(imag['taille100'], (5, 5))

@arman-yekkehkhani I want to get the (i, j) position of each patch, that is, the x, y coordinates at which each patch was extracted from the first image (imag).

Assuming you are using sklearn for extracting patches, you can use reconstruct_from_patches_2d to reconstruct your image.
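A minimal sketch of that round trip, using a synthetic 100 x 100 array as a stand-in for the real image. It assumes the complete set of patches is available in the order `extract_patches_2d` returned them; a tensor of size (N, 1, 5, 5) would first need something like `.squeeze(1).numpy()` to get an (N, 5, 5) array.

```python
import numpy as np
from sklearn.feature_extraction import image

# Synthetic stand-in for the original 100 x 100 image.
original = np.arange(100 * 100, dtype=np.float64).reshape(100, 100)

# Extract every overlapping 5 x 5 patch (no max_patches, so nothing is sampled).
patches = image.extract_patches_2d(original, (5, 5))
print(patches.shape)  # (9216, 5, 5), i.e. 96 * 96 patches

# Rebuild the image; overlapping regions are averaged.
reconstructed = image.reconstruct_from_patches_2d(patches, (100, 100))
print(np.allclose(reconstructed, original))
```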

P.S. In the first version of your response, you mistakenly used torch.exp instead of torch.nn.Softmax() for computing the softmax.

@arman-yekkehkhani How do I get the x, y values of a patch?

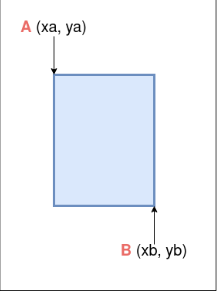

Assume your image has size h x w (h rows, w columns). With a patch size of a x b, the total number of patches is (h - a + 1) x (w - b + 1), and extract_patches_2d returns them row by row. So, given the index of a patch (which is what you have), you can compute the location of the patch's top-left corner with the following formula, where x is the column, y is the row, and (w - b + 1) is the number of patches per row:

x = idx % (w - b + 1)

y = idx // (w - b + 1)
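Here is a small sketch of that index arithmetic. It assumes all patches were extracted in row-major order (which is what sklearn's `extract_patches_2d` does when `max_patches` is not set); the function name `patch_top_left` is hypothetical. Each row of patches holds w - b + 1 entries, so that is the divisor; for a square image with square patches it equals h - a + 1.

```python
def patch_top_left(idx, image_shape, patch_shape):
    """Return (x, y) = (column, row) of the top-left corner of patch `idx`.

    Assumes every overlapping patch was extracted with stride 1,
    row by row (row-major order).
    """
    h, w = image_shape
    a, b = patch_shape
    n_rows = h - a + 1            # patch positions along the height
    n_cols = w - b + 1            # patch positions along the width
    if not 0 <= idx < n_rows * n_cols:
        raise IndexError(f"patch index {idx} out of range")
    y, x = divmod(idx, n_cols)    # y = idx // n_cols, x = idx % n_cols
    return x, y


print(patch_top_left(0, (100, 100), (5, 5)))    # (0, 0)
print(patch_top_left(95, (100, 100), (5, 5)))   # (95, 0)
print(patch_top_left(97, (100, 100), (5, 5)))   # (1, 1)
```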

@arman-yekkehkhani Is h x w the size of the original image? And what does idx mean?

Yes. And idx is the index of a patch within the batch. In your example, there are 3687 patches.

@arman-yekkehkhani

x = idx % (w - b + 1)

y = idx // (w - b + 1)

I want to calculate x, y for every patch of the image (xa, ya), not for just one patch.
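One way to get the coordinates of every patch at once is to apply the same arithmetic to an array of indices. This is only a sketch: the 100 x 100 image size and 5 x 5 patch size are taken from earlier in the thread, and it assumes the full, row-major set of patches.

```python
import numpy as np

h, w = 100, 100    # image size (rows, columns)
a, b = 5, 5        # patch size (rows, columns)

n_rows = h - a + 1
n_cols = w - b + 1

# One index per patch, in extraction order.
idx = np.arange(n_rows * n_cols)

# Row (y) and column (x) of each patch's top-left corner.
ys, xs = np.divmod(idx, n_cols)

# coords[k] is (x, y) for patch k.
coords = np.stack([xs, ys], axis=1)
print(coords.shape)    # (9216, 2)
print(coords[97])      # [1 1]
```

`np.unravel_index(idx, (n_rows, n_cols))` gives the same (row, column) pairs if you prefer a named helper.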