I am reading the documentation about jvp. I believe it is used to compute the dot product between the Jacobian and v. However, I find its behavior strange when the inputs are 2-D matrices.

According to its usage: torch.autograd.functional.jvp(func, inputs, v=None), we can define the func and inputs as follows:

import torch

def func(x):
    return 2 * x.sum(dim=1)

x = torch.ones(3, 2, requires_grad=True)

# v has the same shape as x; floats are used so its dtype matches x
v = torch.tensor([[1., 2.], [3., 4.], [5., 6.]])

res = torch.autograd.functional.jvp(func, x, v)

After running the above code:

res[0] = tensor([4,4,4]) # which is the output of func(x)

res[1] = tensor([6, 14, 22]) # which is the dot product between jacobian and v

The result of res[1] is somewhat unintuitive. Let’s define y = func(x), which means y = 2*x.sum(dim=1) :

y[0] = 2 * x[0,0] + 2 * x[0,1]

y[1] = 2 * x[1,0] + 2 * x[1,1]

y[2] = 2 * x[2,0] + 2 * x[2,1]

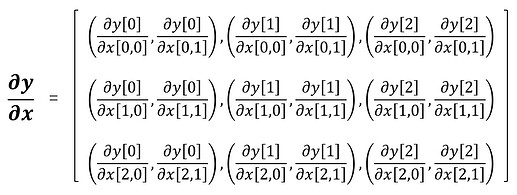

The Jacobian matrix of y with respect to x is:

grad = torch.tensor([[(2,2),(0,0),(0,0)],
                     [(0,0),(2,2),(0,0)],
                     [(0,0),(0,0),(2,2)]])

grad.shape == torch.Size([3, 3, 2])
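
To double-check the hand-derived Jacobian, here is a minimal sketch that asks PyTorch for it directly with torch.autograd.functional.jacobian. It assumes the same func and an all-ones x of shape [3, 2]; the name jac is only illustrative.

```python
import torch

def func(x):
    return 2 * x.sum(dim=1)

x = torch.ones(3, 2)

# For one tensor input and one tensor output, the result has shape
# output.shape + input.shape, here [3] + [3, 2].
jac = torch.autograd.functional.jacobian(func, x)

print(jac.shape)
print(jac)
```

Slice jac[i] holds the partial derivatives of y[i] with respect to every entry of x, so only row i of that slice is non-zero.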

v = torch.tensor([[1,2],

[3,4],

[5,6]])

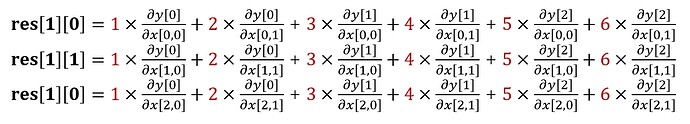

According to res[1] = tensor([6, 14, 22]), we can infer that the product sums over both input dimensions, i.e. res[1][i] is the sum over j and k of grad[i,j,k] * v[j,k]:
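
A minimal sketch that reproduces these numbers, assuming the Jacobian is laid out as [output index, input row, input column] and that both input dimensions are summed against v. The einsum subscripts and the names out_einsum and out_matmul are my own choices for illustration.

```python
import torch

# Jacobian of y = 2 * x.sum(dim=1) for a [3, 2] input: grad[i, i, :] = 2, zero elsewhere.
grad = torch.zeros(3, 3, 2)
for i in range(3):
    grad[i, i] = 2.0

v = torch.tensor([[1., 2.], [3., 4.], [5., 6.]])

# Contract both input dimensions (j, k) of the Jacobian with v.
out_einsum = torch.einsum("ijk,jk->i", grad, v)

# Same contraction after flattening the input dimensions:
# a [3, 6] matrix times a length-6 vector.
out_matmul = grad.reshape(3, 6) @ v.reshape(6)

print(out_einsum)  # values 6, 14, 22
print(out_matmul)  # same values
```

Seen this way, the operation is an ordinary matrix-vector product once x and v are flattened into vectors of length 6.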

Such an operation is not the standard product between two matrices. Additionally, the output of jvp has shape torch.Size([3]), while the inputs x and v have shape torch.Size([3, 2]). So what do the outputs of jvp mean? What does the gradient in res[1] stand for, and where can we use it?
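
One reading that seems consistent with the numbers above is that res[1] is the directional derivative of func at x along v, i.e. how fast each y[i] changes when x moves in the direction v. Below is a rough sketch that compares jvp against a finite difference. The step size eps and the use of float64 are assumptions made for this check, not something taken from the documentation.

```python
import torch

def func(x):
    return 2 * x.sum(dim=1)

# float64 keeps the finite-difference rounding error small
x = torch.ones(3, 2, dtype=torch.float64)
v = torch.tensor([[1., 2.], [3., 4.], [5., 6.]], dtype=torch.float64)

# jvp returns a tuple: (func(x), Jacobian-vector product)
_, jvp_out = torch.autograd.functional.jvp(func, x, v)

eps = 1e-3  # hypothetical step size chosen for this check
fd = (func(x + eps * v) - func(x)) / eps

print(jvp_out)
print(fd)
```

If this reading is right, the output has the shape of y because it describes a change in y, not in x.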