Hey, I have a time series prediction problem.

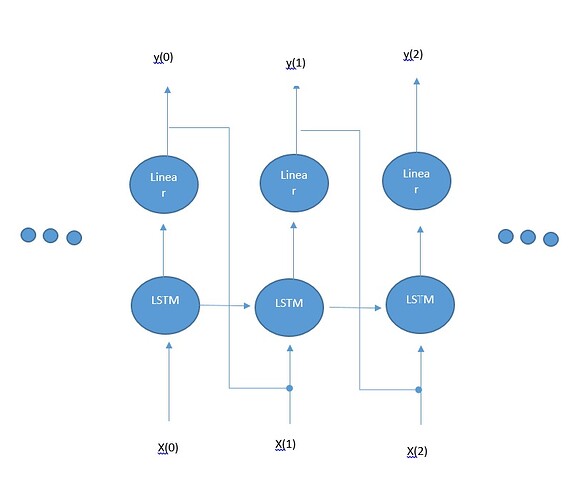

I would like a model that uses an LSTM followed by a linear regression layer, and that feeds the linear layer's output from the previous time step back in as an additional input feature for the LSTM at the next time step.

I have added the picture above to clarify.

Is there a simple way to implement this in PyTorch?

I’m having trouble because the input to the LSTM is a whole sequence, say x(0), x(1), …, x(200), and the LSTM returns the outputs for every time step plus the final hidden and cell states in one shot, so I can’t feed the linear layer's prediction back in between time steps.
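To make the idea concrete, here is a minimal sketch of the step-by-step loop I have in mind, assuming `nn.LSTMCell` can be used in place of `nn.LSTM` so that each time step is processed separately. The class name `FeedbackLSTM`, the layer sizes, and the choice of zero for the "previous prediction" at the first step are my own placeholders, not something I know to be the recommended approach.

```python
import torch
import torch.nn as nn


class FeedbackLSTM(nn.Module):
    """LSTM whose input at step t is [x(t), y(t-1)], with y produced by a linear head."""

    def __init__(self, input_size, hidden_size, output_size=1):
        super().__init__()
        self.hidden_size = hidden_size
        self.output_size = output_size
        # The cell sees the raw features plus the previous prediction.
        self.cell = nn.LSTMCell(input_size + output_size, hidden_size)
        self.head = nn.Linear(hidden_size, output_size)

    def forward(self, x):
        # x: (batch, seq_len, input_size)
        batch, seq_len, _ = x.shape
        h = x.new_zeros(batch, self.hidden_size)
        c = x.new_zeros(batch, self.hidden_size)
        # Assumption: there is no prediction before the first step, so start from zeros.
        y_prev = x.new_zeros(batch, self.output_size)
        outputs = []
        for t in range(seq_len):
            # Concatenate the current features with the previous prediction.
            step_in = torch.cat([x[:, t, :], y_prev], dim=1)
            h, c = self.cell(step_in, (h, c))
            y_prev = self.head(h)
            outputs.append(y_prev)
        # Stack the per-step predictions: (batch, seq_len, output_size)
        return torch.stack(outputs, dim=1)


model = FeedbackLSTM(input_size=3, hidden_size=8)
x = torch.randn(4, 20, 3)
y = model(x)
```

The explicit Python loop is presumably slower than a single `nn.LSTM` call over the whole sequence, but it is the only way I can see to get the prediction back into the input.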

Or maybe a more suitable way is to apply a linear transformation to the previous output, Vy(t) + b, and add the result to the hidden state of the LSTM?
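A rough sketch of this hidden-state variant, again assuming a per-step loop over `nn.LSTMCell`. Here `feedback` is a hypothetical `nn.Linear` playing the role of V and b, and skipping the feedback term at the first step (where no previous output exists) is my own assumption.

```python
import torch
import torch.nn as nn


class HiddenFeedbackLSTM(nn.Module):
    """Adds V y(t) + b to the hidden state before processing the next time step."""

    def __init__(self, input_size, hidden_size, output_size=1):
        super().__init__()
        self.hidden_size = hidden_size
        self.cell = nn.LSTMCell(input_size, hidden_size)
        self.head = nn.Linear(hidden_size, output_size)
        # Hypothetical feedback layer: weight is V, bias is b.
        self.feedback = nn.Linear(output_size, hidden_size)

    def forward(self, x):
        # x: (batch, seq_len, input_size)
        batch, seq_len, _ = x.shape
        h = x.new_zeros(batch, self.hidden_size)
        c = x.new_zeros(batch, self.hidden_size)
        y_prev = None  # no previous output at the first step
        outputs = []
        for t in range(seq_len):
            if y_prev is not None:
                # Inject the transformed previous output into the hidden state.
                h = h + self.feedback(y_prev)
            h, c = self.cell(x[:, t, :], (h, c))
            y_prev = self.head(h)
            outputs.append(y_prev)
        # Stack the per-step predictions: (batch, seq_len, output_size)
        return torch.stack(outputs, dim=1)


hidden_model = HiddenFeedbackLSTM(input_size=3, hidden_size=8)
hidden_out = hidden_model(torch.randn(4, 20, 3))
```

I am not sure which of the two variants, if either, is the better choice.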

Any help would be appreciated.