torch.cuda.current_device() always returns 0

How can I print the device that is actually being used?

The device in use is the one returned by this function.

What makes you think it uses another one? How do you change the device to use?

@albanD

I have 4 GPUs, and I assign one training process to each of them.

So I set os.environ['CUDA_VISIBLE_DEVICES'], and I want to print the number of the GPU the process is actually running on.

CUDA_VISIBLE_DEVICES is the mask CUDA uses to decide which devices it exposes to the user program, which is PyTorch in this case. PyTorch has no reliable way to know about it.

Hi,

Within your application, GPU numbers always start at 0 and increase from there.

When you use CUDA_VISIBLE_DEVICES, you hide some devices so they won’t be numbered.

Say you have 4 GPUs: 0, 1, 2, 3.

And you run CUDA_VISIBLE_DEVICES=1,2 python some_code.py. Then the devices you will see within Python are devices 0 and 1. Using device 0 in your code will use device 1 in the global numbering, and using device 1 in your code will use device 2.

So in your case, if you always set CUDA_VISIBLE_DEVICES to a single device, the device id in your code will always be 0; that is expected. Unfortunately, there is no way to know the global numbering.
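PyTorch itself does not report the global numbering, but the process can read back the mask it was started with. Below is a minimal sketch of that idea, not an official API. The helper name `global_device_id` is hypothetical. It assumes every entry in CUDA_VISIBLE_DEVICES is valid, and the result matches nvidia-smi numbering only when CUDA_DEVICE_ORDER=PCI_BUS_ID is also set.

```python
import os


def global_device_id(local_index, visible=None):
    """Map a process-local CUDA index to its entry in CUDA_VISIBLE_DEVICES.

    Hypothetical helper for illustration. Entries are returned as strings
    because the mask may hold GPU UUIDs as well as integer indices.
    """
    if visible is None:
        visible = os.environ.get("CUDA_VISIBLE_DEVICES")
    if visible is None:
        # No mask set: local and global numbering coincide.
        return str(local_index)
    # Assumption: every entry is valid, so position i is local device i.
    entries = [e.strip() for e in visible.split(",") if e.strip()]
    return entries[local_index]


# The example from the post: CUDA_VISIBLE_DEVICES=1,2
print(global_device_id(0, "1,2"))
print(global_device_id(1, "1,2"))
```

In a training script, something like `global_device_id(torch.cuda.current_device())` would give the entry for the device currently in use, under the same assumptions.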

Hi,

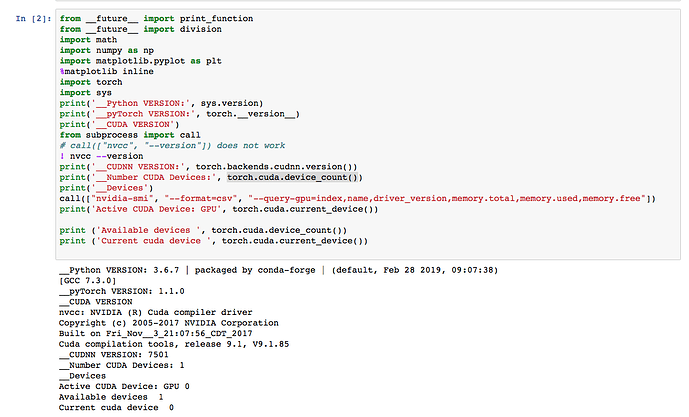

I am running into the same problem. Could you help me?

I have 8 cards, but PyTorch only detects the first one.

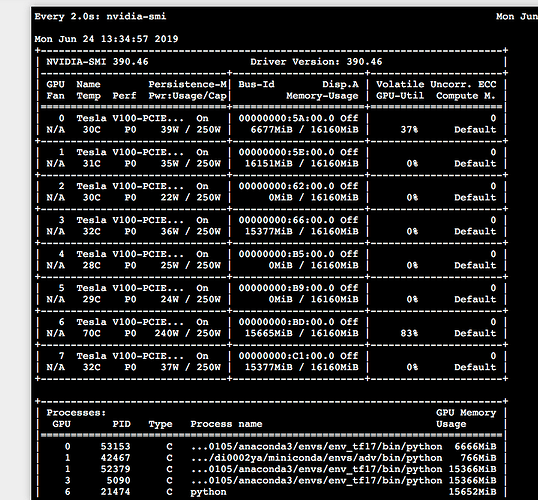

Do you see all 8 devices in the output of nvidia-smi?

Is CUDA_VISIBLE_DEVICES set?

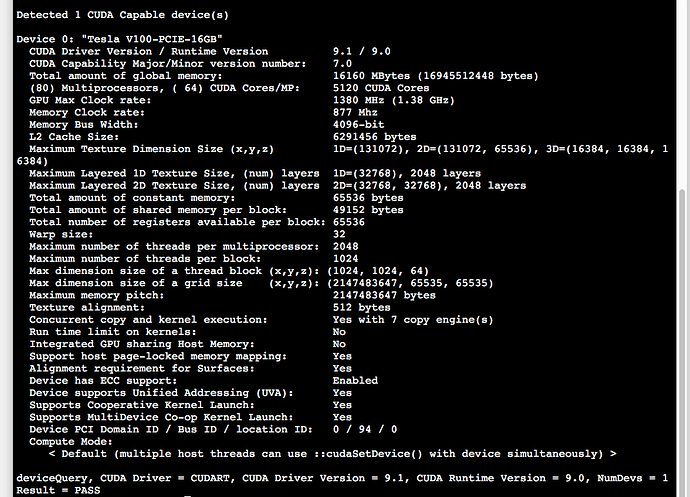

Yes. I see all the 8 devices.

CUDA_VISIBLE_DEVICES is not set?

In the .bashrc? No, it is not set.

Just run echo $CUDA_VISIBLE_DEVICES and verify.
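The same check can be done from inside the Python process, which is what matters if the variable is set by a launcher rather than the shell. This is a rough sketch; the function name `describe_visible_devices` is made up for illustration. It distinguishes an unset variable from an empty one, because an empty value hides every GPU.

```python
import os


def describe_visible_devices(env=None):
    """Summarize the CUDA_VISIBLE_DEVICES mask seen by this process."""
    env = os.environ if env is None else env
    value = env.get("CUDA_VISIBLE_DEVICES")
    if value is None:
        return "unset: CUDA exposes every device"
    if value.strip() == "":
        return "empty: CUDA exposes no devices"
    return f"set to {value!r}: only these devices are exposed"


print(describe_visible_devices())
```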

Hi Deepali,

I tried it, but it still failed. I also ran deviceQuery, and it detects only one device. Could this be due to the driver version?

Yes, I was just about to suggest running deviceQuery to check. So it looks like an issue with the system.

Shall I contact my admin directly?

Yes, that will be helpful.

Try setting os.environ['CUDA_VISIBLE_DEVICES'] before you import torch.
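A minimal sketch of that ordering follows. The helper `select_gpus` is hypothetical, not part of PyTorch. It sets the mask and warns if torch has already been imported, since a mask set after CUDA has been initialized is not picked up.

```python
import os
import sys
import warnings


def select_gpus(ids):
    """Set CUDA_VISIBLE_DEVICES for this process and return the mask string."""
    mask = ",".join(str(i) for i in ids)
    if "torch" in sys.modules:
        # Assumption: the mask may still apply if CUDA is not initialized yet,
        # but setting it before the import is the safe order.
        warnings.warn("torch is already imported; the mask may not take effect")
    os.environ["CUDA_VISIBLE_DEVICES"] = mask
    return mask


select_gpus([1, 2])

# Only now import torch, so CUDA is initialized with the mask in place:
# import torch
# print(torch.cuda.device_count())
```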