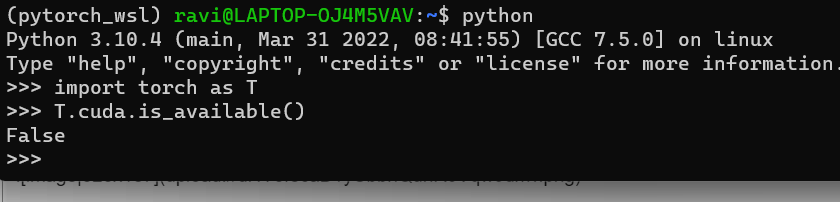

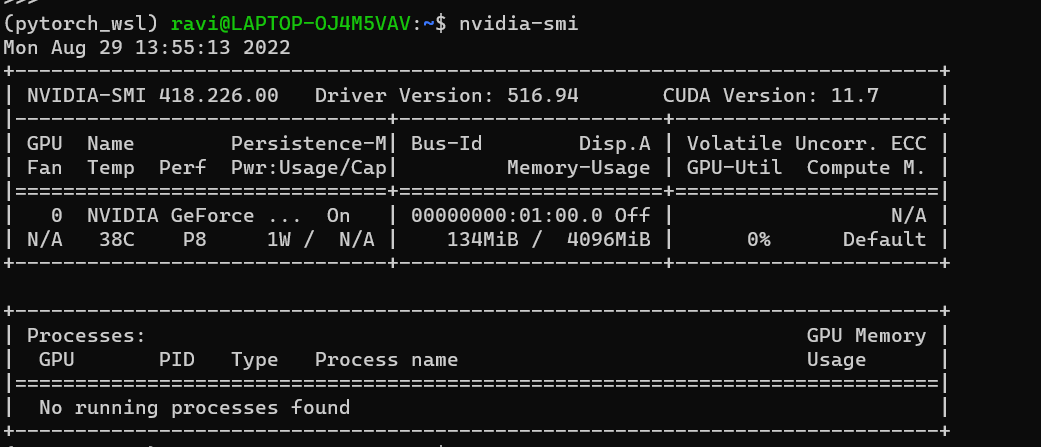

I’ve been trying to use my GPU (the laptop version of the GTX 1650 Ti in a Lenovo IdeaPad Gaming 3 81Y4015PCK) in PyTorch, but when I check for CUDA support with `torch.cuda.is_available()`, it returns False. I’m running Windows 10 with the latest NVIDIA driver (installed a week ago); nvidia-smi reports driver version 471.41 and CUDA version 11.4. PyTorch is installed using pip, as shown on PyTorch’s website:

pip install torch==1.9.0+cu111 torchvision==0.10.0+cu111 torchaudio===0.9.0 -f https://download.pytorch.org/whl/torch_stable.html

The weird thing is that I also tried Anaconda, and that didn’t work either. I tried installing different versions of PyTorch and CUDA (10.2 and 11.1). I also tried booting live images of Ubuntu, Pop!_OS (with the preinstalled NVIDIA driver) and some other Linux distros and installing torch there, again with no success. I would’ve thought the GPU was dead, but all my games run perfectly on that same GPU. Any clue what the problem might be?
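For anyone reproducing this, here is a minimal sketch of how the driver side could be checked from Python, independently of PyTorch. It assumes `nvidia-smi` is on the PATH; the helper name `query_nvidia_smi` is made up for illustration and is not part of any library.

```python
import shutil
import subprocess


def query_nvidia_smi():
    """Return a list of (gpu_name, driver_version) tuples.

    Returns None if nvidia-smi is missing or fails, which would suggest
    the driver itself is not visible to the operating system.
    """
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return None
    try:
        result = subprocess.run(
            [exe, "--query-gpu=name,driver_version", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            timeout=10,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    if result.returncode != 0:
        return None
    gpus = []
    for line in result.stdout.splitlines():
        if line.strip():
            # Split on the last comma: the driver version never contains one.
            name, _, driver = line.rpartition(",")
            gpus.append((name.strip(), driver.strip()))
    return gpus


if __name__ == "__main__":
    print(query_nvidia_smi())
```

If this lists the GPU while `torch.cuda.is_available()` still returns False, the driver is visible to the system and the problem is more likely on the PyTorch side.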

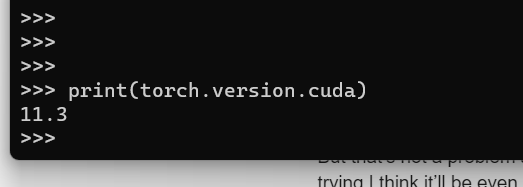

Could you check the reported CUDA version via `print(torch.version.cuda)` and make sure the specified binary was indeed installed?
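A rough sketch of that check, gathered into one place. The function name `cuda_report` and the dictionary keys are hypothetical; the `torch` attributes it reads are standard ones. On a CPU-only build, `torch.version.cuda` is `None`.

```python
def cuda_report():
    """Collect basic facts about the installed PyTorch build and CUDA visibility."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed in this environment.
        return {"torch_installed": False}

    report = {
        "torch_installed": True,
        # For pip wheels, typically carries a suffix such as "+cu111" or "+cpu".
        "torch_version": torch.__version__,
        # CUDA version the binary was built with; None for CPU-only builds.
        "built_with_cuda": torch.version.cuda,
        # Whether PyTorch can actually reach a CUDA device at runtime.
        "cuda_available": torch.cuda.is_available(),
    }
    if report["cuda_available"]:
        report["device_name"] = torch.cuda.get_device_name(0)
    return report


if __name__ == "__main__":
    for key, value in cuda_report().items():
        print(f"{key}: {value}")
```

If `built_with_cuda` shows a version but `cuda_available` is False, the right binary is installed and the issue lies with the driver or the device being visible to the process.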

If that’s the case, I would recommend checking your notebook for power saving options, which could disable the GPU, and forcing it to run in a “performance” mode.

`torch.version.cuda` really does return ‘11.1’, so nothing weird there. Thanks for the power saving mode idea, that hadn’t occurred to me. I looked in the BIOS, but I didn’t find anything related to the GPU at all. The only option was something about thermal mode; I switched it to power, but nothing changed. After all of this, I think PyTorch is simply unable to work with this specific GPU model or hardware combination. But that’s not a problem anymore: a few days ago I found out Google Colab is a thing, and after trying it I think it’ll be even better than using my laptop, since their GPUs are much more powerful (16GB of VRAM compared to 4GB on my device). But thank you for your help.

I have done what you suggested