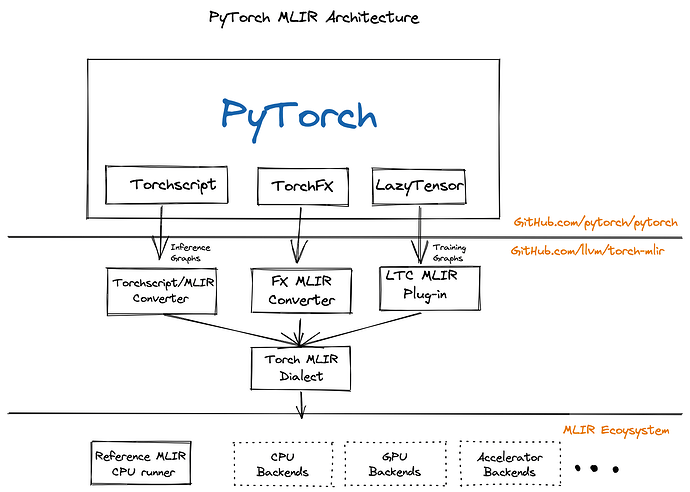

The Torch-MLIR project aims to provide first-class compiler support from the PyTorch ecosystem to the MLIR ecosystem.

MLIR

The MLIR project is a novel approach to building reusable and extensible compiler infrastructure. MLIR aims to address software fragmentation, improve compilation for heterogeneous hardware, significantly reduce the cost of building domain-specific compilers, and aid in connecting existing compilers together.

Torch-MLIR

Multiple vendors use MLIR as the middle layer, mapping from frameworks like PyTorch, JAX, and TensorFlow into MLIR and then progressively lowering down to their target hardware. We have seen half a dozen custom lowerings from PyTorch to MLIR. Having canonical lowerings from the PyTorch ecosystem to the MLIR ecosystem would provide much-needed relief to hardware vendors, letting them focus on their unique value rather than implementing yet another PyTorch frontend for MLIR. This is similar to how hardware vendors today add an LLVM target instead of each one also implementing the Clang/C++ frontend.

All the roads from PyTorch to Torch MLIR Dialect

We have a few paths to lower down to the Torch MLIR Dialect.

- TorchScript

This is the most tested path from PyTorch to the Torch MLIR dialect.

- TorchFX

This provides a path from TorchFX down to MLIR. It is a functional prototype.

- Lazy Tensor Core (based on the lazy_tensor_staging branch)

This path provides the upcoming LTC capture path. It is based on an unstable development branch, but it is the closest way for you to adapt any existing torch_xla derivatives.

- “ACAP” (deprecated torch_xla-based capture, mentioned here for completeness)
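To make the TorchScript and TorchFX entry points concrete, the sketch below shows only the PyTorch-side capture step, using the standard `torch.jit.script` and `torch.fx.symbolic_trace` APIs. The Torch-MLIR importer that then turns these graphs into the Torch dialect is deliberately not shown, since its exact API is not described here. `TinyModel` is a hypothetical toy module used purely for illustration.

```python
import torch
import torch.fx


class TinyModel(torch.nn.Module):
    """Hypothetical toy model used only to illustrate graph capture."""

    def __init__(self) -> None:
        super().__init__()
        self.linear = torch.nn.Linear(4, 2)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return torch.relu(self.linear(x))


model = TinyModel().eval()

# TorchScript path: compile the module into a TorchScript graph (IR).
scripted = torch.jit.script(model)
print(scripted.graph)

# TorchFX path: symbolically trace the module into an FX GraphModule.
fx_module = torch.fx.symbolic_trace(model)
print(fx_module.graph)

# Both captured forms remain executable and should agree with eager mode.
x = torch.randn(3, 4)
assert torch.allclose(scripted(x), model(x))
assert torch.allclose(fx_module(x), model(x))
```

Either captured graph is the kind of input a frontend importer consumes; the choice between them mainly trades maturity (TorchScript) against a simpler, Python-level graph (FX).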

The torch-mlir project includes a few examples of lowering from PyTorch to MLIR via each path and using “mlir-cpu-runner” to target a CPU backend. This is just a starting point: you can import this project into your larger MLIR projects to continue lowering to GPUs and other accelerators.

We are looking for specific feedback on two fronts:

Vendors: If you currently use your own custom lowerings to get to MLIR, is there anything else you would like to see? Please open GitHub issues in torch-mlir.

PyTorch dev community:

Here is a PR to integrate Torch-MLIR into PyTorch behind an optional build flag. It includes samples to export a ResNet model, and we plan to add BERT and Mask R-CNN examples next. Please let us know what you think.

Also, any feedback on the various paths down to MLIR (TS, FX, LTC->TS, LTC->MLIR, etc.) would be great.

Anush

(For the torch-mlir team, nod.ai team and the others who contributed to this effort.)