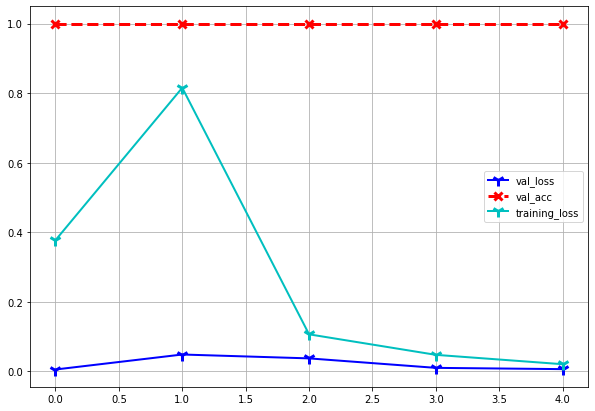

Every time I train, my training loss spikes during the 2nd epoch and then drops sharply.

I want to know why that happens. Is it a bad thing?

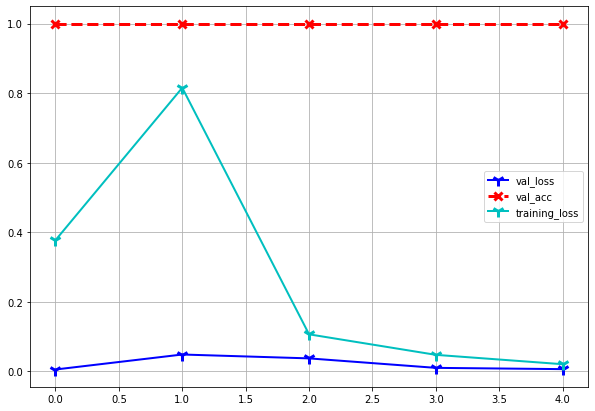

This could have various causes. One is a learning rate that is too high, which can cause some divergence at the beginning of the epoch and then let your model converge again later (as your low validation loss suggests).
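To see why a too-large learning rate shows up as a loss spike, here is a toy sketch on a simple problem rather than your model: plain gradient descent on the quadratic `f(x) = (a/2) * x**2`, whose gradient is `a * x`. Each step multiplies `x` by `(1 - lr * a)`, so the loss only decreases when `0 < lr < 2/a`. Above that threshold every step overshoots the minimum and the loss grows. The function names and the values `a=1`, `lr=0.5` and `lr=2.5` are arbitrary choices for illustration.

```python
# Toy illustration (not a real training loop): gradient descent on
# f(x) = (a / 2) * x**2. The gradient is a * x, so one step gives
# x_new = x * (1 - lr * a). The loss shrinks only if |1 - lr * a| < 1,
# i.e. 0 < lr < 2 / a.


def quadratic_loss(x: float, a: float) -> float:
    """Loss f(x) = (a / 2) * x^2 for curvature a."""
    return 0.5 * a * x * x


def run_gd(lr: float, a: float = 1.0, x0: float = 1.0, steps: int = 5) -> list[float]:
    """Run plain gradient descent and return the loss recorded after each step."""
    x = x0
    losses = []
    for _ in range(steps):
        grad = a * x       # derivative of (a / 2) * x^2
        x = x - lr * grad  # vanilla gradient-descent update
        losses.append(quadratic_loss(x, a))
    return losses


small = run_gd(lr=0.5)  # 0.5 < 2 / a = 2.0: each step moves closer to the minimum
large = run_gd(lr=2.5)  # 2.5 > 2.0: each step overshoots further than the last

print("lr=0.5:", small)
print("lr=2.5:", large)
```

This only shows the divergence half of the story. A real network's loss surface has many curvatures that change during training, so there is no single threshold, but the mechanism is the same: a step that is too large for the local curvature increases the loss instead of decreasing it. Lowering the learning rate is the first thing to try.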

You could check the predictions during training and log the loss for each batch to isolate the spike further, e.g. to a “bad” sample.
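One way to do the per-batch check is to record `loss.item()` after every optimizer step (keeping the epoch and batch index alongside it), then flag batches whose loss jumps well above the recent trend. Below is a minimal sketch in plain Python, assuming the per-batch losses have already been collected into a list. `find_loss_spikes`, `window` and `factor` are hypothetical names and thresholds, not a library API.

```python
import statistics


def find_loss_spikes(batch_losses: list[float], window: int = 20, factor: float = 3.0) -> list[int]:
    """Return indices of batches whose loss exceeds `factor` times the
    median of the preceding `window` batch losses."""
    spikes = []
    for i, loss in enumerate(batch_losses):
        history = batch_losses[max(0, i - window):i]
        if not history:
            continue  # nothing to compare the first batch against
        baseline = statistics.median(history)  # robust to earlier spikes
        if loss > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical per-batch losses with one obvious spike at index 4.
losses = [1.0, 0.9, 0.85, 0.8, 4.2, 0.78, 0.75]
print(find_loss_spikes(losses, window=3))  # → [4]
```

Using the median of the preceding window keeps a single spike from inflating the baseline for the batches that follow it. For a flagged batch you can then recompute the loss per sample (e.g. PyTorch's `nn.CrossEntropyLoss(reduction='none')` returns one loss per element instead of the mean) to see whether one sample dominates.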