bolt25

June 18, 2020, 11:17am

1

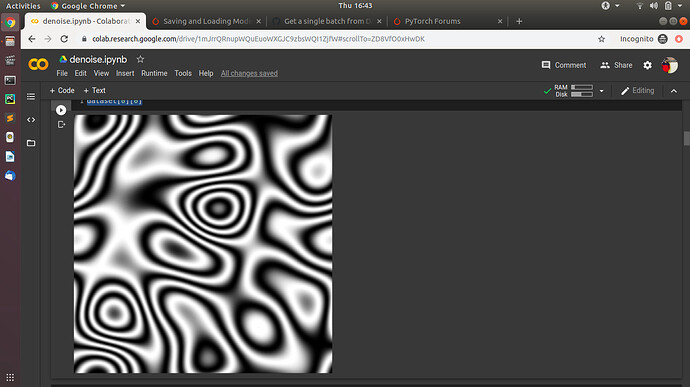

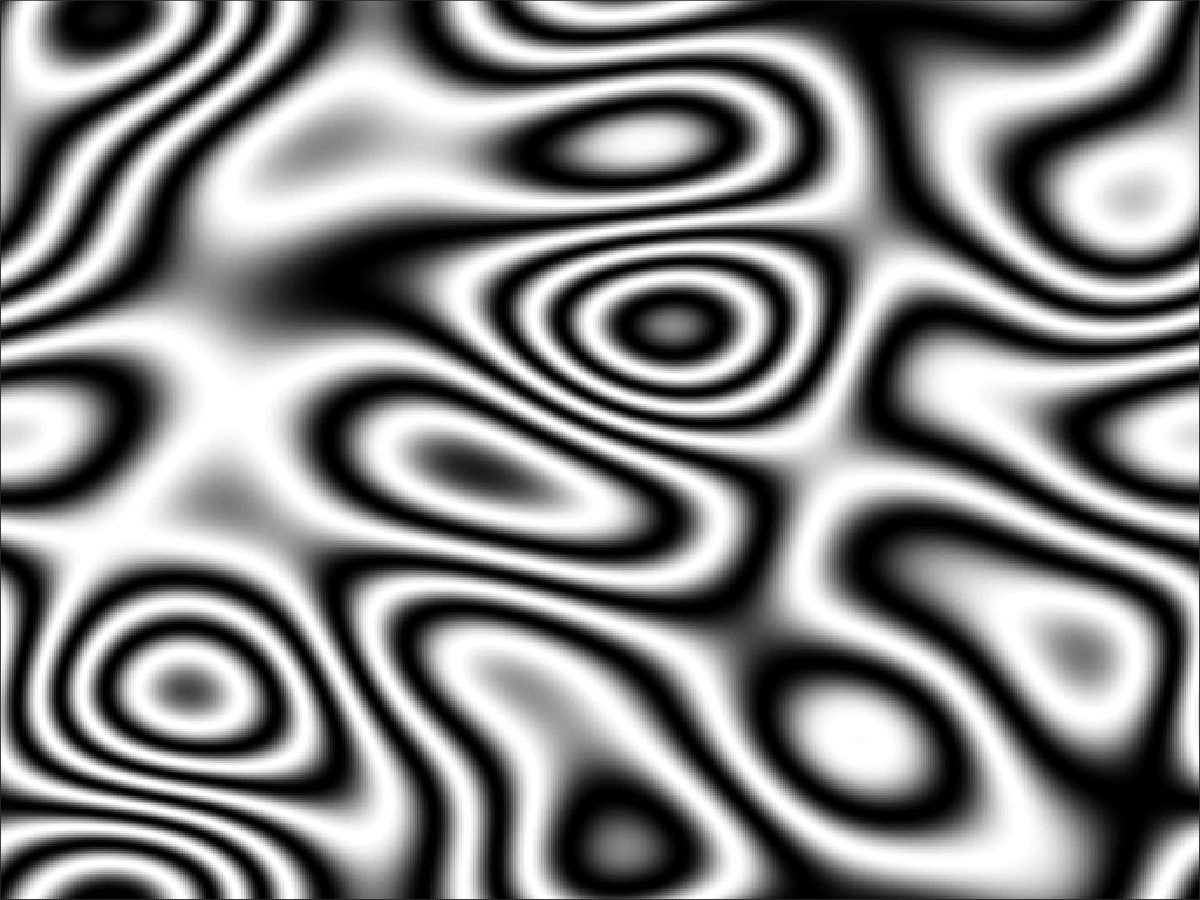

I'm working on fringe pattern denoising, and my fringe patterns look like this:

But when I apply the ToTensor() transform

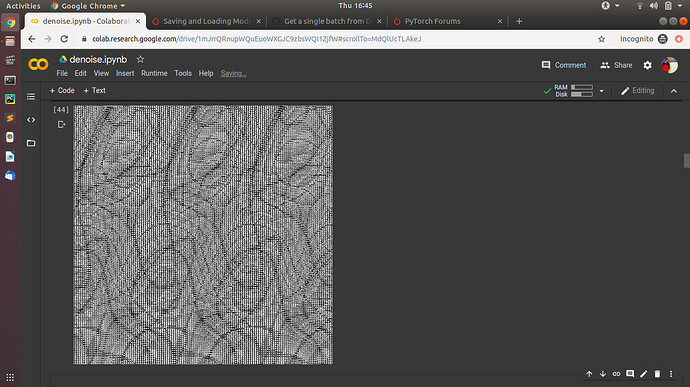

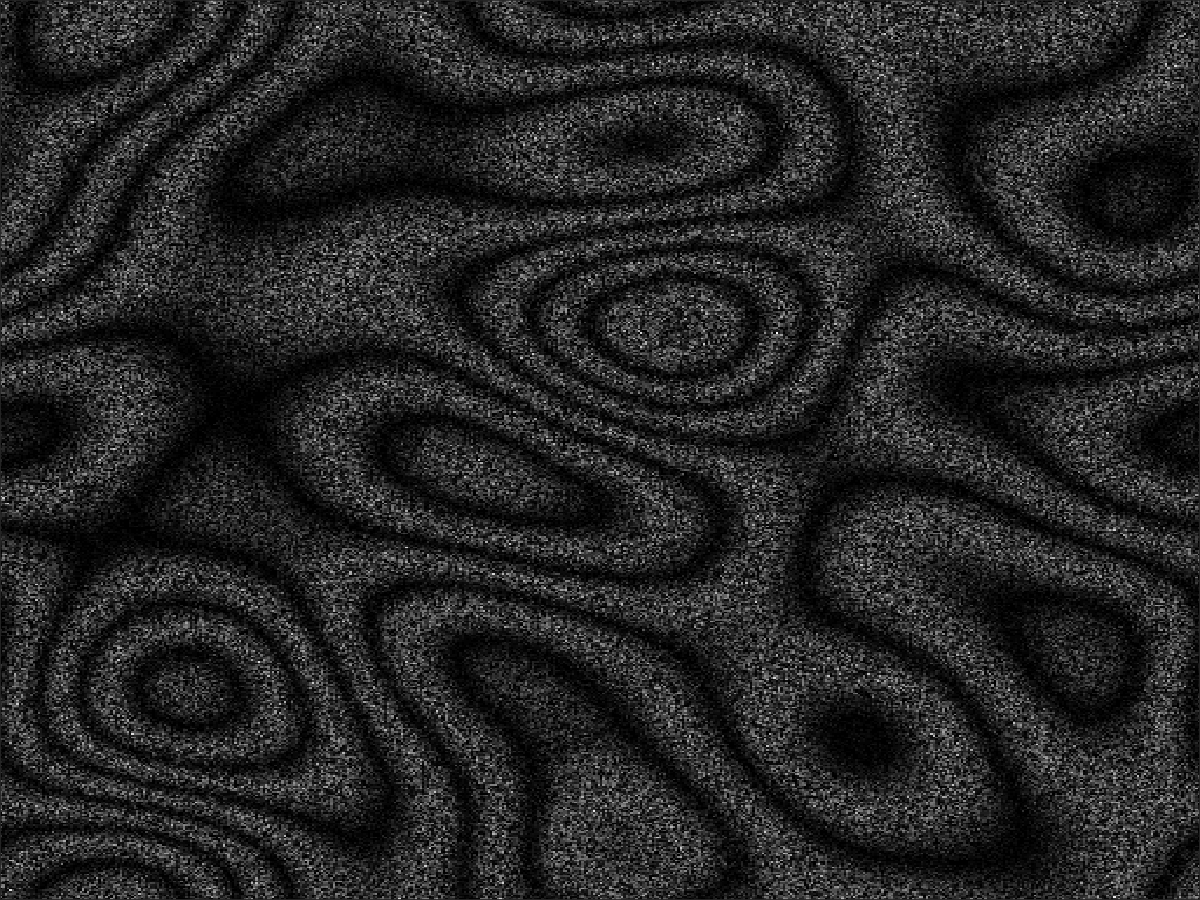

and then print the image, it looks so distorted that my model isn't learning anything:

Any suggestions on what I can do?

Nikronic

June 18, 2020, 7:02pm

2

Hi,

Can you share one of the images in your dataset and also the method to visualize transformed images?

It would also be great if you could combine your questions into a single topic, as they are highly correlated.

I have a dataset which needs to be denoised. When I looked at the images from my dataloader, which are fed into the network, I saw that they are very different from the images in my dataset. The only transformations I used are resize and ToTensor().

Is it because of ToTensor()? Is it normalizing my image and is that causing my model to learn nothing?

Can I stop this?
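For context on the scaling question: torchvision documents that ToTensor() converts a uint8 image with values in [0, 255] to a float tensor in [0.0, 1.0] with the channel axis first. It rescales but does not standardize by mean and std. The snippet below is only a NumPy sketch that mimics that documented scaling for a single-channel uint8 image. The names `to_tensor_like` and `from_tensor_like` and the synthetic `fringe` array are hypothetical, not part of torchvision, and the sketch is meant to show that the scaling is reversible, not to replace the real transform.

```python
import numpy as np


def to_tensor_like(img_uint8: np.ndarray) -> np.ndarray:
    """Mimic ToTensor's documented scaling for a uint8 H x W image (assumption)."""
    if img_uint8.dtype != np.uint8:
        raise TypeError("this sketch only covers uint8 input")
    scaled = img_uint8.astype(np.float32) / 255.0  # [0, 255] -> [0.0, 1.0]
    return scaled[np.newaxis, ...]                 # add channel axis: (1, H, W)


def from_tensor_like(chw: np.ndarray) -> np.ndarray:
    """Undo the scaling: (1, H, W) float in [0, 1] -> (H, W) uint8."""
    restored = np.rint(chw[0] * 255.0)
    return np.clip(restored, 0, 255).astype(np.uint8)


# Synthetic stand-in for a 512 x 512 fringe pattern (not real data).
fringe = (np.arange(512 * 512) % 256).astype(np.uint8).reshape(512, 512)

scaled = to_tensor_like(fringe)
restored = from_tensor_like(scaled)
```

If the round trip returns the original pixels, as it should here, then the scaling alone loses no information, and a badly distorted picture is more likely to come from the way the tensor is converted back for display.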

Bests

bolt25

June 19, 2020, 3:36am

3

In the images from the question above, the upper image is from the dataset and the lower image is the result after the transform.

Nikronic

June 19, 2020, 4:37am

4

I meant the original image file so I can test a few things.

bolt25

June 19, 2020, 5:17am

5

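    from PIL import Image

    def dispImageFromModel(tensor):
        # Move the tensor to the CPU, detach it from the graph, convert to NumPy
        img = tensor.cpu().detach().numpy()
        # Assumes a single 512 x 512 image
        img = img.reshape((512, 512))
        # Build a YCbCr image from the array and keep only the first channel
        img, cb, cr = Image.fromarray(img, 'YCbCr').split()
        return img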
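A note on the display step, offered as an assumption since the thread does not confirm the cause: the array here is a float array, and passing it to `Image.fromarray` with the mode `'YCbCr'` may make Pillow read the raw float bytes as pixel data instead of the values themselves. Below is a minimal sketch of an alternative that converts a single-channel float image in [0, 1] to an 8-bit grayscale image before display. The helper name `float_image_to_pil` and the `demo` array are hypothetical, and the [0, 1] range is assumed from the ToTensor() transform mentioned above.

```python
import numpy as np
from PIL import Image


def float_image_to_pil(img: np.ndarray) -> Image.Image:
    """Convert a single-channel float image in [0, 1] to an 8-bit grayscale PIL image."""
    arr = np.asarray(img, dtype=np.float32).squeeze()  # drop axes of length 1
    if arr.ndim != 2:
        raise ValueError("expected a single-channel image")
    arr = np.clip(arr, 0.0, 1.0)                       # guard against out-of-range outputs
    as_uint8 = np.rint(arr * 255.0).astype(np.uint8)   # [0.0, 1.0] -> [0, 255]
    return Image.fromarray(as_uint8)                   # a 2-D uint8 array becomes mode "L"


# Synthetic gradient standing in for a model output of shape (1, 512, 512).
demo = np.linspace(0.0, 1.0, 512 * 512, dtype=np.float32).reshape(1, 512, 512)
pil_img = float_image_to_pil(demo)

# With a PyTorch tensor, the call would look like:
# pil_img = float_image_to_pil(tensor.detach().cpu().numpy())
```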

bolt25

June 19, 2020, 6:50am

6

For anyone who is looking for answers!

Thank you so so much!! This worked like a charm!!

Thank you! @Nikronic
