Hi,

This post is not specifically about a pytorch related issue but a general computer vision problem and I hope this is the correct place for such a discussion.

I am trying to classify a rather messy dataset of images. The images are taken using mobile phones and scanner scans from various sources so there is some variation in the dataset.

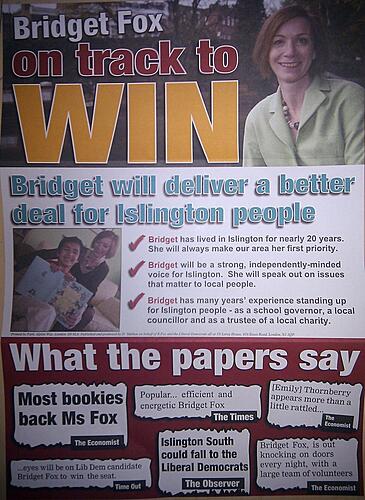

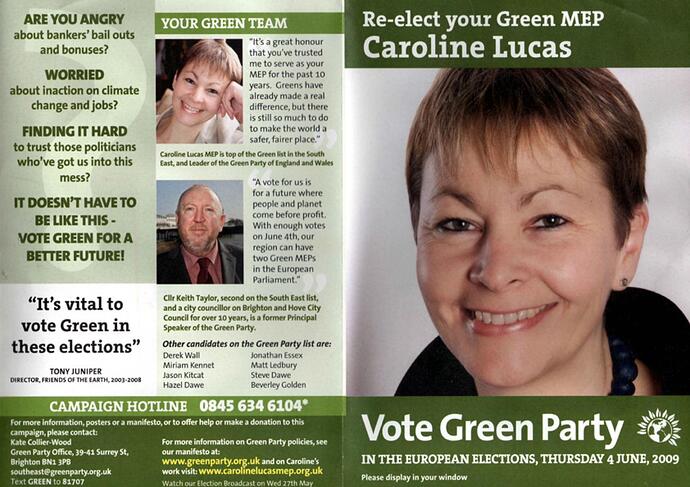

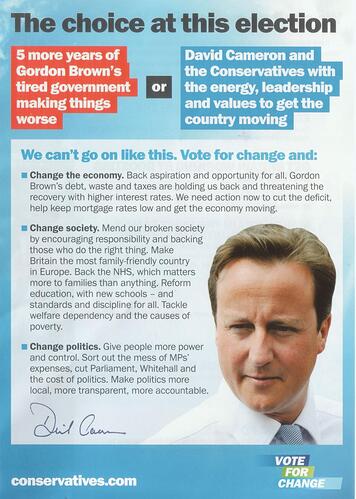

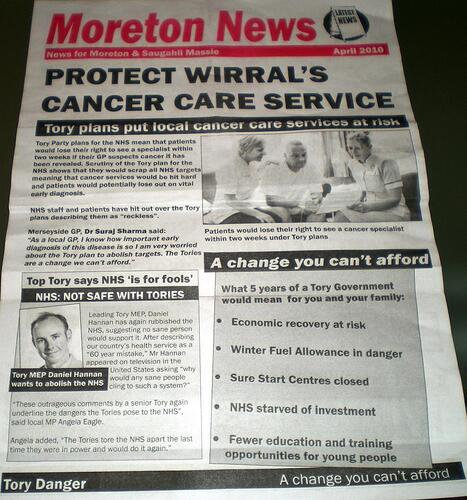

Its a dataset of election party flyers/leaflets, there are about 5 classes and each class/label has about 3000 images associated with it so there is enough variation here. Here are a couple examples of what the images look like:

As a starting point, I used a

ResNet 101 network to learn this dataset and for preprocessing all I did was resize the images to 200 x 200 using interpolation.

But after training for over 24 hours the validation/test accuracy is about 54%, so the network did not lean much and is just random guessing.

Is it possible to classify such a dataset? Any tips on how to pre-process these images to improve result?

Thanks