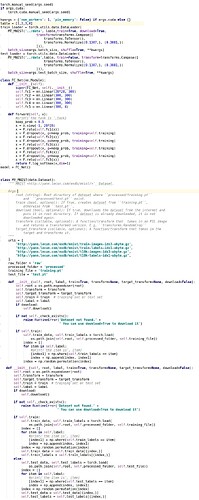

I implemented My_MINST to select only the data belonging to the specified classes.

It takes a label argument. Setting it to [0,1,2,3] means I want classes (digits in MNIST) 0, 1, 2, and 3.

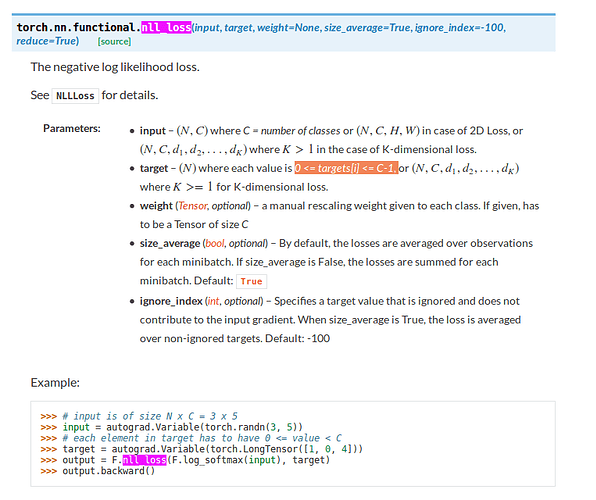

It works fine. But when I set the label to [1,2,3,4], I get the error shown below.

With the label set to [1,2,3,4], I have to change my network to self.fc5 = nn.Linear(300, 5) to make it work.

It seems that PyTorch assumes class labels start from 0 by default.
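
One possible way around this, as a minimal sketch: classification losses such as nn.CrossEntropyLoss expect targets in the range [0, C-1] for a layer with C outputs, so label 4 is out of range for nn.Linear(300, 4). Remapping the selected digits to 0..3 before training would keep a 4-output layer. The helper names `build_label_map` and `remap_targets` are hypothetical and not part of My_MINST or PyTorch, and the sample targets are made up for illustration.

```python
def build_label_map(selected_labels):
    """Map each selected original label to a contiguous 0-based class index."""
    return {orig: idx for idx, orig in enumerate(sorted(selected_labels))}


def remap_targets(targets, label_map):
    """Replace every original label with its 0-based class index."""
    return [label_map[t] for t in targets]


# Hypothetical example: digits 1..4 become classes 0..3.
label_map = build_label_map([1, 2, 3, 4])
remapped = remap_targets([1, 4, 2, 3, 4], label_map)
print(label_map)
print(remapped)
```

The same mapping can be inverted at prediction time to turn a predicted class index back into the original digit.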

    THCudaCheck FAIL file=/pytorch/torch/lib/THC/THCTensorCopy.cu line=100 error=59 : device-side assert triggered
    Traceback (most recent call last):
      File "MINST.py", line 132, in <module>
        train(epoch)
      File "MINST.py", line 104, in train
        loss.backward()
      File "/home/shixian/.local/lib/python3.5/site-packages/torch/autograd/variable.py", line 167, in backward
        torch.autograd.backward(self, gradient, retain_graph, create_graph, retain_variables)
      File "/home/shixian/.local/lib/python3.5/site-packages/torch/autograd/__init__.py", line 99, in backward
        variables, grad_variables, retain_graph)
    RuntimeError: cuda runtime error (59) : device-side assert triggered at /pytorch/torch/lib/THC/THCTensorCopy.cu:100
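
CUDA reports device-side asserts asynchronously, so the traceback can point at loss.backward() rather than at the line that actually caused the problem. As a rough sketch, a range check on the labels (taken as plain Python integers) before computing the loss would surface an out-of-range target with a readable message. `check_targets` is a hypothetical helper, not a PyTorch function.

```python
def check_targets(targets, num_classes):
    """Raise a readable error if any label falls outside [0, num_classes - 1]."""
    bad = sorted({t for t in targets if not 0 <= t < num_classes})
    if bad:
        raise ValueError(
            "labels {} are outside the valid range [0, {}]".format(bad, num_classes - 1)
        )


# Labels 0..3 fit a 4-output layer; label 4 does not.
check_targets([0, 1, 2, 3], num_classes=4)
try:
    check_targets([1, 2, 3, 4], num_classes=4)
except ValueError as err:
    print(err)
```

Running the same training step on the CPU instead of the GPU usually gives a clearer error message for this kind of problem as well.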

Here is a screenshot of my code.

In My_MINST, I changed the init function