The following code

def test_add(x):

return torch.add(x, 1)

module = export(test_add, (torch.rand(4, 3),))

print(module)

generates an aten.add.Tensor operation, instead of an aten.add.Scalar

ExportedProgram:

class GraphModule(torch.nn.Module):

def forward(self, arg0_1: "f32[4, 3]"):

add: "f32[4, 3]" = torch.ops.aten.add.Tensor(arg0_1, 1); arg0_1 = None

return (add,)

Graph signature: ExportGraphSignature(input_specs=[InputSpec(kind=<InputKind.USER_INPUT: 1>, arg=TensorArgument(name='arg0_1'), target=None)], output_specs=[OutputSpec(kind=<OutputKind.USER_OUTPUT: 1>, arg=TensorArgument(name='add'), target=None)])

Range constraints: {}

Equality constraints: []

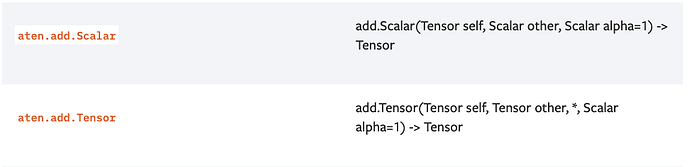

This in spite of the fact that aten.add.Tensor appears to take two tensors, as per its documentation:

Two questions:

- Under what conditions does

torch.exportgeneate anaten.add.Scalar? - More generally, if the type information in the IR documentation IRs — PyTorch main documentation doesn’t accurately capture the types that can appear in ATen operations, is there another source that does?