I am trying to use Deep Image Prior to denoise an image. When I change the network to texture_nets, I get the error shown below. Any help would be appreciated.

code:

!git clone https://github.com/DmitryUlyanov/deep-image-prior

!mv deep-image-prior/* ./

from __future__ import print_function

import matplotlib.pyplot as plt

%matplotlib inline

import os

#os.environ['CUDA_VISIBLE_DEVICES'] = '3'

import numpy as np

from models import *

import torch

import torch.optim

#evaluation metric

from skimage.measure import compare_psnr

from utils.denoising_utils import *

torch.backends.cudnn.enabled = True

torch.backends.cudnn.benchmark =True

dtype = torch.cuda.FloatTensor

imsize =-1

PLOT = True

sigma = 25

sigma_ = sigma/255.

fname = '/content/dog3.jpg' #load image

# Add synthetic noise

img_pil = crop_image(get_image(fname, imsize)[0], d=32)

img_np = pil_to_np(img_pil) # convert PIL image to a NumPy array, H x W x C -> C x H x W

img_noisy_pil, img_noisy_np = get_noisy_image(img_np, sigma_) # adding noise to original image

if PLOT:

    print('Original Image: ')

    plot_image_grid([img_np], 1, 8);

    print('\nNoised Image: ')

    plot_image_grid([img_noisy_np], 1, 8);

INPUT = 'noise' # 'meshgrid' get_noise function

pad = 'reflection'

OPT_OVER = 'net' # 'net,input'

reg_noise_std = 1./30. # set to 1./20. for sigma=50

LR = 0.01

OPTIMIZER='adam' # 'LBFGS'

show_every = 100

exp_weight=0.99

num_iter = 1000

input_depth = 3

figsize = 4

net = get_net(input_depth, 'texture_nets', pad, upsample_mode='bilinear').type(dtype)

net_input = get_noise(input_depth, INPUT, (img_pil.size[1], img_pil.size[0])).type(dtype).detach()

# Compute number of parameters

s = sum([np.prod(list(p.size())) for p in net.parameters()]);

print ('Number of params: %d' % s)

# Loss

mse = torch.nn.MSELoss().type(dtype)

img_noisy_torch = np_to_torch(img_noisy_np).type(dtype)

net_input_saved = net_input.detach().clone()

noise = net_input.detach().clone()

out_avg = None

last_net = None

psrn_noisy_last = 0

loss = []

i = 0

def closure():

    global i, out_avg, psrn_noisy_last, last_net, net_input, loss

    if reg_noise_std > 0:

        net_input = net_input_saved + (noise.normal_() * reg_noise_std) # perturb the input to the network

    out = net(net_input)

    # Smoothing

    if out_avg is None:

        out_avg = out.detach()

    else:

        out_avg = out_avg * exp_weight + out.detach() * (1 - exp_weight) # exponential moving average of the network output

    total_loss = mse(out, img_noisy_torch)

    total_loss.backward()

    loss.append(total_loss.item())

    # calculating PSNR

    psrn_noisy = compare_psnr(img_noisy_np, out.detach().cpu().numpy()[0]) # PSNR between the output image and the noisy image

    psrn_gt = compare_psnr(img_np, out.detach().cpu().numpy()[0]) # PSNR between the output image and the original image

    psrn_gt_sm = compare_psnr(img_np, out_avg.detach().cpu().numpy()[0]) # PSNR between the averaged output and the original image

    if PLOT and i % show_every == 0:

        out_np = torch_to_np(out)

        # plot the output image next to the averaged output

        print(f'\n\nAfter {i} iterations: ')

        print ('Iteration %05d Loss %f PSNR_noisy: %f PSRN_gt: %f PSNR_gt_sm: %f' % (i, total_loss.item(), psrn_noisy, psrn_gt, psrn_gt_sm), '\r', end='\n')

        plot_image_grid([np.clip(out_np, 0, 1), np.clip(torch_to_np(out_avg), 0, 1)], factor=figsize, nrow=1)

    # Backtracking

    if i % show_every:

        if psrn_noisy - psrn_noisy_last < -5:

            print('Falling back to previous checkpoint.')

            for new_param, net_param in zip(last_net, net.parameters()):

                net_param.data.copy_(new_param.cuda())

            return total_loss*0

        else:

            last_net = [x.detach().cpu() for x in net.parameters()]

            psrn_noisy_last = psrn_noisy

    i += 1

    return total_loss

p = get_params(OPT_OVER, net, net_input)

optimize(OPTIMIZER, p, closure, LR, num_iter)

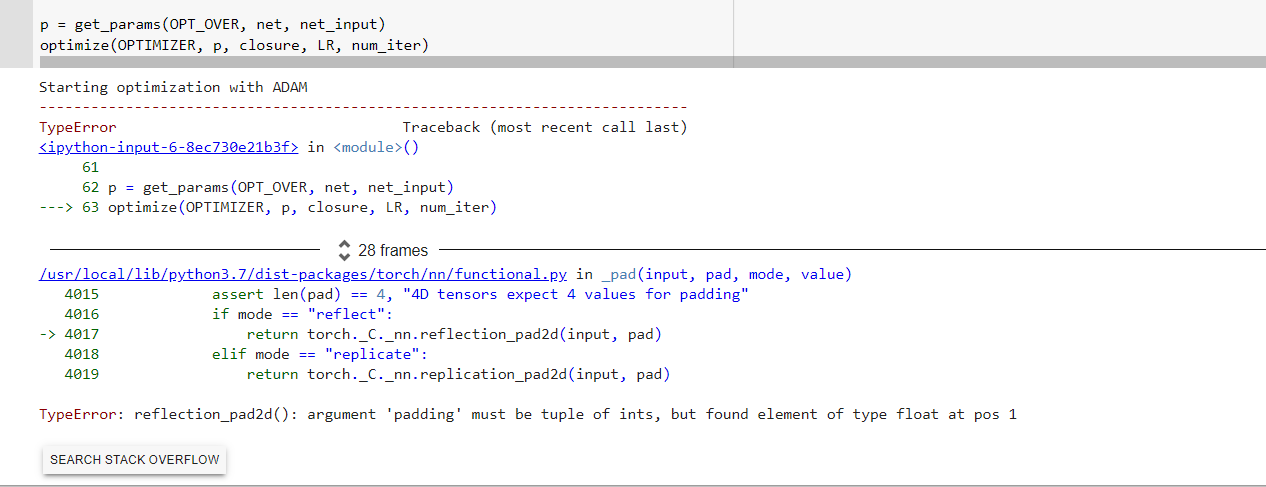

Starting optimization with ADAM

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-6-8ec730e21b3f> in <module>()

61

62 p = get_params(OPT_OVER, net, net_input)

---> 63 optimize(OPTIMIZER, p, closure, LR, num_iter)

28 frames

/usr/local/lib/python3.7/dist-packages/torch/nn/functional.py in _pad(input, pad, mode, value)

4015 assert len(pad) == 4, "4D tensors expect 4 values for padding"

4016 if mode == "reflect":

-> 4017 return torch._C._nn.reflection_pad2d(input, pad)

4018 elif mode == "replicate":

4019 return torch._C._nn.replication_pad2d(input, pad)

TypeError: reflection_pad2d(): argument 'padding' must be tuple of ints, but found element of type float at pos 1
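
The message says that one of the padding values reaching reflection_pad2d is a float rather than an int. Where that float is produced is not visible in the traceback above (28 frames are hidden), so the following is only a minimal sketch under an assumption: that the padding is computed somewhere with true division, for example (kernel_size - 1) / 2, which yields a float in Python 3. The helper names same_padding and as_int_padding are hypothetical and are not part of deep-image-prior or PyTorch.

```python
# Hypothetical helpers for illustration only; not part of deep-image-prior or PyTorch.


def same_padding(kernel_size):
    """Return the per-side padding for an odd kernel size as an int."""
    if kernel_size % 2 == 0:
        raise ValueError("expected an odd kernel size")
    # Floor division keeps the result an int.
    # (kernel_size - 1) / 2 would give a float in Python 3.
    return (kernel_size - 1) // 2


def as_int_padding(pad):
    """Convert a sequence of padding values to a tuple of ints.

    Raises ValueError for values that are not whole numbers,
    instead of silently truncating them.
    """
    result = []
    for value in pad:
        if int(value) != value:
            raise ValueError(f"padding value {value!r} is not a whole number")
        result.append(int(value))
    return tuple(result)


if __name__ == "__main__":
    print(type((3 - 1) / 2).__name__)        # float
    print(same_padding(3))                   # 1
    print(as_int_padding((1, 1.0, 2.0, 2)))  # (1, 1, 2, 2)
```

If the assumption holds, the fix would be to make sure the value passed as padding is built with // or wrapped in int() at the place where it is computed, so that every element of the padding tuple is an int.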