I am trying to build a shared library that I can call from Python scripts using ctypes. Everything worked without CUDA support, but I have not managed to make it work on the GPU. Below is a minimal example.

I downloaded libtorch-win-shared-with-deps-1.13.1+cu117 from pytorch.org and unzipped it to C:\. I then used the following CMake file to build both an executable and a shared library:

    cmake_minimum_required(VERSION 3.0 FATAL_ERROR)
    list(APPEND CMAKE_PREFIX_PATH "C:\\libtorch")
    project(example-app)

    find_package(Torch REQUIRED)
    set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} ${TORCH_CXX_FLAGS}")

    add_library(example-lib SHARED example-app.cpp example-app.h)
    target_link_libraries(example-lib "${TORCH_LIBRARIES}")
    set_property(TARGET example-lib PROPERTY CXX_STANDARD 14)

    add_executable(example-app example-app.cpp example-app.h)
    target_link_libraries(example-app "${TORCH_LIBRARIES}")
    set_property(TARGET example-app PROPERTY CXX_STANDARD 14)

    if (MSVC)
      file(GLOB TORCH_DLLS "${TORCH_INSTALL_PREFIX}/lib/*.dll")
      add_custom_command(TARGET example-app
                         POST_BUILD
                         COMMAND ${CMAKE_COMMAND} -E copy_if_different
                         ${TORCH_DLLS}
                         $<TARGET_FILE_DIR:example-app>)
    endif (MSVC)

The source file example-app.cpp is:

    #include <torch/torch.h>
    #include <iostream>
    #include "example-app.h"

    int test() {
      torch::Tensor tensor = torch::rand({3, 2});
      std::cout << tensor << std::endl;
      return 0;
    }

    int main() {
      test();
      return 0;
    }

and the header example-app.h is:

    extern "C" { __declspec(dllexport) int test(); }

I then want to call test() from a Python script with:

    import os
    import pathlib
    import ctypes

    libname = pathlib.Path("C:/Users/Matrix/PycharmProjects/torch_test/buil/Release")
    os.add_dll_directory(str(libname))
    c_lib = ctypes.CDLL(str(libname / "example-lib.dll"), winmode=0)
    print(c_lib.test())

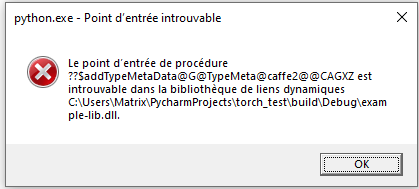

But I get the following error Procedure entry point could not be located in the dynamic link library

(sorry for the french):

Note that calling the executable works as desired. Any idea what could be causing the problem?
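One way to narrow this down, as a sketch only: since the executable finds the right DLLs but the Python process does not, it may be worth checking whether another directory on PATH holds DLLs with the same names as the ones copied into the build folder. The assumption here, which I have not confirmed as the cause, is that with winmode=0 Windows falls back to its standard DLL search order, which includes the PATH directories, so a different build of a Torch or CUDA DLL could be picked up first. The helper names `find_shadowing_dlls` and `path_dirs` are hypothetical, and the build path is the one from the script above.

```python
import os
import pathlib


def find_shadowing_dlls(build_dir, search_dirs):
    """Map each DLL name in build_dir to the other directories that also contain it.

    Names are compared case-insensitively, as Windows file names are.
    """
    build_dir = pathlib.Path(build_dir)
    if not build_dir.is_dir():
        return {}
    wanted = {p.name.lower() for p in build_dir.iterdir()
              if p.suffix.lower() == ".dll"}
    hits = {}
    for d in search_dirs:
        d = pathlib.Path(d)
        # Skip missing directories and the build directory itself.
        if not d.is_dir() or d.resolve() == build_dir.resolve():
            continue
        for p in d.iterdir():
            if p.suffix.lower() == ".dll" and p.name.lower() in wanted:
                hits.setdefault(p.name.lower(), []).append(str(d))
    return hits


def path_dirs():
    """Return the non-empty entries of the PATH environment variable."""
    return [d for d in os.environ.get("PATH", "").split(os.pathsep) if d]


if __name__ == "__main__":
    # Build directory taken from the question; adjust to the local setup.
    build = pathlib.Path("C:/Users/Matrix/PycharmProjects/torch_test/buil/Release")
    found = find_shadowing_dlls(build, path_dirs())
    if not found:
        print("No same-named DLLs found on PATH.")
    for name, dirs in sorted(found.items()):
        print(f"{name} also found in: {dirs}")
```

If this reports a same-named DLL in another directory, that copy is a candidate for the mismatched version; an empty result would suggest looking elsewhere.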

Configurations: Windows 10, MSVC, CUDA 11.7

Thanks for any help