Hi, I built PyTorch from source with:

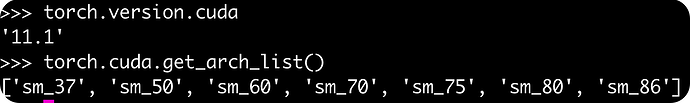

TORCH_CUDA_ARCH_LIST="3.7 5.0 6.0 7.0 7.5 8.0 8.6" python setup.py install

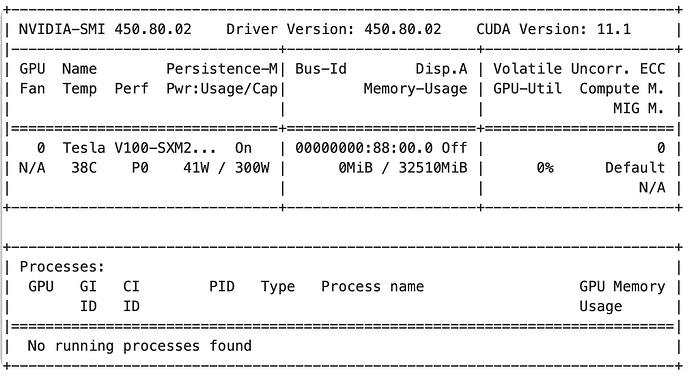

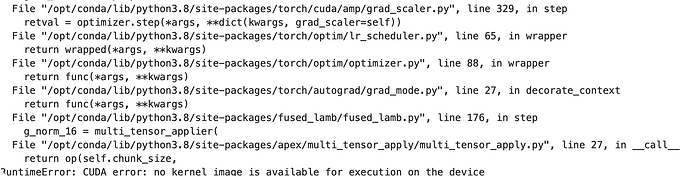

But why can't it run on a V100? The same PyTorch build runs fine on an A100 machine. I noticed that the RuntimeError happens in the optimization step provided by Apex, and this is how I installed Apex after PyTorch:

git clone https://github.com/NVIDIA/apex

cd apex

pip install -v --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" ./

This seems to be related to the Apex installation. I found the issue below and am looking into it:

opened 01:56PM - 15 Nov 19 UTC

After a GPU tensor goes through FusedLayerNorm, the next time that memory is accessed I get a `RuntimeError: CUDA error: no kernel image is available for execution on the device`.

To reproduce:

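```python
import torch
from apex.normalization import FusedLayerNorm

# Build the fused layer norm and move it to the first GPU
norm = FusedLayerNorm(16)
device = torch.device('cuda:' + str(0))
norm = norm.to(device)

# Run a random GPU tensor through the layer
x = torch.randn(3, 4, 16)
x = x.to(device)
attended = norm(x)

# Accessing the GPU memory afterwards raises the RuntimeError
print(x)
```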

Other operations on `attended` or `x` will also raise the error. However, if I move `x` to the CPU, I can then proceed to use it without any problems.

I'm running this on an AWS p3.2xlarge instance based on the [AWS Deep Learning AMI (Ubuntu 18.04) Version 25.0](https://aws.amazon.com/releasenotes/aws-deep-learning-ami-ubuntu-18-04-version-25-0/?tag=releasenotes%23keywords%23aws-deep-learning-amis). We've updated pytorch to 1.3.0, and installed GPUtil, Apex, and gpustat using the following commands:

```
source activate pytorch_p36

# Update to the latest PyTorch 1.3 (but with CUDA 10.0 instead of 10.1, because the AMI/env doesn't have it installed)
conda install pytorch==1.3.0 torchvision==0.4.1 cudatoolkit=10.0 -c pytorch -y

# Install GPUtil
pip install GPUtil

# Install NVIDIA Apex (run pip from inside the cloned repository)
git clone https://github.com/NVIDIA/apex
cd apex
pip install -v --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" ./

# Install gpustat
pip install gpustat
```

Doing the same thing on an AWS p2.xlarge instance with the same changes to the environment does not cause the error.

I guess you didn't specify the architectures while building Apex, so you would need to rebuild it.

Yes, exactly! After specifying the architectures when building Apex, it works. Thank you!
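For anyone hitting the same error later, here is a rough sketch of the reasoning behind the fix: a compiled extension only carries kernels for the architectures it was built for, so a build that covers the A100 (8.0) but not the V100 (7.0) fails on the V100 with "no kernel image is available". The helper names `parse_arch_list` and `is_supported` are made up for illustration and are not part of PyTorch or Apex. The sketch assumes a purely numeric arch list in the `TORCH_CUDA_ARCH_LIST` style (for example `"7.0 8.0"` or `"6.0+PTX"`), and it does not handle named architectures or other suffixes.

```python
def parse_arch_list(arch_list):
    """Parse a numeric arch list such as "7.0 8.0+PTX" into (major, minor, has_ptx) tuples.

    Hypothetical helper: only handles numeric entries, optionally suffixed with +PTX.
    """
    entries = []
    for token in arch_list.replace(";", " ").split():
        has_ptx = token.endswith("+PTX")
        version = token[:-4] if has_ptx else token
        major, minor = version.split(".")
        entries.append((int(major), int(minor), has_ptx))
    return entries


def is_supported(arch_list, capability):
    """Return True if a build for arch_list should have a usable kernel for the device.

    capability is a (major, minor) tuple, e.g. (7, 0) for a V100.
    """
    dev_major, dev_minor = capability
    for major, minor, has_ptx in parse_arch_list(arch_list):
        # Compiled kernels run on devices with the same major version
        # and an equal or higher minor version.
        if major == dev_major and minor <= dev_minor:
            return True
        # Embedded PTX can be JIT-compiled for any equal or newer device.
        if has_ptx and (major, minor) <= (dev_major, dev_minor):
            return True
    return False


# The PyTorch build from the first post covers a V100 (compute capability 7.0) ...
print(is_supported("3.7 5.0 6.0 7.0 7.5 8.0 8.6", (7, 0)))
# ... but an extension built only for 8.0 (A100) does not.
print(is_supported("8.0", (7, 0)))
```

On a real machine, `torch.cuda.get_device_capability(0)` returns the `(major, minor)` tuple to pass in. The fix itself is to set `TORCH_CUDA_ARCH_LIST` for the Apex `pip install` as well (the same way as in the PyTorch build command at the top), since the extension build goes through `torch.utils.cpp_extension`, which reads that variable.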