I am training a Siamese network on top of a pre-trained ResNet50. I have replaced the final fully connected layer with a 3-layer classifier and frozen all of the ResNet layers before the adaptive average pooling.

The loss function I use is below:

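    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class TripletLoss(nn.Module):
        def __init__(self, embedding_size):
            super(TripletLoss, self).__init__()
            # The margin is set to the value passed in as embedding_size.
            self.margin = embedding_size

        def forward(self, anchor, positive, negative):
            # Squared Euclidean distances: anchor-positive and anchor-negative.
            dp = (anchor - positive).pow(2).sum(1)
            dn = (anchor - negative).pow(2).sum(1)
            # Hinge on the margin; a zero loss means the triplet is satisfied.
            losses = F.relu(dp - dn + self.margin)
            # Number of triplets with zero loss (a count, not a fraction).
            acc = (losses == 0.0).type(torch.float32).sum()
            return losses.sum(), acc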

The network is trained on 1400 triplets, and 150 triplets are used for validation.
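Since the loss above returns a summed loss and a count of satisfied triplets, the training and validation curves are only comparable once both are divided by the number of triplets in each set. A minimal sketch of that normalization follows. The helper `epoch_metrics`, the `batches` iterable and the `margin` argument name are hypothetical and used for illustration only; the loss class is repeated so the snippet is self-contained.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class TripletLoss(nn.Module):
    """Same computation as the loss above; `margin` is an illustrative name."""

    def __init__(self, margin):
        super().__init__()
        self.margin = margin

    def forward(self, anchor, positive, negative):
        dp = (anchor - positive).pow(2).sum(1)
        dn = (anchor - negative).pow(2).sum(1)
        losses = F.relu(dp - dn + self.margin)
        acc = (losses == 0.0).type(torch.float32).sum()
        return losses.sum(), acc


def epoch_metrics(criterion, batches):
    """Return (mean loss per triplet, fraction of satisfied triplets).

    `batches` is a hypothetical iterable of (anchor, positive, negative)
    embedding tensors, each of shape (batch_size, embedding_dim).
    """
    total_loss, total_correct, total_triplets = 0.0, 0.0, 0
    with torch.no_grad():
        for anchor, positive, negative in batches:
            loss_sum, correct = criterion(anchor, positive, negative)
            total_loss += loss_sum.item()
            total_correct += correct.item()
            total_triplets += anchor.size(0)
    return total_loss / total_triplets, total_correct / total_triplets


# Tiny hand-made batch: the first triplet is satisfied, the second is not.
criterion = TripletLoss(margin=1.0)
anchor = torch.zeros(2, 2)
positive = torch.zeros(2, 2)
negative = torch.tensor([[2.0, 0.0], [0.0, 0.0]])
mean_loss, accuracy = epoch_metrics(criterion, [(anchor, positive, negative)])
```

With per-triplet averages, a set of 1400 triplets and a set of 150 triplets end up on the same scale.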

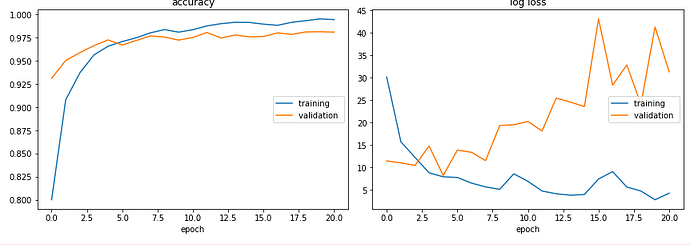

The plot above shows the loss and accuracy. I am confused about how to interpret it: the validation loss increases after epoch 5, but the validation accuracy either stays the same or increases slightly. Is this a good model because the accuracy is high, or is something wrong?
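To make the question concrete, here is a toy illustration of how this loss can rise while the accuracy stays flat. The distances and the margin are made up and are not taken from the actual validation data. Accuracy only counts triplets whose loss is exactly zero, so a few violating triplets getting worse can raise the summed loss without changing the count.

```python
import torch
import torch.nn.functional as F

margin = 1.0  # hypothetical margin, chosen only for this illustration


def loss_and_acc(dp, dn):
    """Summed hinge loss and fraction of triplets with zero loss."""
    losses = F.relu(dp - dn + margin)
    return losses.sum().item(), (losses == 0.0).float().mean().item()


# "Earlier epoch": three triplets satisfied, one violated by a small amount.
dp_early = torch.tensor([0.5, 0.5, 0.5, 2.0])
dn_early = torch.tensor([3.0, 3.0, 3.0, 2.5])

# "Later epoch": the same three are satisfied with a wider gap,
# but the violating triplet is now much worse.
dp_late = torch.tensor([0.2, 0.2, 0.2, 4.0])
dn_late = torch.tensor([5.0, 5.0, 5.0, 1.0])

early_loss, early_acc = loss_and_acc(dp_early, dn_early)
late_loss, late_acc = loss_and_acc(dp_late, dn_late)
print(early_loss, early_acc)
print(late_loss, late_acc)
```

In this made-up case the accuracy is identical in both epochs while the summed loss grows, which is at least one pattern consistent with the curves described here.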

Any comments or suggestions to improve training would be appreciated.