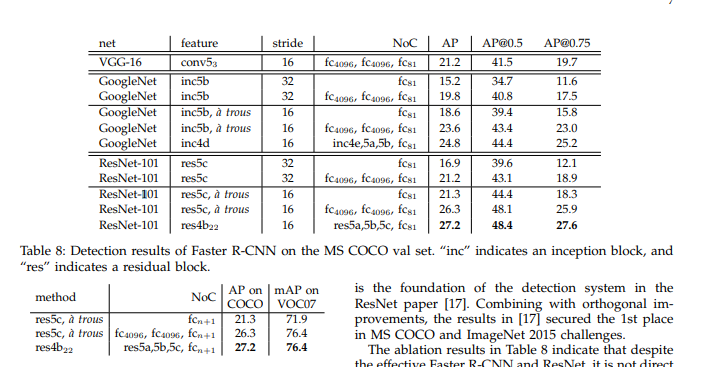

When I look at the ResNet source code in `torchvision.models`, ResNet-101 is built as:

```python
model = ResNet(Bottleneck, [3, 4, 23, 3], **kwargs)
```

And the `ResNet` constructor contains:

```python
self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                       bias=False)
self.bn1 = nn.BatchNorm2d(64)
self.relu = nn.ReLU(inplace=True)
self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
self.layer1 = self._make_layer(block, 64, layers[0])
self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
```

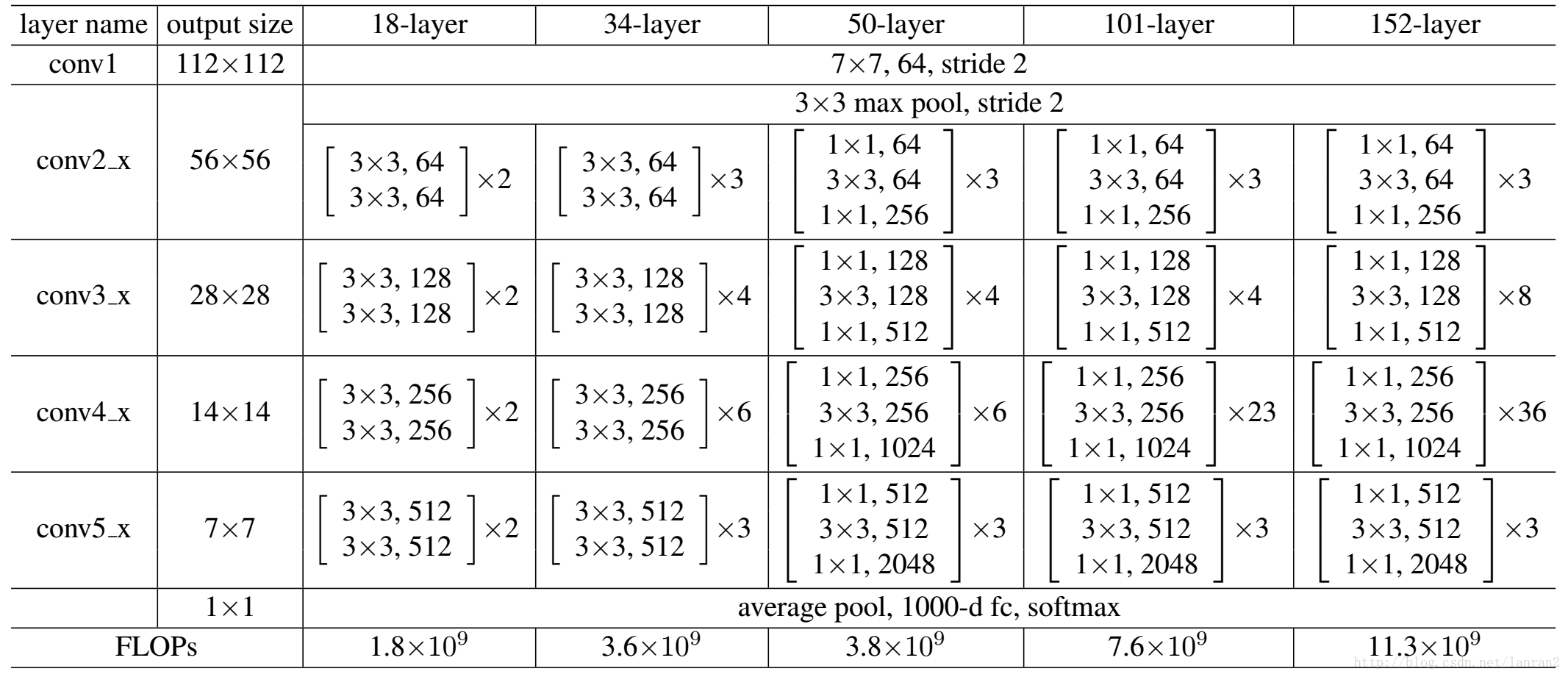

Compared with this image, it seems that `layer4` corresponds to conv5_x.
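As a quick sanity check of that correspondence, the block counts passed to `ResNet(...)` can be lined up against the stage names in the table. This is a small sketch assuming the usual layout (a conv1 stem, then conv2_x to conv5_x built by `layer1` to `layer4`); the `STAGES` dictionary and `depth` helper are hypothetical, not part of torchvision.

```python
# Hypothetical mapping (not a torchvision API) from the paper's stage names
# to torchvision ResNet attributes, using the block counts from ResNet(...).
LAYERS = [3, 4, 23, 3]  # Bottleneck blocks per stage for ResNet-101

# conv2_x -> layer1, conv3_x -> layer2, conv4_x -> layer3, conv5_x -> layer4
STAGES = {
    f"conv{i + 2}_x": (f"layer{i + 1}", n_blocks)
    for i, n_blocks in enumerate(LAYERS)
}


def depth(layers, convs_per_block=3):
    """Count weighted layers: stem conv + 3 convs per Bottleneck + final fc."""
    return 1 + convs_per_block * sum(layers) + 1


if __name__ == "__main__":
    for stage, (attr, n) in STAGES.items():
        print(f"{stage:8s} -> model.{attr} ({n} Bottleneck blocks)")
    print("depth:", depth(LAYERS))
```

The depth works out to 1 + 3 × 33 + 1 = 101, which is consistent with `layer1` to `layer4` being exactly conv2_x to conv5_x.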

So how should I perform the fine-tuning described in the paper on the PyTorch ResNet-101 model?
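One common way to fine-tune only the later part of a network in PyTorch is to set `requires_grad = False` on every parameter outside the chosen submodules and pass only the remaining parameters to the optimizer. The helper below is a hypothetical sketch: it accepts any iterable of `(name, parameter)` pairs, such as `model.named_parameters()`, and the prefixes `"layer3.22"`, `"layer4"` and `"fc"` are an assumed choice (train from the last conv4_x block onward), not necessarily the paper's setup.

```python
from types import SimpleNamespace


def set_trainable(named_params, trainable_prefixes):
    """Freeze every parameter whose name is not under one of
    trainable_prefixes; return the names that stay trainable.

    named_params: iterable of (name, param) pairs, e.g. model.named_parameters().
    A prefix matches a whole dotted component, so "layer3.22" does not
    match "layer3.2.conv1.weight".
    """
    trainable = []
    for name, param in named_params:
        keep = any(name == p or name.startswith(p + ".") for p in trainable_prefixes)
        param.requires_grad = keep
        if keep:
            trainable.append(name)
    return trainable


if __name__ == "__main__":
    # Stand-ins with a requires_grad flag and torchvision-style parameter
    # names; with PyTorch you would pass model.named_parameters() instead.
    fake = [(n, SimpleNamespace(requires_grad=True)) for n in [
        "conv1.weight", "layer3.21.conv1.weight", "layer3.22.conv1.weight",
        "layer4.0.conv1.weight", "fc.weight", "fc.bias",
    ]]
    print(set_trainable(fake, ["layer3.22", "layer4", "fc"]))
```

With a real model, the optimizer would then receive only the unfrozen tensors, e.g. `torch.optim.SGD([p for p in model.parameters() if p.requires_grad], lr=..., momentum=0.9)`. Note that freezing `requires_grad` does not stop BatchNorm running statistics from updating in `train()` mode; if that matters, the frozen BatchNorm modules also need to be put in `eval()` mode.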

Does “res4b22” correspond to `list(model.layer3.children())[-1]`?

What about “res5a”, “res5b” and “res5c”? Do they correspond to the three Bottleneck blocks of conv5_x?
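For reference, here is a sketch of how the Caffe-style block names could be translated to torchvision module paths. It assumes the naming used in the released Caffe ResNet-101 prototxt (`res2a` to `res2c`, `res3a` plus `res3b1` to `res3b3`, `res4a` plus `res4b1` to `res4b22`, `res5a` to `res5c`), where `resN` is stage convN_x, i.e. torchvision's `layer{N-1}`. The function name `caffe_block_to_torchvision` is hypothetical.

```python
import re

# Matches block names such as "res2a", "res3b3", "res4b22", "res5c".
_CAFFE_BLOCK = re.compile(r"^res([2-5])([a-z])(\d*)$")


def caffe_block_to_torchvision(name: str) -> str:
    """Translate a Caffe ResNet block name into a torchvision module path."""
    m = _CAFFE_BLOCK.match(name)
    if m is None:
        raise ValueError(f"not a Caffe ResNet block name: {name!r}")
    stage, letter, number = int(m.group(1)), m.group(2), m.group(3)
    if number:
        # Numbered style (ResNet-101/152): "a" is block 0, then b1, b2, ... are 1, 2, ...
        if letter != "b":
            raise ValueError(f"unexpected numbered block name: {name!r}")
        index = int(number)
    else:
        # Letter style: a, b, c, ... are blocks 0, 1, 2, ...
        index = ord(letter) - ord("a")
    # Stage resN (convN_x) is built by torchvision's layer{N-1}.
    return f"layer{stage - 1}.{index}"


if __name__ == "__main__":
    for n in ["res2a", "res3b3", "res4a", "res4b22", "res5a", "res5b", "res5c"]:
        print(n, "->", caffe_block_to_torchvision(n))
```

Under this assumption, `res4b22` maps to `layer3.22`; since `layer3` holds 23 blocks, `model.layer3[22]` is the same module as `list(model.layer3.children())[-1]`. Likewise `res5a`, `res5b` and `res5c` map to `layer4.0`, `layer4.1` and `layer4.2`. With a real model, such a path can be resolved with `model.get_submodule(...)` in recent PyTorch versions, or by indexing the `nn.Sequential` directly.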