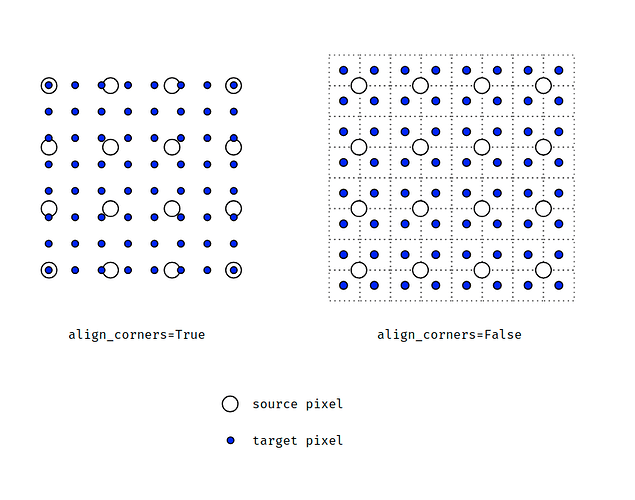

Here is a simple illustration I made showing how a 4x4 image is upsampled to 8x8.

When align_corners=True, pixels are regarded as a grid of points. The points at the four corners of the input and output grids are aligned, so the corner values are preserved exactly.
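To make the point-grid view concrete, here is a minimal sketch of the index mapping for one axis. The helper name src_coord_aligned is my own for illustration, not a PyTorch function; it only computes where each output point lands in input coordinates.

```python
def src_coord_aligned(dst, in_size, out_size):
    """Map an output index to an input coordinate, treating pixels as points.

    Hypothetical helper for illustration: the first and last output points
    land exactly on the first and last input points.
    """
    if out_size == 1:
        return 0.0  # a single output point sits on the first input point
    return dst * (in_size - 1) / (out_size - 1)


# 4 input points upsampled to 8 output points along one axis
aligned_coords = [src_coord_aligned(i, 4, 8) for i in range(8)]
print(aligned_coords[0], aligned_coords[-1])  # 0.0 3.0
```

The first and last output points sit exactly on input points 0 and 3, and the spacing between output points is 3/7 of an input step rather than 1/2.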

When align_corners=False, pixels are regarded as 1x1 areas. The outer boundaries of the input and output areas are aligned, rather than the centers of the corner pixels.
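The area view can be sketched the same way. Again the helper name src_coord_half_pixel is hypothetical; it assumes the common half-pixel convention, where the center of pixel i sits at i + 0.5 and the image spans from 0 to its size.

```python
def src_coord_half_pixel(dst, in_size, out_size):
    """Map an output pixel center to an input coordinate, treating pixels as areas.

    Hypothetical helper for illustration: the outer edges of the two images
    coincide, so pixel centers are offset by half a pixel before scaling.
    """
    scale = in_size / out_size
    return (dst + 0.5) * scale - 0.5


# 4 input pixels upsampled to 8 output pixels along one axis
half_pixel_coords = [src_coord_half_pixel(i, 4, 8) for i in range(8)]
print(half_pixel_coords[0], half_pixel_coords[-1])  # -0.25 3.25
```

Here the outermost output centers fall at -0.25 and 3.25, slightly outside the range of input centers (0 to 3) but inside the input area (-0.5 to 3.5). How an implementation treats those out-of-range coordinates at the border is a separate choice that this sketch does not cover.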