When I use DDP package to train imagenet, there are always OOM problem.

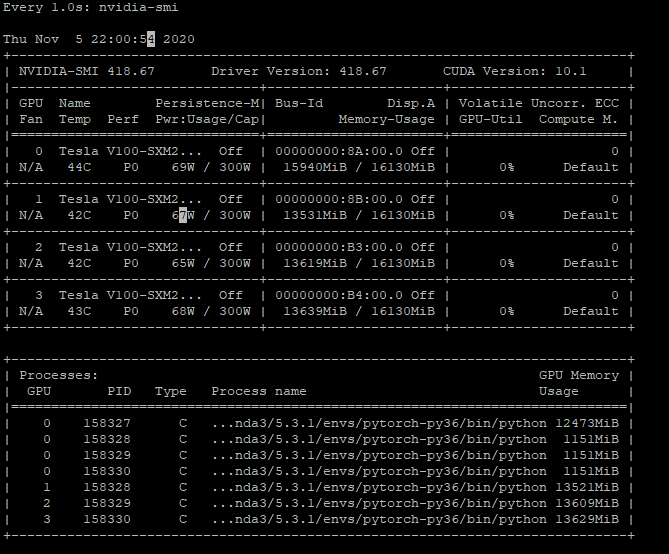

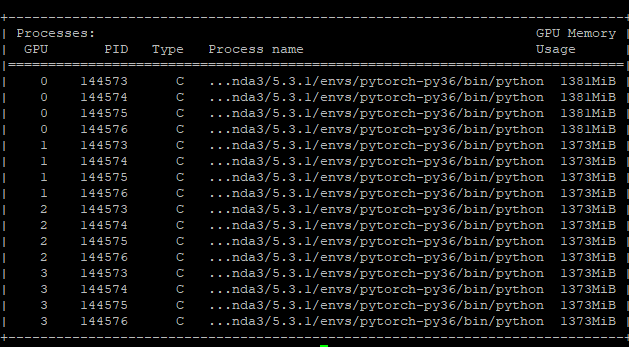

I check the GPU utilization and I found there are many processes on each GPU ?

What is the reason and how can I avoid this problem ?

This shouldn’t happen and each process should use one GPU and thus create one CUDA context.

Are you calling CUDA operations on all devices in the script or did you write device-agnostic code, which only uses a single GPU?

1 Like

Thanks for your reply!

I have four gpu in my node and I do training in one node. The previous problem was that I set the device incorrectly, I set device = torch.device('cuda') instead of device = torch.device('cuda:{}'.format(args.local_rank)).

Now the gpu0 has 4 processes and the other has only one process

This is my script:

import argparse

import os

import random

import shutil

import time

import warnings

import datetime

import torch

import torch.nn as nn

import torch.nn.parallel

import torch.backends.cudnn as cudnn

import torch.distributed as dist

import torch.optim

import torch.utils.data

import torch.utils.data.distributed

import torchvision.transforms as transforms

import torchvision.datasets as datasets

import torchvision.models as models

import Inceptionv3Net as Incepv3

model_names = sorted(name for name in models.__dict__

if name.islower() and not name.startswith("__")

and callable(models.__dict__[name]))

parser = argparse.ArgumentParser(description='PyTorch ImageNet Training')

parser.add_argument('data', metavar='DIR',

help='path to dataset')

parser.add_argument('-a', '--arch', metavar='ARCH', default='resnet18',

choices=model_names,

help='model architecture: ' +

' | '.join(model_names) +

' (default: resnet18)')

parser.add_argument('-j', '--workers', default=4, type=int, metavar='N',

help='number of data loading workers (default: 4)')

parser.add_argument('--epochs', default=90, type=int, metavar='N',

help='number of total epochs to run')

parser.add_argument('--start-epoch', default=0, type=int, metavar='N',

help='manual epoch number (useful on restarts)')

parser.add_argument('-b', '--batch-size', default=96, type=int,

metavar='N',

help='mini-batch size (default: 256), this is the total '

'batch size of all GPUs on the current node when '

'using Data Parallel or Distributed Data Parallel')

parser.add_argument('--lr', '--learning-rate', default=0.01, type=float,

metavar='LR', help='initial learning rate', dest='lr')

parser.add_argument('--momentum', default=0.9, type=float, metavar='M',

help='momentum')

parser.add_argument('--wd', '--weight-decay', default=1e-4, type=float,

metavar='W', help='weight decay (default: 1e-4)',

dest='weight_decay')

parser.add_argument('-p', '--print-freq', default=10, type=int,

metavar='N', help='print frequency (default: 10)')

parser.add_argument('--resume', default='', type=str, metavar='PATH',

help='path to latest checkpoint (default: none)')

parser.add_argument('-e', '--evaluate', dest='evaluate', action='store_true',

help='evaluate model on validation set')

parser.add_argument('--pretrained', dest='pretrained', action='store_true',

help='use pre-trained model')

parser.add_argument('--world-size', default=-1, type=int,

help='number of nodes for distributed training')

parser.add_argument('--rank', default=-1, type=int,

help='node rank for distributed training')

parser.add_argument('--dist-url', default='tcp://224.66.41.62:23456', type=str,

help='url used to set up distributed training')

parser.add_argument('--backend', default='gloo', type=str,

help='distributed backend')

parser.add_argument('--seed', default=None, type=int,

help='seed for initializing training. ')

parser.add_argument('--gpu', default=None, type=int,

help='GPU id to use.')

parser.add_argument('--multiprocessing-distributed', action='store_true',

help='Use multi-processing distributed training to launch '

'N processes per node, which has N GPUs. This is the '

'fastest way to use PyTorch for either single node or '

'multi node data parallel training')

parser.add_argument("--local_rank", type=int)

parser.add_argument('--no-cuda',action='store_true',default=False,help='disable cuda')

parser.add_argument('--nproc-per-node',default=4,type=int, help='nproc_per_node')

global best_acc1, args

best_acc1 = 0

args = parser.parse_args()

def main():

global best_acc1, args

local_rank = args.local_rank

if args.seed is not None:

random.seed(args.seed)

torch.manual_seed(args.seed)

cudnn.deterministic = True

warnings.warn('You have chosen to seed training. '

'This will turn on the CUDNN deterministic setting, '

'which can slow down your training considerably! '

'You may see unexpected behavior when restarting '

'from checkpoints.')

use_cuda = not args.no_cuda and torch.cuda.is_available()

gpu = "cuda:{}".format(args.local_rank)

device = torch.device(gpu if use_cuda else "cpu")

print("=> using",device)

print("From Node:",torch.distributed.get_rank(),"The Local Rank:",local_rank,'\n')

# create model

if args.pretrained:

print("=> using pre-trained model '{}'".format(args.arch))

model = models.__dict__[args.arch]()

if args.gpu is None:

pre = torch.load('./pretrained/inception_v3_google-1a9a5a14.pth', map_location=lambda storage, loc: storage)

else:

loc = 'cuda:{}'.format(args.gpu)

pre = torch.load('./pretrained/inception_v3_google-1a9a5a14.pth', map_location=loc)

model.load_state_dict(pre)

#model.aux_logits = False

#model = Incepv3.InceptionV3Net(pretrained=args.pretrained)

else:

print("=> creating model '{}'".format(args.arch))

model = models.__dict__[args.arch]()

#model.aux_logits = False

#model = Incepv3.InceptionV3Net(pretrained=args.pretrained)

# optionally resume from a checkpoint

if args.resume:

if os.path.isfile(args.resume):

print("=> loading checkpoint '{}'".format(args.resume))

if args.gpu is None:

checkpoint = torch.load(args.resume)

else:

# Map model to be loaded to specified single gpu.

loc = 'cuda:{}'.format(args.gpu)

checkpoint = torch.load(args.resume, map_location=loc)

args.start_epoch = checkpoint['epoch']

best_acc1 = checkpoint['best_acc1']

if args.gpu is not None:

# best_acc1 may be from a checkpoint from a different GPU

best_acc1 = best_acc1.to(args.gpu)

model.load_state_dict(checkpoint['state_dict'])

optimizer.load_state_dict(checkpoint['optimizer'])

print("=> loaded checkpoint '{}' (epoch {})"

.format(args.resume, checkpoint['epoch']))

else:

print("=> no checkpoint found at '{}'".format(args.resume))

cudnn.benchmark = True

# Load model

model.to(device)

model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank], find_unused_parameters=True)

criterion = nn.CrossEntropyLoss().to(device)

#ptimizer = torch.optim.SGD(model.parameters(), args.lr,momentum=args.momentum,weight_decay=args.weight_decay)

optimizer = torch.optim.RMSprop(model.parameters(), lr=args.lr, alpha=0.9, momentum=args.momentum, eps=1.0, weight_decay=args.weight_decay)

# Data loading code

input_size = 299

traindir = os.path.join(args.data, 'train')

valdir = os.path.join(args.data, 'val')

normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225])

train_dataset = datasets.ImageFolder(

traindir,

transforms.Compose([

transforms.RandomResizedCrop(input_size),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

normalize,

]))

train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)

train_loader = torch.utils.data.DataLoader(

train_dataset, batch_size=args.batch_size, shuffle=(train_sampler is None),

num_workers=args.workers, pin_memory=True, sampler=train_sampler)

val_loader = torch.utils.data.DataLoader(

datasets.ImageFolder(valdir, transforms.Compose([

transforms.Resize(input_size),

transforms.CenterCrop(input_size),

transforms.ToTensor(),

normalize,

])),

batch_size=args.batch_size, shuffle=False,

num_workers=args.workers, pin_memory=True)

for epoch in range(args.start_epoch, args.epochs):

train_sampler.set_epoch(epoch)

adjust_learning_rate(optimizer, epoch, args)

# train for one epoch

train(train_loader, model, criterion, optimizer, epoch, args, device, True)

# evaluate on validation set

acc1 = validate(val_loader, model, criterion, args, device, False)

# remember best acc@1 and save checkpoint

is_best = acc1 > best_acc1

best_acc1 = max(acc1, best_acc1)

if not args.multiprocessing_distributed or (args.multiprocessing_distributed

and args.rank % args.nproc_per_node == 0):

save_checkpoint({

'epoch': epoch + 1,

'arch': args.arch,

'state_dict': model.state_dict(),

'best_acc1': best_acc1,

'optimizer' : optimizer.state_dict(),

}, is_best)

def train(train_loader, model, criterion, optimizer, epoch, args, device, is_inception=False):

batch_time = AverageMeter('Time', ':6.3f')

data_time = AverageMeter('Data', ':6.3f')

losses = AverageMeter('Loss', ':.4e')

top1 = AverageMeter('Acc@1', ':6.2f')

top5 = AverageMeter('Acc@5', ':6.2f')

progress = ProgressMeter(

len(train_loader),

[batch_time, data_time, losses, top1, top5],

prefix="Epoch: [{}]".format(epoch))

# switch to train mode

model.train()

end = time.time()

for i, (images, target) in enumerate(train_loader):

# measure data loading time

data_time.update(time.time() - end)

images, target = images.to(device, non_blocking=True), target.to(device, non_blocking=True)

# compute output

if is_inception:

output, aux_output = model(images)

loss1 = criterion(output,target)

loss2 = criterion(aux_output,target)

loss = loss1 + 0.4 * loss2

else:

output = model(images)

loss = criterion(output, target)

# measure accuracy and record loss

acc1, acc5 = accuracy(output, target, topk=(1, 5))

losses.update(loss.item(), images.size(0))

top1.update(acc1[0], images.size(0))

top5.update(acc5[0], images.size(0))

# compute gradient and do SGD step

optimizer.zero_grad()

loss.backward()

optimizer.step()

# measure elapsed time

batch_time.update(time.time() - end)

end = time.time()

if i % args.print_freq == 0:

progress.display(i)

def validate(val_loader, model, criterion, args, device, is_inception=False):

batch_time = AverageMeter('Time', ':6.3f')

losses = AverageMeter('Loss', ':.4e')

top1 = AverageMeter('Acc@1', ':6.2f')

top5 = AverageMeter('Acc@5', ':6.2f')

progress = ProgressMeter(

len(val_loader),

[batch_time, losses, top1, top5],

prefix='Test: ')

# switch to evaluate mode

model.eval()

with torch.no_grad():

end = time.time()

for i, (images, target) in enumerate(val_loader):

images, target = images.to(device, non_blocking=True), target.to(device, non_blocking=True)

# compute output

if is_inception:

output, aux_output = model(images)

loss1 = criterion(output, target)

loss2 = criterion(aux_output, target)

loss = loss1 + 0.4 * loss2

else:

output = model(images)

loss = criterion(output, target)

# measure accuracy and record loss

acc1, acc5 = accuracy(output, target, topk=(1, 5))

losses.update(loss.item(), images.size(0))

top1.update(acc1[0], images.size(0))

top5.update(acc5[0], images.size(0))

# measure elapsed time

batch_time.update(time.time() - end)

end = time.time()

if i % args.print_freq == 0:

progress.display(i)

# TODO: this should also be done with the ProgressMeter

print(' * Acc@1 {top1.avg:.3f} Acc@5 {top5.avg:.3f}'

.format(top1=top1, top5=top5))

return top1.avg

def save_checkpoint(state, is_best, filename='checkpoint.pth.tar'):

torch.save(state, filename)

if is_best:

shutil.copyfile(filename, 'model_best.pth.tar')

class AverageMeter(object):

"""Computes and stores the average and current value"""

def __init__(self, name, fmt=':f'):

self.name = name

self.fmt = fmt

self.reset()

def reset(self):

self.val = 0

self.avg = 0

self.sum = 0

self.count = 0

def update(self, val, n=1):

self.val = val

self.sum += val * n

self.count += n

self.avg = self.sum / self.count

def __str__(self):

fmtstr = '{name} {val' + self.fmt + '} ({avg' + self.fmt + '})'

return fmtstr.format(**self.__dict__)

class ProgressMeter(object):

def __init__(self, num_batches, meters, prefix=""):

self.batch_fmtstr = self._get_batch_fmtstr(num_batches)

self.meters = meters

self.prefix = prefix

def display(self, batch):

entries = [self.prefix + self.batch_fmtstr.format(batch)]

entries += [str(meter) for meter in self.meters]

print('\t'.join(entries))

def _get_batch_fmtstr(self, num_batches):

num_digits = len(str(num_batches // 1))

fmt = '{:' + str(num_digits) + 'd}'

return '[' + fmt + '/' + fmt.format(num_batches) + ']'

def adjust_learning_rate(optimizer, epoch, args):

"""Sets the learning rate to the initial LR decayed by 10 every 30 epochs"""

lr = args.lr * (0.1 ** (epoch // 30))

for param_group in optimizer.param_groups:

param_group['lr'] = lr

def accuracy(output, target, topk=(1,)):

"""Computes the accuracy over the k top predictions for the specified values of k"""

with torch.no_grad():

maxk = max(topk)

batch_size = target.size(0)

_, pred = output.topk(maxk, 1, True, True)

pred = pred.t()

correct = pred.eq(target.view(1, -1).expand_as(pred))

res = []

for k in topk:

correct_k = correct[:k].reshape(-1).float().sum(0, keepdim=True)

res.append(correct_k.mul_(100.0 / batch_size))

return res

if __name__ == '__main__':

print('Use {back} as backend.'.format(back=args.backend))

dist.init_process_group(backend=args.backend, init_method='env://', timeout=datetime.timedelta(seconds=1000))

main()