Hello,

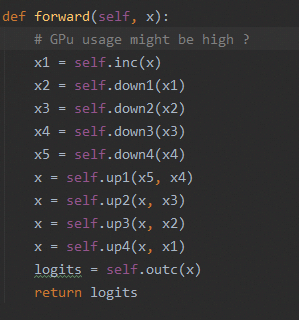

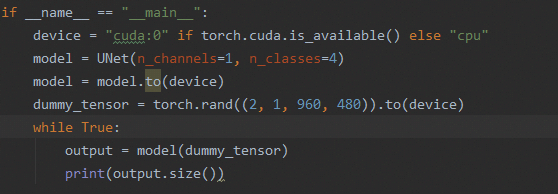

I am using a UNet model for an image segmentation task. After building the model, I tried to test its forward() pass with a dummy tensor. The script is shown below:

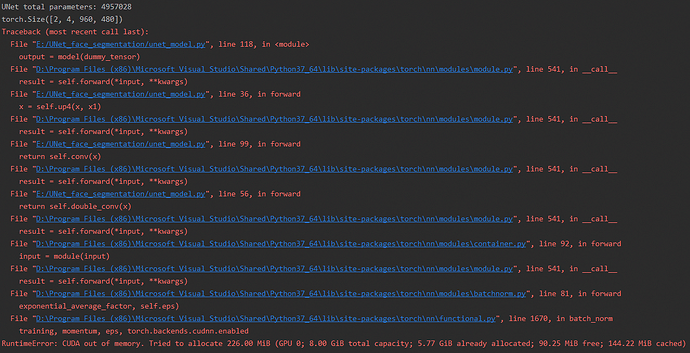

However, right after the first iteration, when the console printed the size of the output tensor, I got a GPU out-of-memory (OOM) error.

Is this caused by creating too many tensor variables in the forward() method of the UNet?
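
To make the question more concrete, here is a rough back-of-the-envelope sketch of how much memory intermediate feature maps can take. The shapes are hypothetical (they are not the shapes from my script), and I am assuming float32 tensors at 4 bytes per element. It only counts the tensors listed, not weights, gradients, or allocator overhead.

```python
def tensor_bytes(shape, bytes_per_element=4):
    """Return the size in bytes of a dense tensor with the given shape.

    bytes_per_element defaults to 4, i.e. float32 (an assumption).
    """
    count = 1
    for dim in shape:
        count *= dim
    return count * bytes_per_element


# Hypothetical encoder feature maps as (batch, channels, height, width).
# These are illustrative values only, not taken from the actual model.
activation_shapes = [
    (8, 64, 512, 512),
    (8, 128, 256, 256),
    (8, 256, 128, 128),
    (8, 512, 64, 64),
]

# Sum the memory of every feature map that is kept alive at the same time.
total_bytes = sum(tensor_bytes(shape) for shape in activation_shapes)
print(f"{total_bytes / 1024**3:.2f} GiB for {len(activation_shapes)} feature maps")
```

If numbers like these are realistic, a handful of intermediate tensors kept alive between iterations could already use a large share of the GPU memory, which is why I suspect the variables created in forward().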