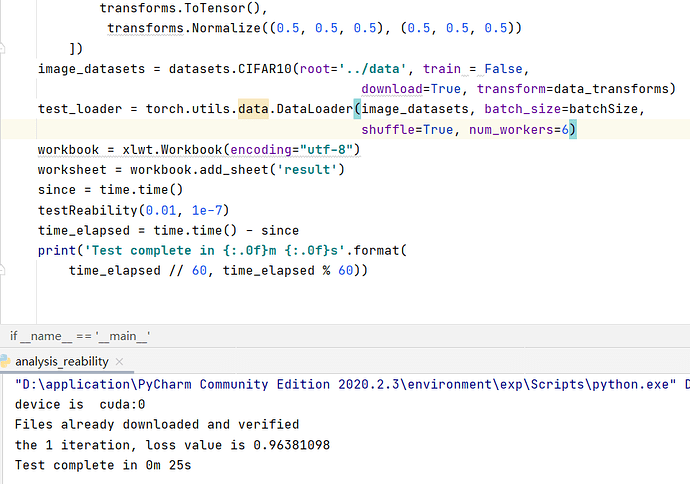

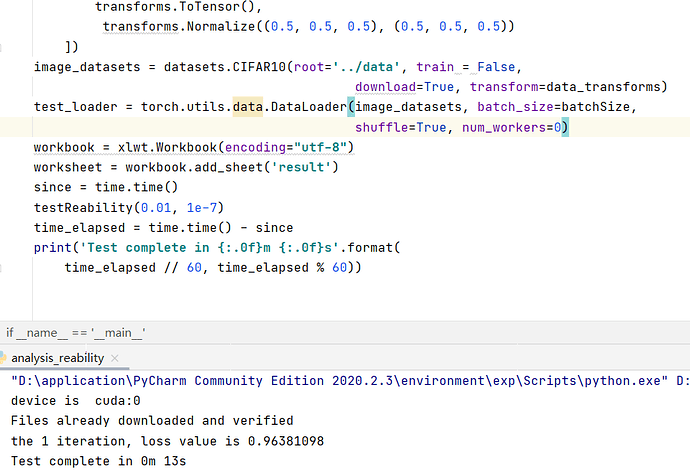

I think the more num_works, the time cost for inference should be less. But the result from my code is in opposite. I use trained resnet-18 to inference the test set. When the num_work=0, the time cost is around 12s, but when num_work =6, the time is around 25s.

Below is my code.

import torch

from torchvision import datasets, transforms

import time

import torch.nn.functional as F

def testReability():

lossSum = 0.0

lossNumebr = 0

for i in range(1):

model = torch.load('resnet_18-cifar_10.pth')

model.to(device)

model.eval()

for data, target in test_loader:

data = data.to(device)

target = target.to(device)

output = model(data)

lossSum = lossSum + F.cross_entropy(output, target, reduction='sum').item()

lossNumebr = lossNumebr + len(test_loader) * batchSize

curLoss = lossSum / lossNumebr

print("the {} iteration, loss value is {:.8f}".format(i+1, curLoss))

if __name__ == '__main__':

batchSize=10

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

print("device is ", device)

data_transforms = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])

image_datasets = datasets.CIFAR10(root='../data', train = False,

download=True, transform=data_transforms)

test_loader = torch.utils.data.DataLoader(image_datasets, batch_size=batchSize,

shuffle=True, num_workers=0)

since = time.time()

testReability()

time_elapsed = time.time() - since

print('Test complete in {:.0f}m {:.0f}s'.format(

time_elapsed // 60, time_elapsed % 60))

There are my results.