I have the following code snippet in the train cell:

    network = Network()
    network.cuda()

    criterion = nn.MSELoss()
    optimizer = optim.Adam(network.parameters(), lr=0.0001)

    loss_min = np.inf
    num_epochs = 10

    start_time = time.time()
    for epoch in range(1,num_epochs+1):

        loss_train = 0
        loss_test = 0
        running_loss = 0

        network.train()
        print('size of train loader is: ', len(train_loader))

        for step in range(1, len(train_loader)+1):

            batch = next(iter(train_loader))
            images, landmarks = batch['image'], batch['landmarks']
            print(images.shape)
            images = images.unsqueeze_(1)
            images = torch.cat((images,images,images),1)
            images = images.cuda()

            landmarks = landmarks.view(landmarks.size(0),-1).cuda()

            norm_image = transforms.Normalize(0.3812, 0.1123)
            for image in images:
                image = image.float()
                ##image = to_tensor(image) #TypeError: pic should be PIL Image or ndarray. Got <class 'torch.Tensor'>
                image = norm_image(image)

            ###removing landmarks normalize because of the following error
            ###ValueError: Expected tensor to be a tensor image of size (C, H, W). Got tensor.size() = torch.Size([8, 8])
            for i in range(8):
                if(i%2==0):
                    landmarks[:,i] = landmarks[:,i]/800
                else:
                    landmarks[:,i] = landmarks[:,i]/600
            print(landmarks.shape)
            print(landmarks)

            ##norm_landmarks = transforms.Normalize(0.4949, 0.2165)
            landmarks [landmarks != landmarks] = 0
            landmarks = landmarks.unsqueeze_(0)
            landmarks = norm_landmarks(landmarks)

            predictions = network(images)

            # clear all the gradients before calculating them
            optimizer.zero_grad()

            print('predictions are: ', predictions.float())
            print('landmarks are: ', landmarks.float())

            # find the loss for the current step
            loss_train_step = criterion(predictions.float(), landmarks.float())
            loss_train_step = loss_train_step.to(torch.float32)
            print("loss_train_step before backward: ", loss_train_step)

            # calculate the gradients
            loss_train_step.backward()

            # update the parameters
            optimizer.step()
            print("loss_train_step after backward: ", loss_train_step)

            loss_train += loss_train_step.item()
            print("loss_train: ", loss_train)
            running_loss = loss_train/step
            print('step: ', step)
            print('running loss: ', running_loss)

            print_overwrite(step, len(train_loader), running_loss, 'train')

        network.eval()
        with torch.no_grad():

            for step in range(1,len(test_loader)+1):

                batch = next(iter(train_loader))
                images, landmarks = batch['image'], batch['landmarks']
                images = images.cuda()
                landmarks = landmarks.view(landmarks.size(0),-1).cuda()

                ##[8, 600, 800] --> [8,3,600,800]
                images = images.unsqueeze(1)
                images = torch.cat((images, images, images), 1)

                predictions = network(images)

                # find the loss for the current step
                loss_test_step = criterion(predictions, landmarks)

                loss_test += loss_test_step.item()
                running_loss = loss_test/step

                print_overwrite(step, len(test_loader), running_loss, 'Validation')

        loss_train /= len(train_loader)
        loss_test /= len(test_loader)

        print('\n--------------------------------------------------')
        print('Epoch: {} Train Loss: {:.4f} Valid Loss: {:.4f}'.format(epoch, loss_train, loss_test))
        print('--------------------------------------------------')

        if loss_test < loss_min:
            loss_min = loss_test
            torch.save(network.state_dict(), '../moth_landmarks.pth')
            print("\nMinimum Valid Loss of {:.4f} at epoch {}/{}".format(loss_min, epoch, num_epochs))
            print('Model Saved\n')

    print('Training Complete')
    print("Total Elapsed Time : {} s".format(time.time()-start_time))

And I have the following code in the evaluation cell:

    start_time = time.time()

    with torch.no_grad():

        best_network = Network()
        best_network.cuda()
        best_network.load_state_dict(torch.load('../moth_landmarks.pth'))
        best_network.eval()

        batch = next(iter(train_loader))
        images, landmarks = batch['image'], batch['landmarks']
        images = images.cuda()
        landmarks = (landmarks + 0.5) * 224

        ##[8, 600, 800] --> [8,3,600,800]
        images = images.unsqueeze(1)
        images = torch.cat((images, images, images), 1)

        predictions = (best_network(images).cpu() + 0.5) * 224
        predictions = predictions.view(-1,4,2)

        plt.figure(figsize=(10,40))

        for img_num in range(8):
            plt.subplot(8,1,img_num+1)
            plt.imshow(images[img_num].cpu().numpy().transpose(1,2,0).squeeze(), cmap='gray')
            plt.scatter(predictions[img_num,:,0], predictions[img_num,:,1], c = 'r')
            plt.scatter(landmarks[img_num,:,0], landmarks[img_num,:,1], c = 'g')

    print('Total number of test images: {}'.format(len(test_dataset)))

    end_time = time.time()
    print("Elapsed Time : {}".format(end_time - start_time))

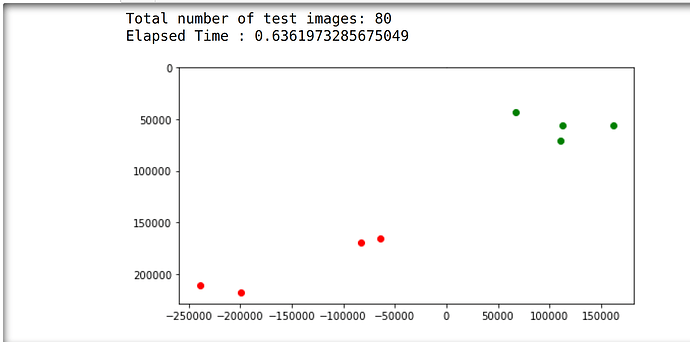

These are the predictions I am getting (the green ones are the ground truth):

I am not sure why these predictions are so far off or how to fix them.

The line

    landmarks = (landmarks + 0.5) * 224

is taken from the "Face Landmarks Detection" tutorial on Thecleverprogrammer.

This is what I have in the network section:

    num_classes = 4 * 2 #4 coordinates X and Y flattened --> 4 of 2D keypoints or landmarks

    class Network(nn.Module):
        def __init__(self,num_classes=8):
            super().__init__()
            self.model_name = 'resnet18'
            self.model = models.resnet18()
            self.model.fc = nn.Linear(self.model.fc.in_features, num_classes)

        def forward(self, x):
            x = x.float()
            out = self.model(x)
            return out

Additionally, do you know how to apply the following normalization, which I have in the training cell, in the evaluation cell as well?

    for i in range(8):
        if(i%2==0):
            landmarks[:,i] = landmarks[:,i]/800
        else:
            landmarks[:,i] = landmarks[:,i]/600
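To make this last question concrete, here is a minimal sketch of what I imagine a shared helper could look like. It assumes the landmarks are pixel `(x, y)` pairs, either as a `(batch, 4, 2)` tensor or flattened to `(batch, 8)` with x at even indices, and that the images are 800 pixels wide and 600 pixels high (as the `[8, 600, 800]` comment suggests). The names `normalize_landmarks` and `denormalize_landmarks` are hypothetical and not part of any library.

```python
import torch

# Assumed image size in pixels, taken from the [8, 600, 800] comment above.
IMG_W, IMG_H = 800.0, 600.0


def _scale_for(landmarks: torch.Tensor) -> torch.Tensor:
    # One divisor per coordinate: x is scaled by the width, y by the height.
    return torch.tensor([IMG_W, IMG_H], dtype=torch.float32, device=landmarks.device)


def normalize_landmarks(landmarks: torch.Tensor) -> torch.Tensor:
    """Map pixel coordinates to [0, 1]. Accepts (B, 4, 2) or flat (B, 8)."""
    pairs = landmarks.float().reshape(landmarks.size(0), -1, 2)
    return (pairs / _scale_for(landmarks)).reshape(landmarks.shape)


def denormalize_landmarks(landmarks: torch.Tensor) -> torch.Tensor:
    """Inverse of normalize_landmarks: map [0, 1] values back to pixels."""
    pairs = landmarks.float().reshape(landmarks.size(0), -1, 2)
    return (pairs * _scale_for(landmarks)).reshape(landmarks.shape)


# Round trip on one made-up sample with four landmarks.
pixels = torch.tensor([[[400.0, 300.0], [800.0, 600.0], [0.0, 0.0], [200.0, 150.0]]])
normalized = normalize_landmarks(pixels)
restored = denormalize_landmarks(normalized)
print(normalized)
print(restored)
```

If this is right, the evaluation cell would call `denormalize_landmarks(best_network(images).cpu())` on the predictions instead of `(... + 0.5) * 224`, since `(x + 0.5) * 224` only inverts a normalization of the form `x / 224 - 0.5`, which is not what the training cell applies. The ground-truth landmarks coming from the loader would then be plotted unchanged if they are still in pixels.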