Hello, I was recently doing some image pre-processing using PyTorch. I use PIL to load a 1-channel image from disk, then use PIL.ImageStat.Stat(img).mean and PIL.ImageStat.Stat(img).stddev to obtain the mean and standard deviation of the image. After this, I convert the PIL image into a tensor using torchvision.transforms.ToTensor(). Finally, I use torchvision.transforms.Normalize() to normalize the tensor image.

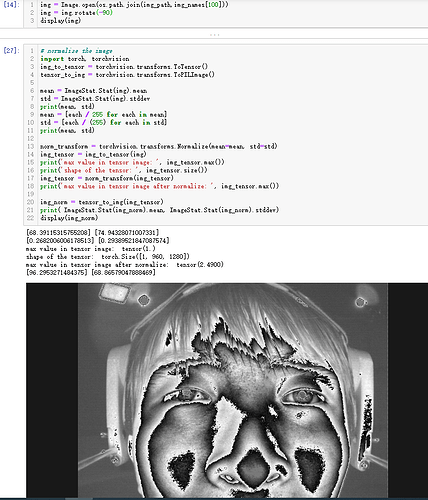

The problem is that the maximum pixel value of the tensor image ends up above 1.0, and the normalized image looks weird. Did I make any mistakes?

Many thanks!

transforms.Normalize subtracts the provided mean and divides by the provided std for each channel. If those statistics match the tensor, the result has zero mean and unit variance, so individual values can fall outside [-1, 1].
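To make the arithmetic concrete, here is a minimal sketch in plain Python (no PyTorch needed) of the `(x - mean) / std` step that Normalize applies. The pixel values are made up for illustration. It also assumes an 8-bit image, for which ToTensor() rescales pixels to [0, 1] while PIL's ImageStat reports statistics on the 0-255 scale, so the statistics would need dividing by 255 before being passed to Normalize.

```python
import statistics

# Hypothetical 8-bit grayscale pixel values (0-255), standing in for the loaded image.
pixels_8bit = [0, 10, 20, 30, 255]

# Mimic ToTensor() on an 8-bit image: rescale pixels to [0, 1].
pixels = [p / 255.0 for p in pixels_8bit]

# The statistics must be on the same [0, 1] scale as the tensor.
# (Statistics taken on the 0-255 scale would have to be divided by 255 first.)
mean = statistics.fmean(pixels)
std = statistics.pstdev(pixels)  # population standard deviation

# Same arithmetic as Normalize(mean, std): (x - mean) / std for every pixel.
normalized = [(p - mean) / std for p in pixels]

# The bright outlier lands well above 1.0 even though the input was in [0, 1].
print("min:", min(normalized), "max:", max(normalized))
```

With these values the single bright pixel ends up near 2, which is expected behavior rather than a bug: normalization centers and rescales the data, it does not bound it.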

Your output might be clipped by the display function, which would create these saturated regions.
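If you only want to inspect the normalized image visually, one option is to min-max rescale a copy to [0, 1] before displaying it. The sketch below uses made-up values and assumes the display function clips to [0, 1], which may not match what yours actually does.

```python
# Hypothetical normalized pixel values, several of them outside [0, 1].
normalized = [-0.65, -0.55, -0.45, -0.34, 1.99]

# Clipping to [0, 1] (assumed display behavior) collapses out-of-range values,
# which is what produces flat, saturated regions.
clipped = [min(max(v, 0.0), 1.0) for v in normalized]

# Min-max rescaling maps the whole value range onto [0, 1] for viewing only.
lo, hi = min(normalized), max(normalized)
rescaled = [(v - lo) / (hi - lo) for v in normalized]

print("clipped: ", clipped)
print("rescaled:", rescaled)
```

The rescaled copy is for display only; the tensor fed to the network should stay normalized.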

Thank you! In that case, is it correct to normalize before I send the tensor image to the CNN?

That’s the standard approach, as it often helps training.