I’m currently training NLP classification models with BERT-pytorch on my GTX 1080 Ti GPUs.

In Windows Task Manager, the Performance tab shows my GPUs at extremely low utilization. GPU memory usage rises as expected, but utilization consistently reads very low (0-5%).

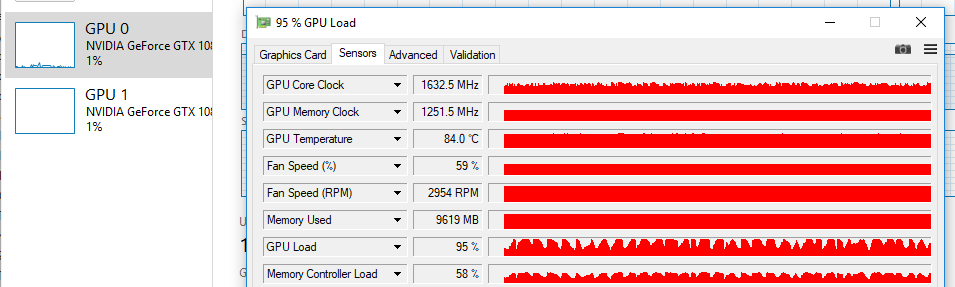

Interestingly, a different monitoring app, such as GPU-Z from TechPowerUp, reports much more plausible numbers for the same GPUs: 50-100% GPU load.

I’m wondering whether anyone has insight into why these GPU monitoring tools disagree.