Hey guys,

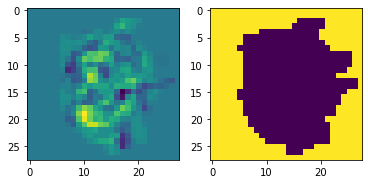

I have a very simple model, but I'm struggling to understand why I get zero gradients for the first layer and very low gradients for the last one. My code is:

    import os
    import torch
    from torch import nn
    from torch import optim
    from torch.utils.data import DataLoader
    from torchvision import datasets, transforms

    device = 'cuda' if torch.cuda.is_available() else 'cpu'

    # MNIST train/test splits, converted to tensors with values in [0, 1]
    training_set = datasets.MNIST(
        root="MNIST",
        train=True,
        download=True,
        transform=transforms.ToTensor()
    )
    test_set = datasets.MNIST(
        root="MNIST",
        train=False,
        download=True,
        transform=transforms.ToTensor()
    )

    train_dataloader = DataLoader(training_set, batch_size=64, shuffle=True)
    test_dataloader = DataLoader(test_set, batch_size=64, shuffle=True)

    # Two-layer MLP: 784 -> 50 -> 10
    model = nn.Sequential(
        nn.Linear(28*28, 50),
        nn.ReLU(),
        nn.Linear(50, 10)
    )

    optimizer = optim.SGD(model.parameters(), lr=0.1)
    criterion = nn.CrossEntropyLoss()

    # Take a single batch and flatten each image to a 784-dimensional vector
    input, output = next(iter(train_dataloader))
    input = input.view(input.shape[0], input.shape[2]*input.shape[3])

    # One optimization step
    optimizer.zero_grad()
    logits = model(input)
    loss = criterion(logits, output)
    loss.backward()
    optimizer.step()
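To make the question concrete, here is a minimal sketch of how the per-layer gradients could be inspected after `loss.backward()`. It is not the exact setup above: to keep it self-contained it uses a synthetic batch (random pixels with an always-zero 4-pixel border, an assumption meant to mimic MNIST's empty margins) instead of the real data, and the names `grad_stats`, `dead_pixels` and `dead_columns_are_zero` are only illustrative. It suggests one possible explanation rather than a confirmed diagnosis: the first-layer weight gradient is the outer product of the backpropagated error and the input, so every column that belongs to a pixel that is zero across the whole batch is exactly zero.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Synthetic stand-in for one MNIST batch (assumption, not the real data):
# 64 images with values in [0, 1) and a 4-pixel border that is always zero.
images = torch.rand(64, 1, 28, 28)
mask = torch.zeros(28, 28)
mask[4:24, 4:24] = 1.0
images = images * mask
labels = torch.randint(0, 10, (64,))

# Same architecture as in the post
model = nn.Sequential(
    nn.Linear(28 * 28, 50),
    nn.ReLU(),
    nn.Linear(50, 10),
)
criterion = nn.CrossEntropyLoss()

# Forward and backward pass on the flattened batch
inputs = images.view(images.shape[0], -1)
loss = criterion(model(inputs), labels)
loss.backward()

# Gradient norm and fraction of exactly-zero entries for every parameter
grad_stats = {}
for name, param in model.named_parameters():
    grad = param.grad
    grad_stats[name] = {
        "norm": grad.norm().item(),
        "zero_fraction": (grad == 0).float().mean().item(),
    }
    print(f"{name}: norm={grad_stats[name]['norm']:.4e}, "
          f"zero fraction={grad_stats[name]['zero_fraction']:.2%}")

# Pixels that are zero for every sample in the batch
dead_pixels = inputs.abs().sum(dim=0) == 0       # shape (784,)
first_layer_grad = model[0].weight.grad          # shape (50, 784)

# The gradient columns for those pixels should all be exactly zero
dead_columns_are_zero = bool((first_layer_grad[:, dead_pixels] == 0).all())
print("columns for always-zero pixels are all zero:", dead_columns_are_zero)
```

If the real MNIST batch shows the same pattern, the zeros in the first layer would come from the blank image margins rather than from a bug. Small gradient values elsewhere may also be partly explained by `nn.CrossEntropyLoss` averaging the loss over the batch by default (`reduction='mean'`).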

Can someone spot the mistake? Thank you.