Really? Including .clone()? I’ve seen it in my graph and thought that was super strange (related question: why is the clone operation part of the computation graph? Is it even differentiable?).
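For what it's worth, here is a minimal sketch of one way to check this, assuming only core PyTorch. As far as autograd is concerned, clone() behaves like an identity op: it is recorded in the graph, and the gradient passes through it unchanged. The names `src`, `copied`, and `detached` are illustrative and not from the snippet below.

```python
import torch

src = torch.randn(1, requires_grad=True)

# clone() is tracked by autograd, so the result is a non-leaf tensor with a grad_fn
copied = src.clone()
grad_fn_name = type(copied.grad_fn).__name__
print(grad_fn_name)        # name of the backward node recorded for clone()
print(copied.is_leaf)      # non-leaf, because it was produced by a tracked op

# Backprop through the clone: d(copied)/d(src) is 1, so src.grad should be all ones
copied.sum().backward()
print(src.grad)

# For comparison, detach() cuts the tensor out of the graph entirely
detached = src.clone().detach()
print(detached.requires_grad, detached.grad_fn)
```

So the clone node in the torchviz picture is expected: it is there because the copy still has to route gradients back to the tensor it was copied from.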

#%%

## Q:

import torch

from torchviz import make_dot

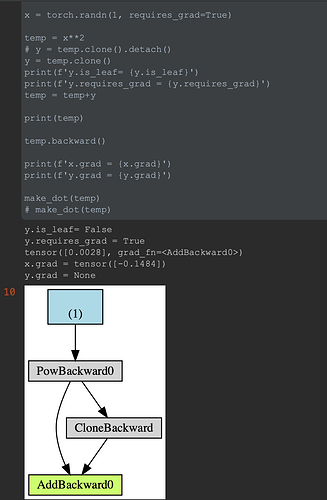

x = torch.randn(1, requires_grad=True)

temp = x**2

# y = temp.clone().detach()

y = temp.clone()

print(f'y.is_leaf = {y.is_leaf}')

print(f'y.requires_grad = {y.requires_grad}')

temp = temp+y

print(temp)

temp.backward()

print(f'x.grad = {x.grad}')

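# y is a non-leaf tensor, so y.grad is None here unless y.retain_grad() is called before backward()
print(f'y.grad = {y.grad}')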

make_dot(temp)

# make_dot(temp)