Hi, I am training a custom CNN and I need to use a linear activation function, but I couldn't find one in PyTorch. I know this activation just passes its input through to its output, so should I use nn.Linear(nin, nin), nn.Identity(), or nothing at all?
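To make the options concrete, here is a small sketch of what I understand each one to do. The feature size `nin = 4` and the dummy batch are arbitrary values chosen only for illustration. As far as I can tell, nn.Identity() has no parameters and returns its input unchanged (the same as doing nothing), while nn.Linear(nin, nin) is a trainable layer with its own weights and bias rather than an activation:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # fixed seed so the sketch is repeatable

nin = 4                  # hypothetical feature size, for illustration only
x = torch.randn(2, nin)  # dummy batch of 2 samples

identity = nn.Identity()      # no parameters; returns its input unchanged
linear = nn.Linear(nin, nin)  # learnable weight and bias; a layer, not an activation

same_as_input = torch.equal(identity(x), x)
n_identity_params = sum(p.numel() for p in identity.parameters())
n_linear_params = sum(p.numel() for p in linear.parameters())

print(same_as_input)      # True
print(n_identity_params)  # 0
print(n_linear_params)    # 20 (nin * nin weights + nin biases)
```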

While I am training my network, the training and validation metrics stay nearly constant, and I think this is caused by incorrect use of my activation functions.

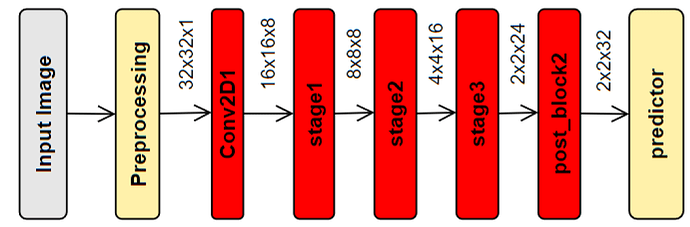

Here is my model:

and here is my code:

class Block(nn.Module):

def __init__(self, in_channels, out_channels, exp=1, stride=1, type=''):

super().__init__()

self.t = type

self.stride = stride

self.inc, self.outc = in_channels, out_channels

self.exp = exp

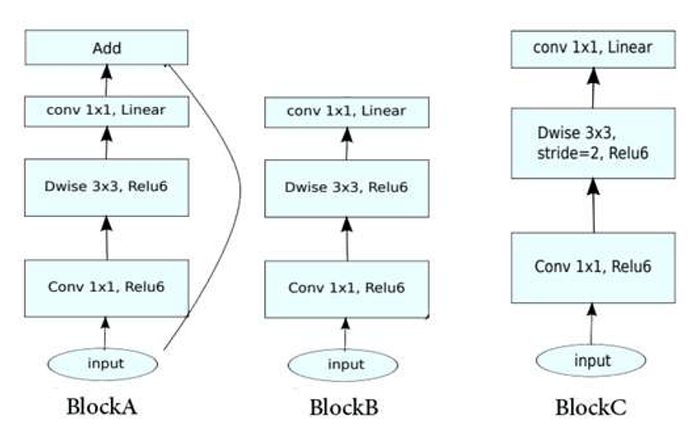

self.blockc = nn.Sequential(

nn.Conv2d(self.inc, self.inc* self.exp, kernel_size=1),

nn.ReLU6(inplace=True),

nn.Conv2d(self.inc * self.exp, self.inc * self.exp, kernel_size=3, groups= self.inc * self.exp, stride= self.stride, padding=1),

nn.ReLU6(inplace=True),

nn.Conv2d(self.inc * self.exp, self.outc, kernel_size=1),

nn.Identity())#,

def forward(self, x):

out = self.blockc(x)

if self.t == 'A':

out = torch.add(out,x)

return out

class Model(nn.Module):

def __init__(self):

super().__init__()

self.conv2d1 = nn.Conv2d(in_channels=1, out_channels=8, kernel_size=3,padding=1, stride=2)

self.r = nn.ReLU6()

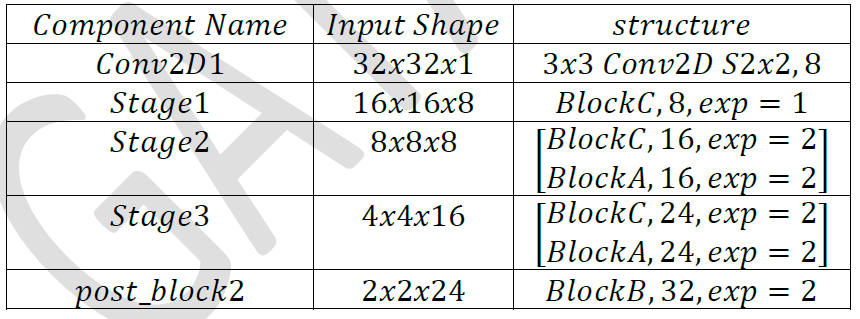

self.stage1 = Block(8, 8, exp=1, stride=2, type='C')

self.stage2 = nn.Sequential(

Block(8, 16, exp=2, stride=2, type='C'),

Block(16, 16, exp=2, type='A'))

self.stage3 = nn.Sequential(

Block(16, 24, exp=2, stride=2, type='C'),

Block(24, 24, exp=2, type='A'))

self.post_block2 = Block(24, 32, exp=2, type='B')

self.fc = nn.Linear(128, 10)

def forward(self, x):

out = self.conv2d1(x)

out = self.r(out)

out = self.stage1(out)

out = self.stage2(out)

out = self.stage3(out)

out = self.post_block2(out)

out = out.view(-1, 128)

out = self.fc(out)

return out
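The hard-coded 128 in out.view(-1, 128) and nn.Linear(128, 10) only matches if the last feature map is 32 x 2 x 2. Below is a minimal sketch of how that could be checked with a dummy forward pass. It assumes a 1 x 32 x 32 input (the input size is an assumption, it is not stated above) and uses a simplified stand-in named `features` with the same four stride-2 steps and 32 output channels, not the full model:

```python
import torch
import torch.nn as nn

# Simplified stand-in for the feature extractor: four stride-2 convolutions
# ending in 32 channels. It only mimics the downsampling pattern of the model.
features = nn.Sequential(
    nn.Conv2d(1, 8, kernel_size=3, padding=1, stride=2),
    nn.ReLU6(),
    nn.Conv2d(8, 8, kernel_size=3, padding=1, stride=2),
    nn.Conv2d(8, 16, kernel_size=3, padding=1, stride=2),
    nn.Conv2d(16, 24, kernel_size=3, padding=1, stride=2),
    nn.Conv2d(24, 32, kernel_size=1),
)

with torch.no_grad():
    dummy = torch.zeros(1, 1, 32, 32)  # assumed input size, for illustration only
    feat = features(dummy)

# Number of features per sample after flattening everything except the batch dim
flat_features = torch.flatten(feat, 1).shape[1]

print(tuple(feat.shape))  # (1, 32, 2, 2)
print(flat_features)      # 128
```

If the printed size differs from 128 for the real input, view(-1, 128) would either raise an error or silently mix samples across the batch dimension, so flattening with torch.flatten(out, 1) and sizing the final nn.Linear from this value may be safer.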