Hello guys:

I am trying to run a machine learning program with PyTorch on NVIDIA RTX A6000 graphics cards, but I ran into the following problem:

Traceback (most recent call last):

File "D:\jktong\LCNIl-FIT-exp-size\250by250\Train_directions_complex_gpu_large_u7.3.py", line 223, in loss.backward()

File "c:\Users\hp\anaconda3\lib\site-packages\torch\tensor.py", line 245, in backward torch.autograd.backward(self, gradient, retain_graph, create_graph, inputs=inputs)

File "c:\Users\hp\anaconda3\lib\site-packages\torch\autograd\__init__.py", line 145, in backward Variable._execution_engine.run_backward(

RuntimeError: cuDNN error: CUDNN_STATUS_NOT_INITIALIZED

The same program runs without problems on three NVIDIA RTX 2080 Ti graphics cards, so the code itself does not seem to be the cause.
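To separate our program from the environment, a small check like the one below could be run on the A6000 machine. It is only a sketch, not part of our training script: the function name `cudnn_smoke_test` is made up for illustration, and it assumes that a single tiny convolution with a backward pass is enough to make PyTorch initialize cuDNN. If this also fails, the problem is probably in the installation rather than in our code.

```python
def cudnn_smoke_test():
    """Run one tiny convolution forward/backward pass on the GPU.

    Returns a short status string instead of raising, so the result
    can be pasted into a forum post. (Hypothetical helper name.)
    """
    try:
        import torch
    except ImportError:
        return "torch not installed"

    if not torch.cuda.is_available():
        return "CUDA not available"

    try:
        # A convolution on a CUDA tensor normally goes through cuDNN.
        conv = torch.nn.Conv2d(1, 1, kernel_size=3).cuda()
        x = torch.randn(1, 1, 8, 8, device="cuda")
        conv(x).sum().backward()
        # Wait for the GPU so asynchronous errors surface here.
        torch.cuda.synchronize()
    except RuntimeError as err:
        return f"failed: {err}"

    return "ok"


print(cudnn_smoke_test())
```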

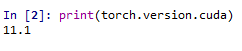

Our workstation has CUDA 11.1, cuDNN 11.3, and PyTorch 1.8.2.
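The versions above are the ones installed on the workstation. The versions that the PyTorch build itself was compiled against can differ, so a sketch like the following could be used to print what PyTorch reports. The helper name `report_versions` is made up for illustration. The RTX A6000 is an Ampere card with compute capability 8.6, so I would expect the architecture list to need an `sm_80` or `sm_86` entry, but that is my assumption about what to look for.

```python
def report_versions():
    """Collect the versions and GPU details that PyTorch itself reports.

    Hypothetical helper; returns a plain dict so it can be printed or logged.
    """
    info = {}
    try:
        import torch
    except ImportError:
        info["torch"] = None
        return info

    info["torch"] = torch.__version__
    # CUDA version this PyTorch build was compiled with (None for CPU builds).
    info["cuda_build"] = torch.version.cuda
    # cuDNN version PyTorch can load, as an integer, or None if unavailable.
    info["cudnn"] = torch.backends.cudnn.version()
    info["cuda_available"] = torch.cuda.is_available()

    if info["cuda_available"]:
        info["devices"] = [
            (torch.cuda.get_device_name(i), torch.cuda.get_device_capability(i))
            for i in range(torch.cuda.device_count())
        ]
        # get_arch_list may be missing in older releases, so look it up defensively.
        get_arch_list = getattr(torch.cuda, "get_arch_list", None)
        info["arch_list"] = get_arch_list() if get_arch_list else None

    return info


for key, value in report_versions().items():
    print(f"{key}: {value}")
```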

I have searched for the recommended PyTorch version for this graphics card, but the card seems to be too new. Could you please give us some suggestions for solving the problem, such as a suitable PyTorch version for this GPU? Thank you!